
When tools claim to deliver real-time answers pulled from the web, backed by citations, we tend to assume there is a human-level guarantee of accuracy. Over months of research, I found that Perplexity’s growth story is less about removing humans from the loop and more about hiding them behind citations, incentives, and design choices that feel authoritative but are far from flawless.

Here, I will argue this point directly: Perplexity’s credibility is anchored in systems that mimic human judgment without clearly revealing where humans actually influence the product. Along the way, I will show where evidence exists, where it does not, and what that reveals about the limits of automated search.

A New Player in the Search Paradigm

Perplexity AI did not emerge quietly. In the span of a few years, it went from startup curiosity to one of the most talked-about entrants in the evolving landscape of generative search engines. Where traditional search tools like Google and Bing rely on ranked lists of links and snippets, Perplexity offers users direct answers backed by numbered citations that link to the original sources. This is not a cosmetic feature. It is foundational to how the product is positioned and how users perceive credibility.

In a typical Perplexity result, you type a query, and the engine synthesizes an answer by examining information from around the web in real time, then lists inline sources that you can click to verify claims. This hybrid of retrieval and generation stands in stark contrast to the legacy search model, which leaves users to click through dozens of results and judge relevance themselves. The company and its evangelists openly frame this model as a leap forward for research-oriented information discovery.

The numbers suggest that this model has resonated with many users. As per Courthouse News1, Perplexity reached 120 million registered users by 2025, with 8 million new users joining monthly and 20 million weekly active users across platforms. Its user base grew especially quickly in India, surging 310 percent in 2025 alone, and the education sector accounts for a significant portion of active usage. Daily active user engagement exceeded 16 million, with session durations averaging over 7 minutes, which suggests that people use the platform for in-depth research rather than cursory queries.

Even as it grows, Perplexity’s absolute traffic remains far smaller than that of traditional engines like Google, which handles billions of searches daily. University of California2 reports that Perplexity’s query volumes have been measured in the hundreds of millions per month, a fraction of mainstream search traffic. Nevertheless, its model is clearly gaining traction within a subset of users who prioritize exploratory research and source traceability.

The interface itself reinforces this shift. Rather than pages of ranked links where relevance must be interpreted through snippets, Perplexity delivers answers that read like a narrative summary but with embedded URLs. Users can click through to original sources within the answer view instead of leaving the query page to hunt for context. Product documentation and analyst commentary emphasize this transparency as a differentiator in the crowded field of AI assistants.

From a user perspective, that difference matters. For example, Reddit discussions reveal firsthand that people appreciate being spared multiple browser tabs and keyword fiddling when pursuing specific, complex questions. One detailed comment contrasted Perplexity’s conversational responses and faster retrieval against what feels like a more laborious Google workflow:

“It handles long-tail searches better than anything I’ve used… Perplexity just handles those specific questions with ease, and then the ability to follow up on any answer endlessly.”

This difference in interaction style has fueled enthusiastic adoption among knowledge workers, students, and professionals who are tired of scrolling through ten blue links to find what they want. A community-sourced comment noted that Perplexity’s interface even streamlined tasks ranging from technical support for hardware setups to culinary queries.

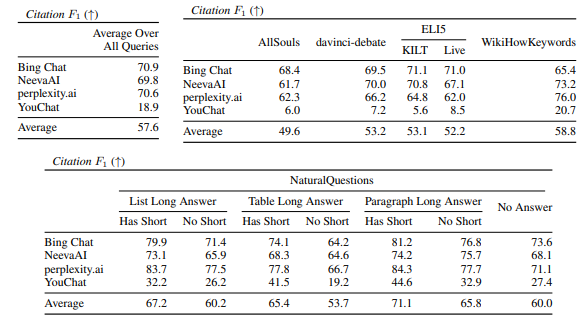

Yet this citation-first paradigm reveals an important tension. Citations provide visibility into source material, but they do not equate to verified accuracy in the human sense. Being able to click a link does not ensure that the model interpreted that source correctly, nor that it selected the most authoritative evidence. Indeed, independent research on generative search engines found that even with inline citations, a substantial share of generated content contains statements not fully supported by the cited sources. In systematic evaluations, only roughly half of the generated sentences were fully supported by citations, while about three out of every four citations correctly backed their associated sentences.

That research highlights Perplexity’s core tension: transparency without guarantees is not the same as human fact-checking. The model’s outputs look verifiable and feel credible because they carry links, but the underlying mechanisms that decide what to present and how to interpret source content are still automated and subject to the well-known limitations of large language models.

This explains why, in some circles, Perplexity’s model has been hailed as a “citation-first revolution” that promises to save professionals time and reduce misinformation by surfacing sources alongside answers. But the experiences of real users, and independent evaluations of generative search accuracy, tell a more complex story—one in which interface design and transparency signals do a lot of credibility work, even when the system’s interpretation of sources can be flawed.

Hyperlinks are elegant. They feel scholarly. But they are not equivalent to a human research assistant cross-checking every claim before presenting it.

When Transparency Isn’t Enough

Even with citations, the answer is still machine-generated. That means when the model interprets source content, it can misrepresent what the source says or fabricate elements of an argument, because language models do not understand information the way humans do. A study published in the ACL Anthology3 that compared generative search engines found that engines like Perplexity may be fluent and appear informative, yet they “frequently contain unsupported statements and inaccurate citations,” with only about half of the sentences fully backed by citations in human evaluations.

Forum threads from actual Perplexity users provide vivid windows into how this plays out in practice.

One Reddit4 user detailed a research workflow that ran head-on into Perplexity’s limits:

The user pasted around a dozen real, working links from major outlets like The New York Times and PBS and asked Perplexity to verify them. It replied that some did not exist. When the user then pasted one of those same links on its own, Perplexity found it. This happened again and again.

That may sound trivial on the surface, but it exposes a deeper problem: reliability does not scale if the engine cannot consistently process what a human could instantly verify.

Another user reported contradictory answers to the same question, with Perplexity refusing to provide source links in one answer and then giving different information entirely in another.

Even more concerning, there are widely circulated reports of the system fabricating information about medical reviews that did not exist in the cited sources.

These are not isolated technical complaints. They are credibility failures by any reasonable definition of the term. They also suggest that Perplexity’s generation layer is not reliably constrained by its citation layer, as the product messaging implies.

Credibility Signals vs. Human Judgement

When Perplexity cites sources, users are meant to assume that a form of verification has occurred. It looks like human review, but there is no public documentation from Perplexity explaining any structured human-in-the-loop review process that vets answers before users see them. What exists instead are signals.

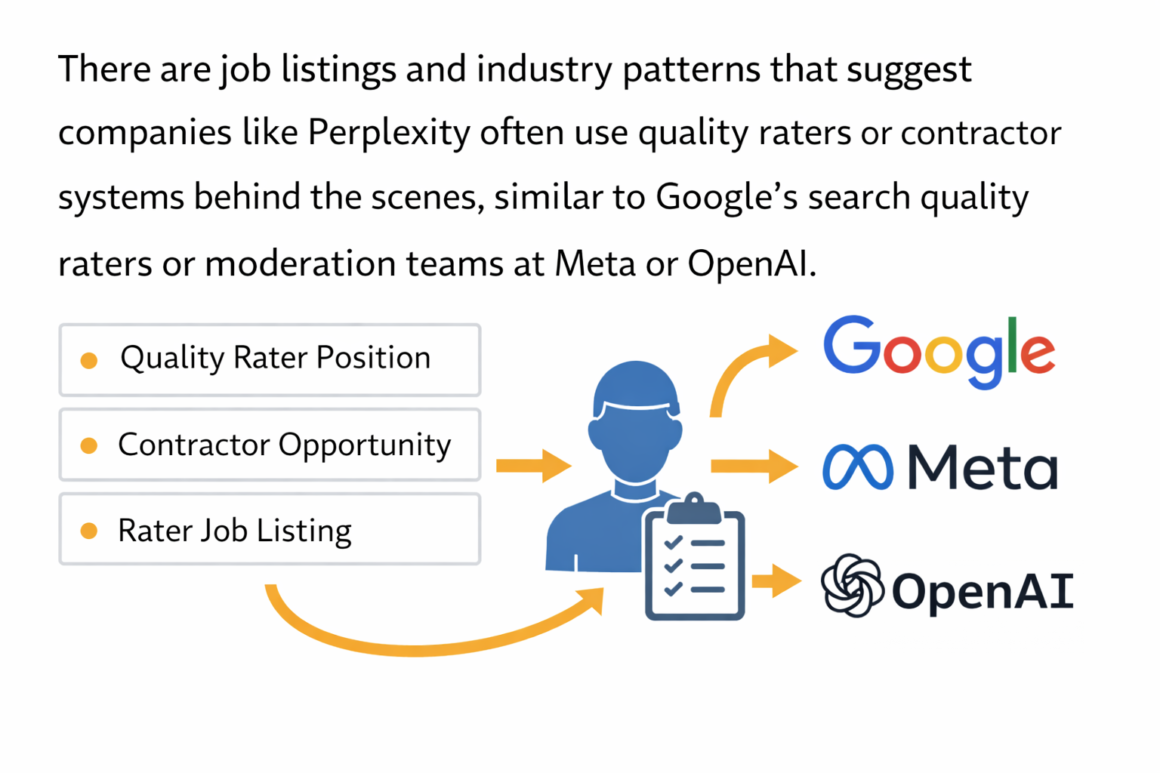

There are job listings and industry patterns that suggest companies like Perplexity often use quality raters or contractor systems behind the scenes, similar to Google’s search quality raters or moderation teams at Meta or OpenAI. But unlike those companies, Perplexity does not publish detailed workflows, guidelines, or audit mechanisms for how human labor supports its outputs. So we have signals and incentives rather than disclosure.

Academic research archived in the NIH’s PubMed Central5 shows that generative search engines are evaluated by humans in formal studies. For example, a systematic comparison of several assistants, including Perplexity, involved human judges auditing whether responses aligned with credible sources. That kind of evaluation exists in research, not as an internal system Perplexity users can see.

When humans do touch data, it tends to show up indirectly: in labeling training data, shaping search indices, or reviewing models for safety, rather than in live answer verification. But the product itself never details when a particular answer is human-checked before being delivered.

So if the human role is real, it is deeply opaque. That opacity becomes a credibility layer by implication rather than clear documentation.

Legal Pressure and the Data Race

Another revealing dimension of Perplexity AI’s credibility story is how it acquires and uses data from around the web. The perceived quality of any search engine, AI assistant, or “answer engine” depends heavily on access to high-quality informational content. For Perplexity, that means drawing upon the live web in real time to generate answers with citations. But that very dependency has led to intense legal and ethical disputes over whether and how the company obtains and uses that content.

Perplexity’s model differs from legacy search engines because it synthesizes answers from online content rather than simply listing links. People expect that means the system has lawful access to whatever it cites. Yet platforms and publishers are increasingly challenging that assumption in court.

The most high-profile example came in October 2025, when, as Reuters6 reported, Reddit filed a federal lawsuit against Perplexity AI and several data-scraping companies in the Southern District of New York, alleging “industrial-scale, unlawful circumvention of data protections” to harvest Reddit content. The complaint specifically invokes the Digital Millennium Copyright Act’s anti-circumvention provisions (17 U.S.C. §1201) and also asserts claims for unjust enrichment and unfair competition. According to the filing, Perplexity and the other defendants allegedly used scraped Reddit material without permission, bypassing technological controls to obtain it.

Reddit’s attorneys described the alleged conduct in stark terms, saying the defendants masked their identities and rotated IP addresses to scrape billions of Google search results pages (“SERPs”) containing Reddit text, images, and URLs — content Reddit claims was then incorporated into Perplexity’s answer engine. In a vivid portion of the complaint, the companies involved are likened to “would-be bank robbers”, bypassing barriers designed to protect content rights holders.

One widely cited test involved Reddit posting a piece of content that was accessible only through Google search results. Within hours, questions submitted to Perplexity produced that content in answers, which Reddit cited as evidence that its protections had been circumvented via SERP scraping.

Reddit’s chief legal officer, Ben Lee, framed the dispute as part of a larger industry battle over quality human content:

“AI companies are locked in an arms race for quality human content — and that pressure has fueled an industrial-scale data-laundering economy.”

Reddit is seeking a permanent injunction, financial damages, and restrictions preventing defendants from continuing unauthorized use or sale of its scraped data.

CNBC7 reported that Perplexity, for its part, denied the allegations. The company argued that it does not train foundational AI models on scraped content and that it supports an “open Internet” model for access to public information. In official statements and on forums, company representatives have rejected claims that they acted improperly, characterizing the lawsuit as part of broader data-licensing negotiations rather than clear wrongdoing.

The Reddit lawsuit is significant not just because of its scale but because Reddit already has licensing arrangements with some major AI players. Companies like Google and OpenAI have negotiated paid access to Reddit’s data for training models, underscoring that not all access to user-generated content is treated the same way legally.

Legal disputes have not been limited to formal lawsuits. Cloudflare8, a major web infrastructure provider, publicly documented behavior by Perplexity’s crawling systems that raised industry concerns about compliance and transparency. In August 2025, Cloudflare published findings suggesting that Perplexity’s web crawlers attempted to hide their identity and evade site blocks when restricted.

According to Cloudflare, when Perplexity’s declared bot user agents were blocked via robots.txt or firewall rules, other requests continued coming in, masquerading as standard browsers (for example, using user-agent strings identical to those of real Chrome browsers) and rotating through IP addresses not associated with Perplexity’s official infrastructure. This behavior, Cloudflare said, ignored standard web crawling norms and constituted “stealth crawling”, a practice that undermines website owners’ ability to control access to their content.

Cloudflare ultimately de-listed Perplexity’s bot as a verified bot and added new rules to block the stealth crawler on behalf of site owners, because the observed behavior was inconsistent with expected crawler transparency. The blog post noted that undeclared crawlers skirted robots.txt directives and persisted across thousands of domains with millions of daily requests.

This conflict underscores a broader technical and legal tension: websites expect automated agents to respect explicit controls like robots.txt files and firewall blocks. When AI companies deploy crawling infrastructure that may skirt or circumvent those preferences, the result is not just a technical problem but a rights and consent issue for content owners.
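To make the robots.txt point concrete, here is a minimal sketch in Python using the standard library's `urllib.robotparser`. The bot name `ExampleAnswerBot`, the rules, and the URL are hypothetical and are not taken from Cloudflare's report or from any real site. The sketch only illustrates why the mechanism depends on honest self-identification: the rules are keyed to the user-agent name a crawler chooses to declare.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: block one declared bot, allow everyone else.
ROBOTS_TXT = """\
User-agent: ExampleAnswerBot
Disallow: /

User-agent: *
Allow: /
"""

# Hypothetical page a crawler might want to fetch.
ARTICLE_URL = "https://example.com/articles/some-story"

# A generic browser-style user-agent string, used here only for illustration.
BROWSER_UA = (
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/124.0.0.0 Safari/537.36"
)


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt rules from a string (no network access needed)."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)

# A crawler that announces itself honestly is told not to fetch the page.
declared_allowed = parser.can_fetch("ExampleAnswerBot", ARTICLE_URL)

# The same request sent under a browser-style user agent falls through to
# the wildcard rule, so the robots.txt check alone no longer blocks it.
disguised_allowed = parser.can_fetch(BROWSER_UA, ARTICLE_URL)

print("declared bot allowed:", declared_allowed)
print("browser-style agent allowed:", disguised_allowed)
```

In this sketch the declared bot is refused and the browser-style agent is allowed. robots.txt is a voluntary convention, which is why site owners also rely on firewall rules and network-level fingerprinting when a crawler's declared identity cannot be trusted.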

The legal pressure on Perplexity has extended beyond Reddit. Major news organizations, including The New York Times9, Dow Jones, and New York Post, have reportedly initiated lawsuits alleging unauthorized use of copyrighted journalism to power AI tools, including Perplexity’s retrieval-augmented generation systems. These plaintiffs argue that delivering full or partial texts from behind paywalls constitutes a form of copyright infringement because it undercuts the pay models that fund newsroom operations.

On social media and public forums, users have shared snippets of these developments as they unfold, such as news that The New York Times is seeking the court’s intervention to stop Perplexity from copying its subscriber-only material.

Taken together, these legal actions and infrastructure disputes reflect a larger industry debate over how AI systems should access and use the vast troves of human-generated content on the web. Websites, platforms, and publishers increasingly see their content as commercially valuable assets, not free resources for automated systems to ingest without consent or compensation.

This dynamic is playing out in federal courts and infrastructure platforms alike, raising questions about how the rights of content creators, platform users, and publishers should be balanced against the demands of automated systems that rely on that content to function. The outcomes of these cases, especially against Perplexity and similar AI search providers, may set precedents that shape data licensing standards across the industry.

In this environment, credibility becomes not only a technical challenge but also a legal and ethical one. If an answer engine’s outputs are built upon disputed or contested data acquisition practices, then the underlying credibility of those answers is linked not just to algorithmic accuracy but to the lawful source and consent-based use of the content it cites.

Where or When Are People Involved?

If you look for mentions of “human review” or “moderators” in Perplexity’s official help pages and product documentation, you will find language about safety filters and abuse prevention, not explicit statements like “trained humans review each answer before it goes out.” The documentation focuses on how the product retrieves and synthesizes data, not on human-in-the-loop checks.

That does not mean Perplexity employs no humans at all. It almost certainly has staff and contractors who work on model evaluation, dataset curation, quality benchmarking, and safety monitoring. Those are standard roles in AI companies. But that labor is performance validation over time, not live answer certification.

What the evidence does show is community-level human feedback around the product, often in external forums. Multiple threads show users complaining about errors, hallucinations, and perceived inconsistencies. These threads are not internal channels, but they act like a crowdsourced review mechanism for the product’s outputs:

“Perplexity’s Deep Search hallucinates more often than it should and UI glitches make it unstable.”

“Is it serving old news while claiming freshness? Not cool.”

That kind of user scrutiny is an external, ad hoc, user-driven form of review, far different from structured human validation.

What Users Expect vs. What Automation Delivers

Part of the mismatch between expectation and reality comes from how Perplexity is marketed and framed. Citations convey credibility and imply internal verification, but they do not inherently enforce correctness.

Research10 on generative search engines shows that even systems with inline citations may have only about 51.5 percent of their generated sentences fully supported by citations and only 74.5 percent of citations accurately backing their statements. That means even the best engines are still wrestling with verifiability gaps.
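The two percentages measure different things, and a small worked example may help keep them apart. The sketch below is a simplified illustration in Python, not the study's actual evaluation code. The class names, function names, and sample annotations are all hypothetical. It assumes human annotators have already judged each sentence and each citation.

```python
from dataclasses import dataclass, field


@dataclass
class Citation:
    # Human judgment: does the cited page actually back the sentence?
    supports_sentence: bool


@dataclass
class Sentence:
    # Human judgment: is the sentence fully backed by its citations?
    fully_supported: bool
    citations: list[Citation] = field(default_factory=list)


def sentence_support_rate(sentences: list[Sentence]) -> float:
    """Share of generated sentences that are fully supported by citations."""
    if not sentences:
        return 0.0
    return sum(s.fully_supported for s in sentences) / len(sentences)


def citation_accuracy(sentences: list[Sentence]) -> float:
    """Share of individual citations that back their associated sentence."""
    citations = [c for s in sentences for c in s.citations]
    if not citations:
        return 0.0
    return sum(c.supports_sentence for c in citations) / len(citations)


# Hypothetical annotations for one four-sentence answer.
sample = [
    Sentence(True, [Citation(True), Citation(True)]),
    Sentence(True, [Citation(True)]),
    Sentence(False, [Citation(False)]),  # cited, but the source does not say this
    Sentence(False, []),                 # no citation at all
]

print("sentence support rate:", sentence_support_rate(sample))
print("citation accuracy:", citation_accuracy(sample))
```

In this toy answer, half of the sentences are fully supported while three of the four citations are accurate. An answer can therefore look well sourced, since most of its links check out, while a large share of what it actually says remains unverified. That is the same shape of gap the study reports.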

This resonates with user complaints about inconsistency and fallibility. One forum thread described how Perplexity would forget context between queries, fail to retain crucial information, and produce answers that looked grounded but were inconsistent and sometimes incorrect.

Traditional search engines may lack fluent explanation, but they rely on decades of human curation in ranking and indexing. AI search tools like Perplexity put confidence and synthesis front and center — but without fully replacing the human judgment that distinguishes truth from plausible fabrications.

Here is where the argument becomes interesting: Perplexity’s growth was propelled by a narrative of automation, yet the product implicitly leans on human systems, whether training oversight, external evaluation research, content provider relationships, or community feedback loops.

Researchers have used human evaluation to benchmark Perplexity’s performance. Community reactions and complaints act like a distributed review network that surfaces errors, contradictions, or hallucinations. Users themselves become peer reviewers of outputs that the system claims should be reliable.

In the absence of public documentation of live human review, the gap itself is informative. If a system is presented as credible because of its citations yet still produces misinformation, then human systems, published research evaluations, and user community behavior become the invisible scaffolding that actually upholds trust.

Why This Matters

The implications here reach beyond one product. Perplexity is one of many emerging generative search engines. Its user base and visibility give it outsized influence in shaping how people interact with information online.

But when a system can cite a source and still misinterpret or invent facts, when legal fights erupt over how data is acquired, and when credibility comes from external human judgment rather than internal transparency, then we confront a deeper question about AI search:

Does automation truly eliminate the human in the loop, or does it render human judgment invisible?

The answer, based on this investigation, is the latter.

Human systems are still essential to establishing and maintaining credibility. They exist in training processes, legal pressures, community feedback, and post-hoc evaluation research. They are not always disclosed or celebrated. Instead, they are woven into product design decisions that give users the impression of verification without revealing the actual work of interpretation and correction that humans perform outside the interface.

Perplexity AI’s rapid growth and popularity reflect a real desire for tools that can make sense of the sprawling digital world quickly and with references. Its citation-first approach is elegant on the surface and often useful.

Yet credibility is not the same as apparent verification. Citations can be misinterpreted, summaries can be fabricated in subtle ways, and legal controversies over data scraping show that the foundations of the data ecosystem itself are contested.

I realized that Perplexity’s technology does not eliminate humans from the knowledge loop. It hides them behind links, design language, and research citations. Users, in forums and communities, have become reviewers by necessity, surfacing errors and limitations.

An automated answer engine may feel like a definitive source, but in practice, it is part of a larger human ecosystem of evaluation, interpretation, correction, and contestation. The line between automation and human judgment is not gone — it is just more complex and less visible than many users assume.

Sources

1. Courthouse News, 10 Sept. 2025, www.courthousenews.com/wp-content/uploads/2025/09/perplexity-ai-complaint.pdf. Accessed 6 Feb. 2026.

2. Girard, Kim. “Perplexity AI CEO Aravind Srinivas, PhD 21, on why he ditched pitch decks.” UC Berkeley Haas, 21 Oct. 2025, newsroom.haas.berkeley.edu/deans-speaker-series-perplexity-ai-ceo-aravind-srinivas-phd-21-on-why-he-ditched-pitch-decks/. Accessed 6 Feb. 2026.

3. “Evaluating Verifiability in Generative Search Engines.” ACL Anthology, aclanthology.org/2023.findings-emnlp.467/. Accessed 9 Feb. 2026.

4. Reddit, www.reddit.com/r/perplexity_ai/comments/1kpqy0c/perplexity_struggles_with_basic_url_parsingand/. Accessed 6 Feb. 2026.

5. “The Use of Generative Artificial Intelligence (AI) in Academic Research: A Review of the Consensus App.” PMC, pmc.ncbi.nlm.nih.gov/articles/PMC12318603/. Accessed 6 Feb. 2026.

6. Reuters, www.reuters.com/world/reddit-sues-perplexity-scraping-data-train-ai-system-2025-10-22/. Accessed 6 Feb. 2026.

7. Butts, Dylan. “Reddit accuses Perplexity of stealing user posts, expanding data rights battle with AI industry.” CNBC, 23 Oct. 2025, www.cnbc.com/2025/10/23/reddit-user-data-battle-ai-industry-sues-perplexity-scraping-posts-openai-chatgpt-google-gemini-lawsuit.html. Accessed 6 Feb. 2026.

8. Corral, Gabriel. “Perplexity is using stealth, undeclared crawlers to evade website no-crawl directives.” Cloudflare Blog, 4 Aug. 2025, blog.cloudflare.com/perplexity-is-using-stealth-undeclared-crawlers-to-evade-website-no-crawl-directives/. Accessed 6 Feb. 2026.

9. The New York Times, 5 Dec. 2025, www.nytimes.com/2025/12/05/technology/new-york-times-perplexity-ai-lawsuit.html. Accessed 6 Feb. 2026.

10. ResearchGate, www.researchgate.net/publication/370127041_Evaluating_Verifiability_in_Generative_Search_Engines. Accessed 6 Feb. 2026.