Google Search sits at the center of how billions of people access information every day. The company’s algorithms determine what appears at the top of results for news, health information, legal guidance, product advice, and nearly every other query imaginable.

What is less visible is the role of a group of human reviewers often called quality raters. These contractors evaluate search results against a detailed set of guidelines. Their judgments do not directly alter rankings, but their evaluations feed into internal quality assurance processes that influence how algorithms are tuned.

This article examines who these raters are, how their guidelines operate, and why their work effectively shapes the boundaries of what information is allowed to rank well. Through public documentation, academic research, and quotes from raters themselves, I explore the policy enforcement role built into the system and the implications for information diversity and freedom.

Who Are Google Search Quality Raters and What the Guidelines Require

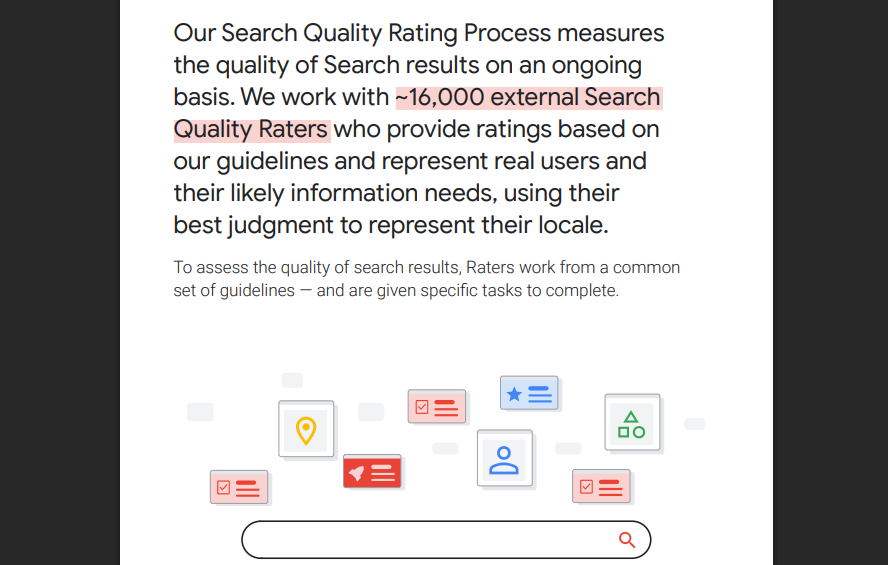

Google relies on a large global workforce of contractors known as Search Quality Raters. These workers are not Google employees. They are hired through third-party vendors and typically work remotely. Public reporting over the years has estimated that the number of raters reaches into the tens of thousands worldwide, spanning multiple languages and regions.

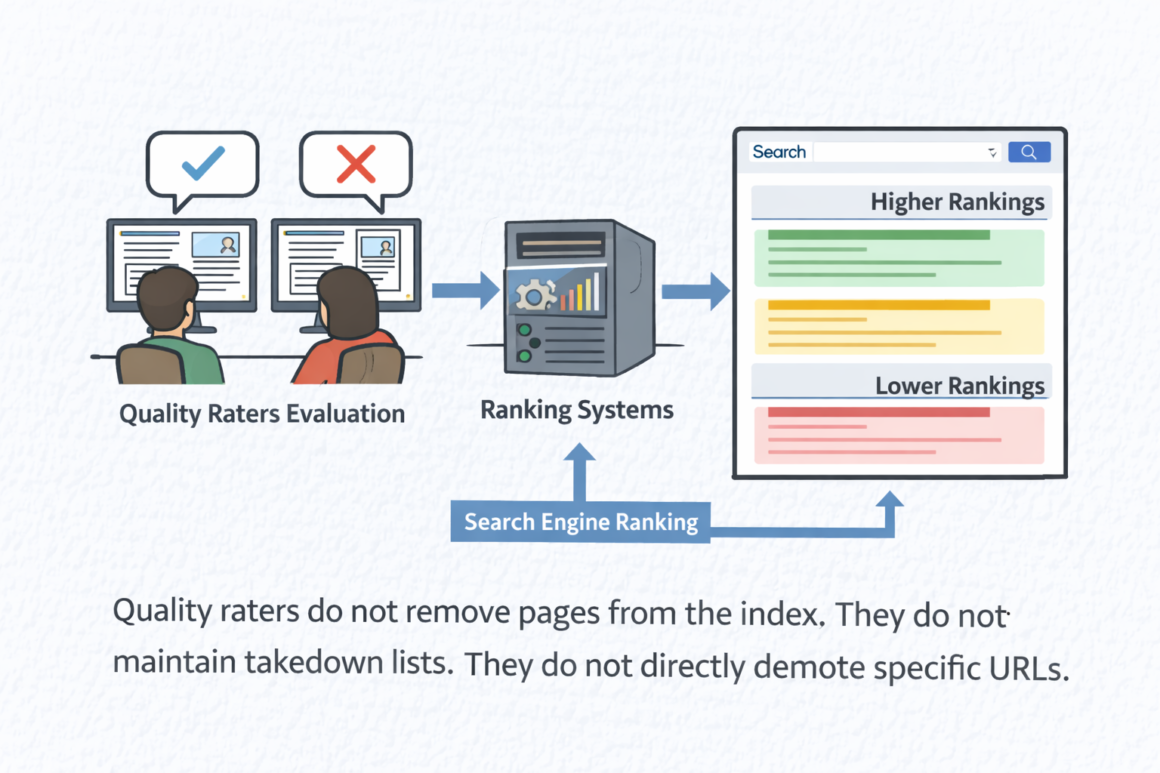

Their job is not to manually change rankings or remove pages from the index. Instead, they evaluate search results against Google’s published Quality Rater Guidelines, often referred to as the QRG. These guidelines provide detailed instructions on how to assess whether a result is useful, trustworthy, relevant, and aligned with user intent.

Google [1] states clearly that rater feedback does not directly alter individual rankings. In the publicly available guidelines, the company explains that rater data “does not directly affect individual search results, but is used to evaluate the quality of results overall.”

The distinction is important. Raters do not demote a specific page themselves. They score the sample results. Engineers then use those scores to evaluate whether algorithm changes improve or degrade overall search quality.

Yet the influence of the QRG extends far beyond individual rating sessions. The document codifies what Google defines as high-quality content and what it defines as low-quality content. When raters consistently flag certain patterns as poor quality, those signals can inform model training and ranking adjustments. Over time, this process shapes what types of content are more likely to rank prominently.

The Quality Rater Guidelines are extensive and highly structured. They include examples of real search queries and step-by-step instructions on how raters should evaluate the results shown.

Central to the framework are the concepts of expertise, authoritativeness, and trustworthiness, often abbreviated in industry discussions as E-A-T. Google has since extended the terminology to E-E-A-T by adding experience, but the core principle remains consistent. Content that demonstrates clear expertise and reliable sourcing should be rated higher than content that lacks credibility signals.

For instance, the guidelines instruct raters that for medical queries, the highest quality results typically come from recognized medical institutions, licensed professionals, or authoritative health organizations. A personal blog offering medical advice without credentials would generally receive a lower rating.

One section of the guidelines states:

“High quality pages provide a satisfying amount of high quality main content, and show that the creator of the MC (main content) is an expert or authoritative source for the topic.”

According to Taboola [2], Google also introduced a special category known as Your Money or Your Life, often abbreviated as YMYL. These are queries related to health, financial stability, safety, or civic participation. Because inaccurate information in these areas can cause real harm, raters are instructed to apply extremely high standards. Content that could mislead users about medical treatment, financial decisions, or voting processes should be rated lowest if it lacks credible sourcing.

Raters are also instructed to evaluate purpose and context. A page whose primary purpose is to misinform, manipulate, or deceive should be rated as low quality regardless of presentation. Even well-designed pages can receive poor ratings if their intent appears misleading.

On their face, these principles align with common sense. Promoting accurate health information and discouraging deceptive content seems uncontroversial. However, when these principles are operationalized at scale through human evaluations that inform algorithm training, they take on structural significance.

The guidelines do not merely describe quality. They define it in operational terms. Those definitions become embedded in how search systems are evaluated and refined. Over time, they shape the boundaries of what types of content are considered trustworthy enough to rank highly.

It is at this point that the conversation shifts from neutral quality control to questions about informational power. What counts as authority? Who decides what constitutes sufficient expertise? And how do these definitions influence what billions of users ultimately see at the top of search results?

Google says rater feedback does not change individual search results directly. However, the process of training and evaluating algorithms depends on rater judgments.

Search Engine Journal [3] reported that Google’s Danny Sullivan explained how search quality raters contribute to improving results and how their feedback is used when refining ranking systems.

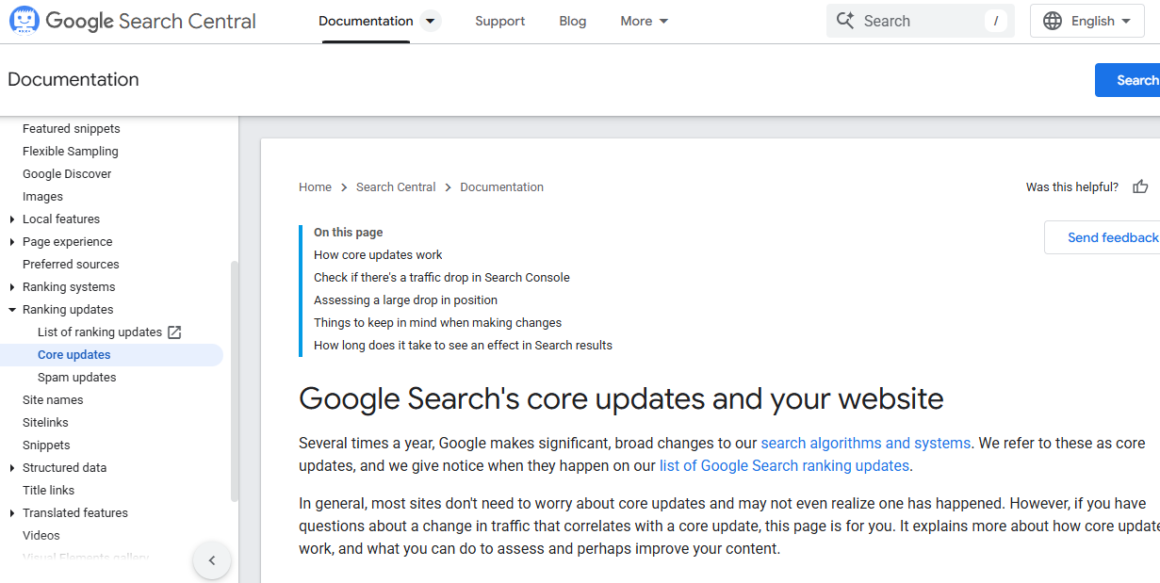

Each year, Google makes thousands of adjustments to its search algorithms. Before any change is rolled out broadly, it is carefully evaluated to determine whether it will genuinely improve the experience for users worldwide.

Many modern ranking systems rely on machine learning, which learns from examples. When raters label certain types of results as high quality, those labels serve as benchmarks. When raters consistently flag other types of results as low quality, engineers adjust the algorithms to avoid similar patterns.

The connection between human rating and automated ranking is technical, but it is real. A ScienceDirect [4] overview summarizes how researchers in information science have documented the ways user feedback mechanisms and human evaluation shape search quality systems over time.

A former search quality rater explained in an online forum:

“Raters are not deciding the rankings day to day. But our feedback trains the teams building the ranking models. Over time, what they hammer us on shapes what the algorithm optimizes for.”

In other words, the guidelines act as policy code. What is included in the QRG defines what good content looks like. What is excluded is implicitly defined as less valuable or harmful.

A Narrow Definition of Quality

The emphasis on credentials and institutional authority has clear benefits. In domains such as medicine, law, and finance, prioritizing recognized expertise can reduce the visibility of harmful misinformation. For high-risk queries, especially those categorized as Your Money or Your Life, elevating established institutions may protect users from demonstrably false claims.

But the same framework can also narrow the range of voices that are treated as credible.

Because the guidelines instruct raters to favor visible expertise, formal credentials, and strong reputational signals, established publishers and well-known institutions often align more easily with the model of “high quality.” Independent writers, smaller outlets, and unconventional experts may struggle to demonstrate authority in the prescribed ways, even when their reporting or analysis is rigorous.

One former contractor described the tension this way:

“Sites with visible credentials and big domain names always score better. Even if the independent article is more accurate, the guideline pushes us to prefer institutional sources.”

This does not necessarily reflect intentional bias. It reflects the operational definition of quality embedded in the document. When raters are trained to look for signals such as formal qualifications, recognized institutions, and external reputation, they are incentivized to reward those markers consistently.

Academic research suggests that this dynamic can have structural consequences. Scholars studying algorithmic systems have found that models trained on authority-based signals tend to reinforce incumbents and established actors. As one Sage Journals [5] paper on social media and society argues, algorithmic curation systems can reproduce and amplify existing power asymmetries, particularly when credibility is inferred from institutional visibility rather than solely from content accuracy.

Over time, this can reduce informational diversity. If high-visibility domains repeatedly receive strong quality ratings, algorithms tuned to mirror those evaluations may increasingly privilege the same set of sources. The result is not fabricated information, but a narrower distribution of recognized authority.

The core issue is that “quality” is not a purely technical variable. It is socially defined. When guidelines codify particular markers of authority, they shape whose knowledge is systematically elevated and whose is marginalized.

Quality raters do not remove pages from the index. They do not maintain takedown lists. They do not directly demote specific URLs.

However, their evaluations feed into the systems that determine ranking. In practice, this means they influence which types of content are more likely to appear prominently and which are more likely to appear further down the results page.

Consider a sensitive topic such as vaccines. The guidelines instruct raters to apply especially high standards to health-related queries. Official public health institutions, recognized medical organizations, and credentialed experts are expected to receive higher ratings than personal blogs or anecdotal accounts. When engineers test algorithm changes against rater feedback, models that surface institutional sources more reliably may be judged as “improving quality.”

Over time, that feedback loop shapes ranking behavior.

Some critics describe this as content moderation by proxy. The platform does not formally ban most alternative or controversial viewpoints. Instead, it uses structured human evaluations to train systems that systematically deprioritize content that fails to meet guideline standards.

As one digital rights advocate noted in public commentary:

“There is no formal censorship list. But what the guidelines elevate and what they downgrade functions as policy enforcement. It shapes what the public sees.”

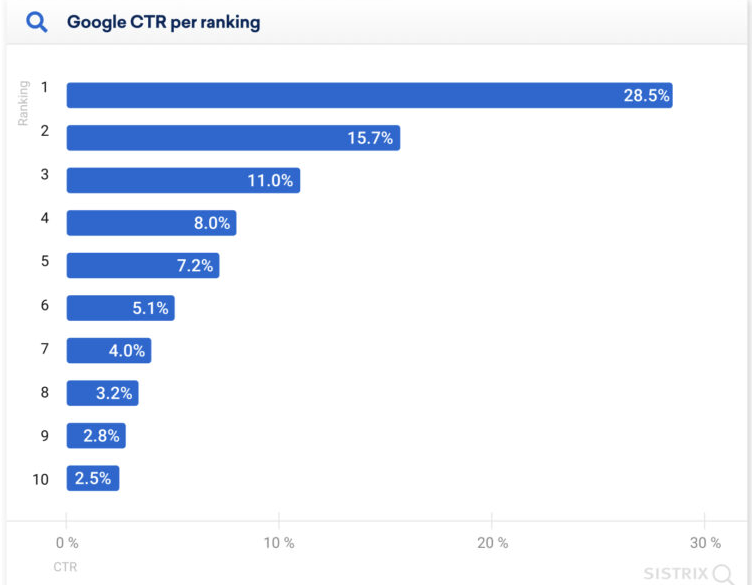

The distinction is significant. Removal is visible and contestable. Ranking changes are quieter. A page may remain indexed, but if it is pushed far enough down the results, its practical visibility declines sharply. Reporting from Search Engine Journal [6] indicates that more than 25% of users click the first result, with a steep drop-off beyond the top positions.
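To make the visibility point concrete, here is a minimal sketch of how expected clicks might fall as a page moves down the results. The only figure taken from the reporting above is the roughly 25% share for the first result. The power-law decay, its exponent, and the function names are assumptions chosen for illustration, not measured values or anything Google publishes.

```python
# Illustrative only: a hypothetical click-through curve.
FIRST_RESULT_CTR = 0.25  # approximate share for position 1, per the cited reporting
DECAY_EXPONENT = 1.0     # assumption: invented decay rate, not a measured value


def estimated_ctr(position: int) -> float:
    """Return the assumed click-through rate for a 1-based result position."""
    if position < 1:
        raise ValueError("position must be 1 or greater")
    return FIRST_RESULT_CTR / position ** DECAY_EXPONENT


def expected_clicks(searches: int, position: int) -> float:
    """Estimate clicks for a page shown at `position` across `searches` queries."""
    return searches * estimated_ctr(position)


if __name__ == "__main__":
    for position in (1, 3, 10, 25):
        clicks = expected_clicks(10_000, position)
        print(f"position {position:>2}: about {clicks:.0f} clicks per 10,000 searches")
```

Under these assumed numbers, a page that stays indexed but slides from the top position to the third page of results would lose the vast majority of its traffic without ever being removed.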

From Google’s perspective, this system is about quality assurance and user safety. From a governance perspective, it represents a powerful mechanism for shaping the informational environment without explicit content bans.

The result is a model where contractors, following detailed guidelines, participate in defining what counts as trustworthy knowledge at scale. They do not write policy in the traditional sense. But through their evaluations, they help operationalize it.

Data on Rater Influence

Precise quantitative data on how much quality rater feedback affects live ranking systems is not publicly available. Google does not release internal weighting metrics, model parameters, or experimental dashboards. The mechanics of how evaluation data flows into production systems remain proprietary.

However, Google [7] has openly acknowledged that human evaluation plays a central role in measuring and improving search quality. In research publications and technical documentation, the company describes the use of human raters to assess experimental ranking changes before they are launched widely.

In practice, this means engineers propose adjustments to ranking models. Those adjustments are then tested against sets of queries. Human raters evaluate the resulting outputs using the Quality Rater Guidelines. If the new system performs better according to rater judgments, it may be selected for deployment or further refinement.

This evaluation pipeline gives rater judgments a gatekeeping function. Even if raters do not directly alter rankings, their scores determine which models are considered improvements and which are rejected.
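The gatekeeping step can be sketched in a few lines. This is a simplified illustration, not Google's actual process: the 0 to 4 score scale, the sample queries, the scores, and the `min_gain` threshold are all hypothetical.

```python
from statistics import mean

# Hypothetical rater scores (0 = lowest, 4 = highest) for the same queries
# under the current ranking system and a proposed change. All values invented.
baseline_scores = {
    "flu symptoms": [3, 3, 2],
    "how to file taxes": [2, 3, 3],
    "best hiking boots": [3, 2, 2],
}
experiment_scores = {
    "flu symptoms": [4, 3, 3],
    "how to file taxes": [3, 3, 3],
    "best hiking boots": [2, 2, 2],
}


def per_query_means(scores):
    """Average the individual rater scores for each query."""
    return {query: mean(ratings) for query, ratings in scores.items()}


def compare_systems(baseline, experiment, min_gain=0.1):
    """Decide whether the experiment beats the baseline by rater judgment."""
    base = per_query_means(baseline)
    exp = per_query_means(experiment)
    deltas = {query: exp[query] - base[query] for query in base}
    avg_delta = mean(list(deltas.values()))
    # The experiment advances only if raters scored it higher on average.
    return {"deltas": deltas, "avg_delta": avg_delta, "advance": avg_delta >= min_gain}


result = compare_systems(baseline_scores, experiment_scores)
print(result["deltas"])
print("advance to next stage:", result["advance"])
```

In this toy example the proposed change scores worse on one query but better on average, so it advances. The raters never touched a ranking, yet their scores decided which system moved forward.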

Academic research on information retrieval systems helps clarify why this matters. Machine learning models depend on labeled data. If the labeling process reflects a specific definition of quality, then models trained or validated on that data will converge toward that definition.

Independent researchers have attempted to simulate aspects of this process. One study on arXiv [8] used evaluation criteria derived from guideline-style frameworks to construct test sets for ranking experiments. The findings showed that models optimized on such human-labeled sets tend to align closely with the evaluative standards embedded in those labels.

The implication is straightforward. When quality is operationalized through structured human judgment, and models are optimized to satisfy that judgment, the evaluative framework becomes embedded in system behavior.
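A toy model makes this convergence visible. The sketch below trains a tiny logistic regression on invented pages whose labels follow a hypothetical "credentials first" rule. The features, data, and hyperparameters are assumptions for illustration and do not represent any real ranking system.

```python
import math

# Hypothetical labeled pages: (has_credentials, accuracy, rater_label).
# All values are invented. Labels depend only on credentials, never on accuracy.
LABELED_PAGES = [
    (1, 0.9, 1), (1, 0.4, 1), (1, 0.6, 1), (1, 0.2, 1),
    (0, 0.9, 0), (0, 0.5, 0), (0, 0.3, 0), (0, 0.8, 0),
]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train(pages, steps=2000, learning_rate=0.5):
    """Fit a two-feature logistic regression with plain gradient descent."""
    w_cred, w_acc, bias = 0.0, 0.0, 0.0
    n = len(pages)
    for _ in range(steps):
        g_cred = g_acc = g_bias = 0.0
        for cred, acc, label in pages:
            error = sigmoid(w_cred * cred + w_acc * acc + bias) - label
            g_cred += error * cred
            g_acc += error * acc
            g_bias += error
        w_cred -= learning_rate * g_cred / n
        w_acc -= learning_rate * g_acc / n
        bias -= learning_rate * g_bias / n
    return {"credentials": w_cred, "accuracy": w_acc, "bias": bias}


def predict(model, cred, acc):
    """Return the model's estimated probability that a page is high quality."""
    z = model["credentials"] * cred + model["accuracy"] * acc + model["bias"]
    return sigmoid(z)


model = train(LABELED_PAGES)
uncredentialed_accurate = predict(model, cred=0, acc=0.9)
credentialed_inaccurate = predict(model, cred=1, acc=0.2)
print(model)
print(uncredentialed_accurate, credentialed_inaccurate)
```

Because the labels reward credentials alone, the trained model scores a credentialed but inaccurate page above an accurate page without credentials. The model has no view of its own. It reproduces the definition of quality built into its labels.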

There is also indirect data from Google’s [9] public statements about core updates. When major algorithm changes are rolled out, the company often frames them as improvements in surfacing high-quality or authoritative content. While specific metrics are not disclosed, these updates are described as reflecting ongoing refinements informed by evaluation processes.

The absence of transparent numerical data does not mean influence is negligible. On the contrary, in machine learning systems, evaluation data is central to iteration. A ranking system that consistently fails human quality tests is unlikely to be deployed at scale.

In this sense, rater influence operates at the model selection and optimization layer rather than at the individual query level. It shapes the criteria against which systems are judged successful.

These research practices and public disclosures provide indirect but meaningful evidence. They support the conclusion that the Quality Rater Guidelines are not merely advisory documents. They function as evaluative benchmarks that shape how ranking systems are trained, tuned, and validated.

While the precise magnitude of their influence remains opaque, their structural role in the development lifecycle suggests that the guidelines help define what the system is trained to prefer, avoid, and amplify over time.

The Question of Bias

Any evaluation framework reflects underlying values. When those values prioritize institutional authority, formal credentials, and reputational signals, a particular vision of credibility becomes embedded in the system.

Bias does not have to be intentional to be consequential. It can arise from well-meaning efforts to reduce misinformation. If a guideline instructs raters to reward recognized expertise and established domains, the cumulative effect may systematically advantage major news organizations, government institutions, and large publishers over niche outlets, independent researchers, or minority perspectives.

One academic study published on ScienceDirect [10] warned that authority-based ranking systems can bias visibility against diverse or emerging viewpoints, particularly when credibility signals are tied to institutional status rather than argument quality or evidentiary strength.

The concern is structural. When raters consistently score institutional sources more highly, and algorithms are optimized to align with those scores, dominant institutions may become further entrenched in top positions. Alternative perspectives may remain accessible, but less visible.

Critics argue that by embedding these evaluative standards into training data, the system effectively enforces a policy of informational alignment with dominant cultural and professional institutions. This does not require explicit censorship. It operates through reinforcement.

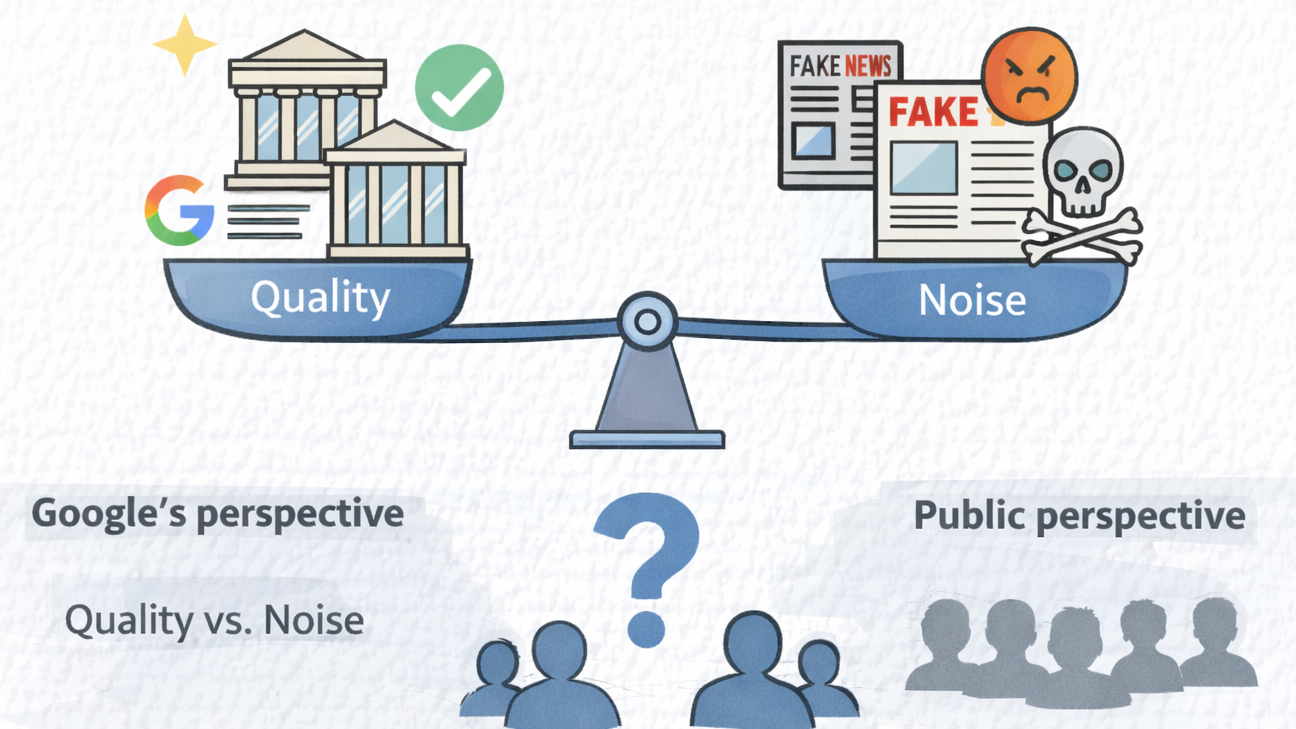

From Google’s perspective, the tradeoff is framed as quality versus noise. In a search environment flooded with low-quality, deceptive, or malicious content, privileging recognized authority can be seen as a pragmatic safeguard.

From a public perspective, however, the question becomes more fundamental: who defines quality, and according to which values? When one private framework shapes the informational architecture of billions of queries, the normative assumptions behind that framework matter.

Public discussion among contractors reveals that raters themselves do not speak with one voice.

Some express confidence in the clarity and purpose of the guidelines. They view their role as contributing to a safer information ecosystem by rewarding accuracy and demoting misleading content.

One commenter in a publicly visible contractor forum wrote:

“The guidelines give us clear rules. If everyone follows them, low-quality and harmful content gets pushed down.”

For these raters, the framework offers consistency. It reduces ambiguity and provides a structured way to evaluate complex material at scale.

Others are more ambivalent. They question how rigid criteria apply to nuanced topics, especially where local context, lived experience, or minority viewpoints are central.

Another contractor wrote:

“Sometimes the highest authority is not the most relevant. The guidelines do not always appreciate context.”

This tension highlights a deeper issue. Authority and relevance are not always aligned. A national institution may carry stronger reputational signals, yet a local expert or community source may provide more precise or culturally grounded information for a specific query.

The divergence in rater perspectives underscores that the guidelines are interpreted by humans, each bringing their own judgment and experience to the task. Even within a structured system, gray areas persist.

Taken together, these debates illustrate the central challenge. The quality rater model seeks to standardize evaluation in order to train algorithms effectively. But quality itself remains contested, contextual, and socially constructed. When those contestations are filtered through a global ranking system, their consequences extend far beyond individual contractor decisions.

Broader Impacts on Information Access

The influence of quality raters extends well beyond internal evaluation workflows. Because Google Search remains the dominant gateway to online information in much of the world, the standards embedded in the Quality Rater Guidelines ripple outward into the broader information economy.

Publishers adapt. Newsrooms, independent writers, affiliate sites, nonprofits, and academic institutions increasingly optimize content to align with the signals emphasized in the guidelines. These include clear authorship, visible credentials, institutional affiliation, outbound citations, and a demonstrable track record of trustworthiness. Industry discussions often reference Experience, Expertise, Authoritativeness, and Trustworthiness as practical proxies for what tends to perform well.

Search Engine Optimization practices reinforce these incentives. SEO agencies routinely advise clients to add detailed author bios, link to authoritative sources, secure mentions from recognized publications, and build domain-level authority over time. In effect, the expectations that shape rater evaluations also shape content production.

This creates a feedback loop. Raters are trained to value certain signals. Algorithms are tuned to align with rater judgments. Publishers then design content to surface those same signals. Over time, informational norms converge around what the guidelines reward.

The result is not necessarily uniformity of opinion, but it can mean uniformity of format and voice. Smaller publishers without institutional markers may struggle to compete, even when their reporting is rigorous. Community-specific perspectives may be technically accessible but less discoverable.

An executive at a mid-sized digital publisher described the dynamic this way:

“We are not just writing for readers anymore. We are writing for systems that are trained on guidelines. If we do not look authoritative in the right way, we disappear.”

The structural effect is subtle but powerful. Quality expectations become infrastructure. And infrastructure shapes outcomes.

Google Search quality raters do not enforce policy in the traditional regulatory sense. They do not delete webpages. They do not adjudicate legal disputes. They do not maintain a formal blacklist.

Yet the structure of their work means they function as indirect policy enforcers.

Through comprehensive guidelines that define what high-quality content looks like, and through feedback that is used to evaluate and refine ranking systems, human raters help calibrate the standards by which information is surfaced. When raters consistently reward certain institutional signals and downgrade others, algorithms learn from those patterns.

The criteria embedded in the guidelines influence which content is elevated and which becomes harder to find. Given the central role of Google Search in everyday information access, this influence is amplified at scale.

The debate, then, is not simply about misinformation. It is about governance. When a private framework defines expertise, authority, and trustworthiness for billions of queries, those definitions shape public discourse.

Supporters argue that without strong quality signals, search results would be overwhelmed by spam, scams, and harmful falsehoods. Critics counter that embedding institutional authority as a primary filter risks narrowing epistemic diversity and reinforcing existing power structures.

Both positions acknowledge the same reality: human judgment and algorithmic systems are intertwined. Quality raters sit at that intersection. Their evaluations reflect values codified in guidelines. Those values are translated into ranking adjustments. Those rankings shape what people read, learn, and believe.

Understanding this layered process is essential for anyone concerned with information access, diversity of knowledge, and the growing role of platforms in structuring public life.

Sources

1. “Search Quality Evaluator Guidelines” 10 Sept. 2025, static.googleusercontent.com/media/guidelines.raterhub.com/en/searchqualityevaluatorguidelines.pdf. Accessed 18 Feb. 2026.

2. Faris, Stephanie. “YMYL (Your Money Your Life): What It Is, Why It Matters” www.taboola.com/marketing-hub/ymyl/. Accessed 19 Feb. 2026.

3. Southern, Matt G. “Google’s Search Quality Raters Do Not Directly Influence Rankings” 4 Aug. 2020, www.searchenginejournal.com/googles-search-quality-raters-do-not-directly-influence-rankings/376614/. Accessed 19 Feb. 2026.

4. ScienceDirect, www.sciencedirect.com/topics/mathematics/user-feedback. Accessed 18 Feb. 2026.

5. Sage Journals, journals.sagepub.com/doi/10.1177/17456916231185057. Accessed 19 Feb. 2026.

6. Southern, Matt G. “Over 25% of People Click the First Google Search Result” 15 July 2020, www.searchenginejournal.com/google-first-page-clicks/374516/. Accessed 20 Feb. 2026.

7. “Search Engine Testing & Evaluation” How Search Works, Google Search, www.google.com/intl/en_us/search/howsearchworks/how-search-works/rigorous-testing/. Accessed 19 Feb. 2026.

8. “Leveraging Passage Retrieval with Generative Models for Open Domain Question Answering” 3 Feb. 2021, arxiv.org/abs/2007.01282. Accessed 19 Feb. 2026.

9. “Google Search’s Core Updates” Google Search Central, developers.google.com/search/docs/appearance/core-updates. Accessed 20 Feb. 2026.

10. ScienceDirect, www.sciencedirect.com/science/article/pii/S0957417423021437. Accessed 19 Feb. 2026.