
When Microsoft[1] reports that Microsoft Teams has more than 320 million monthly active users, the number carries weight. It appears in earnings calls, financial coverage, and market analysis. It suggests not only scale but deep integration into how organizations function.

- The Growth Story in Microsoft’s Own Words

- What Microsoft’s Documentation Actually Says

- The Acceleration of Adoption and Insights From IT Communities

- Industry Norms and Comparable Metrics

- The Influence of Incentives

- When High Activity Signals Overload

- Interpreting the Word “Inflation”

- Toward More Nuanced Measurement

As I began researching how Microsoft defines that number, I was less interested in whether it was accurate and more interested in what it actually measures. In enterprise software, words like active and engaged often blur together in public conversation. They are not the same.

The distinction matters because leadership teams, investors, and even employees frequently treat active user metrics as shorthand for meaningful adoption. The deeper I looked into Microsoft’s own documentation, investor transcripts, and industry reporting norms, the more I saw how that shorthand can shape perception in subtle but important ways.

The Growth Story in Microsoft’s Own Words

Microsoft’s Investor Relations archive provides a clear chronological record of how the company has framed the rise of Microsoft Teams. In April 2021, during its quarterly earnings communications, Microsoft[2] announced that Teams had reached 145 million daily active users. That milestone was presented not as an isolated achievement but as part of a broader transformation narrative centered on hybrid work, cloud acceleration, and enterprise digital infrastructure.

In subsequent earnings updates, Microsoft reported that Teams had surpassed 320 million monthly active users. The scale of that number places Teams among the most widely deployed enterprise collaboration platforms in the world. The company’s earnings transcripts, which are publicly available, repeatedly connect this growth to Microsoft 365 expansion[3], Azure cloud integration, and cross-product bundling strategies.

As I read through multiple transcripts across several fiscal quarters, I paid attention not only to the figures themselves but also to the framing. Teams’ growth was frequently described alongside phrases such as continued customer momentum, durable demand, and broad-based adoption. Executives highlighted large enterprise wins, government contracts, and education sector deployments. Growth in active users was consistently positioned as validation of Microsoft’s strategy to embed collaboration deeply into its productivity ecosystem.

In several calls, leadership referenced hybrid work trends as a structural shift rather than a temporary pandemic response. Teams was portrayed as foundational infrastructure for the future of work. The repetition of usage milestones served to reinforce that narrative.

From an investor’s perspective, these numbers carry important signals. Active user growth implies more than mere signups. It suggests recurring interaction, ecosystem stickiness, and integration with daily workflows. For publicly traded technology companies, such metrics are closely tied to long-term revenue expectations and market confidence.

The numbers are also credible in scale. Teams is bundled with many Microsoft 365 enterprise plans, and Microsoft maintains a global footprint across industries and regions. Government agencies, multinational corporations, universities, and small businesses all operate within the Microsoft ecosystem. It is reasonable that a collaboration platform integrated into that ecosystem would achieve broad deployment.

However, earnings calls are designed to communicate momentum and financial performance. They are not designed to dissect methodological nuance. The transcripts do not typically explain how daily or monthly active users are calculated at a granular level. They do not differentiate between light usage and heavy workflow integration. They report the aggregate result.

As I examined the earnings materials, I found no evidence of hidden definitions or misleading language. The company consistently used the terms “daily active users” and “monthly active users” without expanding on how those categories distinguish between different patterns of engagement.

That omission is understandable within the context of financial reporting. Yet it raises an analytical question. When hundreds of millions of active users are cited as evidence of adoption, what behaviors are actually being measured? To answer that question, I turned from investor communications to Microsoft’s technical and administrative documentation, where definitions are spelled out with greater specificity.

What Microsoft’s Documentation Actually Says

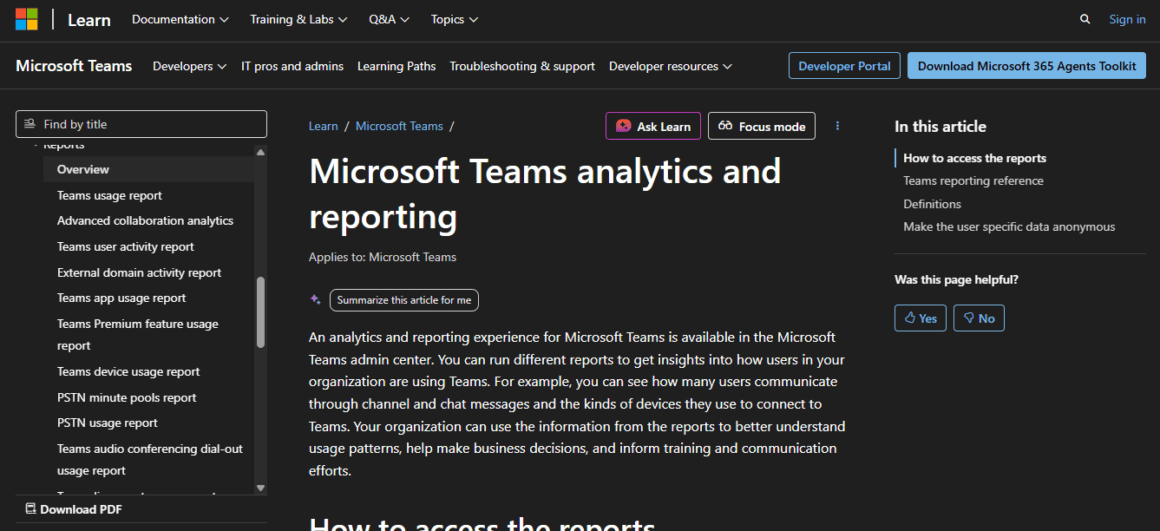

After reviewing earnings transcripts, I turned to Microsoft’s administrative documentation to understand how usage metrics are constructed at the operational level. On Microsoft Learn[4], the company provides detailed guidance for Teams analytics and reporting. These materials are written for administrators who manage Microsoft 365 environments and need to interpret dashboards, usage summaries, and adoption data.

Within that documentation, Microsoft explains that usage reports are generated from service-level telemetry and aggregated into predefined reporting windows. Administrators can review data over different time frames, typically 7 days, 28 days, 90 days, or 180 days, depending on the report.

More specifically, the Microsoft 365 admin center documentation clarifies how Teams activity reports define active users.

In the Teams user activity report description, Microsoft states that the report provides insights into “the number of daily active users and activity counts” and that a user is considered active if they performed at least one activity during the selected reporting period. Activities include actions such as sending a chat message, replying in a channel conversation, participating in a meeting, or making a call.

As I read through the definitions and report descriptions, what became clear is that the methodology is consistent and technically precise. Microsoft does not obscure what counts as activity. The documentation specifies which interactions qualify and how reporting windows are applied. It also distinguishes between user-level activity reports and device usage reports, offering administrators multiple ways to view aggregated data.

However, the operational clarity of the definition contrasts with its behavioral breadth. A user who sends a single chat message in a 28-day reporting window qualifies as active. A user who joins one meeting in that period also qualifies. At the same time, a project manager who conducts daily meetings, coordinates multiple teams, and initiates dozens of conversations is recorded in the same active category.

The metric does not rank or weight these behaviors. It does not assign higher significance to recurring or intensive use. It answers a binary question. Did the user perform at least one qualifying action within the reporting window?
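To make that binary question concrete, here is a minimal sketch in Python. The event log, the user names, and the function names are hypothetical illustrations, not Microsoft telemetry or any Microsoft API. The sketch assumes only the rule described above: one qualifying action inside the reporting window is enough to count as active.

```python
from datetime import date, timedelta

# Hypothetical activity log: (user, day, activity type). Not real telemetry.
EVENTS = [
    ("occasional_user", date(2024, 3, 4), "chat_message"),
] + [
    ("project_manager", date(2024, 3, 1) + timedelta(days=d), kind)
    for d in range(28)
    for kind in ("meeting", "chat_message", "call")
]


def in_window(day, window_start, window_days):
    """True if the day falls inside the reporting window."""
    return window_start <= day < window_start + timedelta(days=window_days)


def active_users(events, window_start, window_days):
    """Binary rule: a user is active with at least one event in the window."""
    return {u for u, day, _ in events if in_window(day, window_start, window_days)}


def event_counts(events, window_start, window_days):
    """Events per user in the same window, shown only for comparison."""
    counts = {}
    for user, day, _ in events:
        if in_window(day, window_start, window_days):
            counts[user] = counts.get(user, 0) + 1
    return counts


window_start = date(2024, 3, 1)
active = active_users(EVENTS, window_start, 28)
counts = event_counts(EVENTS, window_start, 28)

print(sorted(active))  # both users land in the same "active" set
print(counts)          # the volume behind each user differs widely
```

In this toy data, a user with one chat message and a user with 84 recorded actions both count once toward the active total.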

That binary structure makes the metric highly scalable. It allows Microsoft to aggregate usage across millions of organizations in a standardized way. It also simplifies internal reporting for enterprise administrators who need quick adoption snapshots.

Yet the simplicity carries limitations. Because the metric does not differentiate between minimal and sustained interaction, it cannot, on its own, describe engagement depth, workflow integration, or collaboration intensity. It confirms participation at a threshold level, but it does not measure how central Teams is to an individual’s daily work.

This distinction becomes important when active user percentages are presented as indicators of transformation success. The documentation makes the definition clear. The interpretation, however, depends on how organizations contextualize what “active” truly represents.

The Acceleration of Adoption and Insights From IT Communities

According to Business Insider[5], at the height of the initial global shift to remote work, Microsoft announced that Teams had reached 75 million daily active users. I reviewed the original company blog post from that period, which emphasized how rapidly organizations were transitioning to digital collaboration.

The growth was dramatic and understandable. Enterprises needed continuity. Meetings, file sharing, and chat all moved online. Teams became critical infrastructure almost overnight.

What interested me as I revisited these announcements was how the crisis context shaped measurement culture. When deployment speed is the priority, activity becomes the most immediate proof of success. If employees can log in and attend meetings, adoption appears validated.

Rapid deployment does not necessarily equal deep workflow integration. Many organizations rolled out Microsoft Teams at speed, especially during the early phases of remote and hybrid work. Governance models, structured training, and meaningful measurement frameworks often followed later.

In practice, active user metrics remained the most visible signal. They were accessible in dashboards, simple to chart in executive slides, and easy to communicate upward. By contrast, deeper engagement analysis required more context. It demanded segmentation by department, examination of feature usage patterns, and conversations about how work was actually being done.
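A rough sketch of what segmentation by department can reveal, written in Python. The roster, the action counts, and the helper names are invented for illustration and assume an organization can join per-user activity counts to a department list.

```python
from collections import defaultdict

# Hypothetical roster and per-user action counts for one reporting window.
DEPARTMENT = {
    "ana": "engineering", "ben": "engineering",
    "cho": "sales", "dev": "sales",
    "eli": "finance", "fay": "finance",
}
ACTIONS = {"ana": 120, "ben": 95, "cho": 1, "dev": 2, "eli": 1, "fay": 0}


def active_rate(users, actions):
    """Share of users with at least one action in the window."""
    users = list(users)
    return sum(1 for u in users if actions.get(u, 0) >= 1) / len(users)


def actions_by_department(department, actions):
    """Total actions per department."""
    totals = defaultdict(int)
    for user, dept in department.items():
        totals[dept] += actions.get(user, 0)
    return dict(totals)


overall = active_rate(DEPARTMENT, ACTIONS)
totals = actions_by_department(DEPARTMENT, ACTIONS)
share = {dept: total / sum(totals.values()) for dept, total in totals.items()}

print(f"overall active rate: {overall:.0%}")
for dept in sorted(share):
    print(f"{dept}: {share[dept]:.0%} of all actions")
```

With these made-up figures, five of six users are active, yet nearly all recorded actions come from a single department. The headline rate and the distribution behind it tell different stories.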

To understand how these metrics are interpreted on the ground, I reviewed discussions across IT professional forums, including Reddit’s r/sysadmin community and Microsoft 365 administrative threads. These are not formal research environments. They are operational spaces where administrators compare notes, troubleshoot reporting inconsistencies, and share candid impressions.

In one discussion about Microsoft 365 usage dashboards, an administrator wrote, “Leadership saw 85 percent active and assumed we were fully adopted. In reality, most people were just joining mandatory meetings.” Another contributor observed:

“Active user does not mean active contributor. It just means they touched it.”

A third participant described the reporting tension this way:

“The numbers look great on paper, but when you break it down by team, usage is concentrated in a few groups.”

The tone across these discussions was not accusatory. It was pragmatic. Administrators acknowledged that the metrics are defined clearly and calculated consistently. Several posters noted that the dashboards are useful for trend tracking. At the same time, many emphasized the difficulty of translating raw activity counts into meaningful adoption narratives.

One IT manager summarized the challenge succinctly:

“Executives want one number. Collaboration is not one number.”

What stood out to me was the recurring gap between executive interpretation and operational nuance. Forum contributors did not claim the numbers were false. Instead, they described how easily a high active percentage could be equated with cultural integration or collaboration maturity.

These conversations reinforced my broader conclusion. The issue lies less in hidden methodology and more in interpretation gaps. The dashboards report activity accurately within their definitions. The assumptions layered on top of those numbers are where inflation, in the perceptual sense, can occur.

Industry Norms and Comparable Metrics

To determine whether Microsoft’s reporting approach stands out, I examined how other major SaaS providers define and communicate user activity. The pattern is consistent across the collaboration sector.

Slack[6], for example, publishes product updates and milestone announcements in its newsroom.

In its public communications over the years, Slack has highlighted daily active users and paid customer growth as indicators of platform momentum. Like Microsoft Teams, Slack’s active user reporting relies on interaction within a defined time frame. While Slack does not disclose every methodological nuance in press releases, its growth announcements follow the same broad industry logic. Activity within a reporting window signals engagement. That engagement is aggregated and reported at scale.

Zoom[7] follows a comparable model, frequently emphasizing meeting participants, paid accounts, and usage growth. During the pandemic, Zoom regularly reported the number of daily meeting participants as a measure of adoption. That metric captured how many individuals joined meetings within a defined period. It did not measure how deeply each participant relied on Zoom beyond those interactions.

Across platforms, the pattern holds. Active user definitions are broad by design. They must be. Companies operating at a global scale need metrics that are consistent, auditable, and comparable over time. Binary activity thresholds provide that consistency.

From a financial reporting standpoint, this approach is logical. Investors need trend lines. They need quarter-over-quarter comparisons. A clear count of monthly or daily active users provides a simple growth narrative that can be evaluated against prior performance.

From an engagement standpoint, however, the metric offers only partial insight. It confirms that users touched the platform. It does not reveal how central the platform is to daily workflow. Nor does it distinguish between habitual power users and occasional participants.

When I compared Teams, Slack, and Zoom side by side, I did not find evidence that Microsoft is uniquely aggressive in how it defines activity. Instead, I found an industry convention. The broader question is not whether Microsoft inflates numbers outside the norm, but whether the norm itself adequately captures real engagement inside enterprises.

The Influence of Incentives

To understand how analysts evaluate these metrics, I reviewed research commentary from firms such as Gartner. Gartner’s research portal[8] provides extensive coverage of digital workplace technologies and collaboration platforms. While much of the detailed research is gated behind subscriptions, Gartner’s public materials consistently emphasize the importance of multidimensional measurement when evaluating digital workplace tools.

Analysts frequently recommend tracking a combination of indicators. These include feature diversity, collaboration patterns, integration depth with business processes, user satisfaction, and employee sentiment. Adoption, in this framework, is not a single metric. It is a composite of behaviors.

This broader analytical perspective contrasts with the streamlined metrics that dominate earnings calls. Public companies operate under structural incentives to communicate growth clearly and efficiently. Monthly active users are intuitive. They translate cleanly into charts. They support headline comparisons between competitors. They fit neatly into quarterly narratives about momentum.

The incentive structure does not necessarily encourage deception. In my review of Microsoft’s documentation and investor materials, I did not find contradictions between definitions and reported figures. The methodology is disclosed at the administrative level. The reporting aligns with industry standards.

What emerges instead is simplification driven by communication needs. Complex engagement patterns are distilled into a single headline number. That number becomes shorthand for success.

When simplification becomes the dominant narrative, nuance tends to recede. A rising active user count may reflect deeper integration into workflows. It may also reflect occasional or compliance-driven usage, particularly in environments where Teams is bundled with Microsoft 365 licenses.

Without supplementary metrics, it is difficult to determine which interpretation is more accurate. The incentives of public reporting favor clarity and comparability. The reality of enterprise engagement is more layered.

That tension sits at the center of the broader debate about Microsoft Teams usage metrics.

When High Activity Signals Overload

Another layer complicates the interpretation of active user metrics. High activity can signal meaningful engagement. It can also signal strain.

Microsoft’s Work Trend Index[9], published through its WorkLab research platform, documents the evolution of hybrid work patterns across industries. After reviewing multiple editions of the report, I noticed a recurring theme. Microsoft consistently acknowledges rising meeting volumes, increasing chat traffic, and expanding digital coordination demands.

Several reports describe what Microsoft calls meeting fatigue and the intensity of always-on communication. The data highlights growth in weekly meeting time, after-hours messaging, and cross-time zone collaboration. These patterns reflect real adoption of digital collaboration tools. At the same time, they point to saturation.

In that context, a high percentage of active users does not automatically equate to healthy collaboration. It may indicate that employees are deeply embedded in digital workflows. It may also indicate that employees are navigating dense communication ecosystems that require constant responsiveness.

The active user metric itself cannot distinguish between productive interaction and excessive coordination. It records that the activity occurred. It does not assess quality, necessity, or cognitive load.

As I compared Work Trend Index findings with Teams usage definitions, I found an important analytical tension. Rising activity can signal digital transformation success. It can also reveal systemic overload inside hybrid workplaces.

Without complementary qualitative metrics, the interpretation remains incomplete.

Interpreting the Word “Inflation”

The phrase “usage metrics inflation” carries a strong implication. It suggests intentional exaggeration or manipulation. Based on Microsoft’s publicly available documentation on Microsoft Learn and its investor disclosures, the definitions behind Teams activity reporting are transparent. The methodology is accessible. Administrative guides clearly outline what qualifies as activity and how reporting windows function.

In my review, I did not find evidence that Microsoft conceals its definitions. The technical explanation of active users is available to customers who manage Microsoft 365 environments. The calculation logic is straightforward. A user performs at least one qualifying action within the reporting period. That user is counted as active.

What often appears inflated is not the arithmetic. It is the expectation.

When a dashboard reports that 90 percent of licensed users are active, it is natural for executives, managers, or non-technical stakeholders to interpret that figure as evidence of strong engagement. The mental shortcut is understandable. High activity percentage equals deep adoption.

In reality, the metric indicates that 90 percent performed at least one qualifying action within the selected window. That action could represent routine workflow integration. It could also represent minimal or compliance-driven interaction.

As I traced this distinction across documentation, analyst commentary, community discussions, and industry norms, I reached a different conclusion than the one implied by the word inflation. The issue is less about numerical distortion and more about interpretive drift.

A technically accurate metric can still generate inflated assumptions. When a simplified measure becomes a proxy for complex behavior, nuance compresses. The number remains correct within its definition. The story attached to it can expand beyond what the metric alone supports.

Understanding that gap is essential for evaluating collaboration platform adoption responsibly.

Toward More Nuanced Measurement

Organizations seeking clearer insight into collaboration effectiveness can move beyond binary activity counts. Layered measurement approaches include examining frequency of use per user, diversity of features adopted, cross-departmental communication patterns, and recurring project activity.
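As a minimal sketch of two of those layers, the Python below computes active days per user and the number of distinct features each user touched. The event records, feature labels, and function name are hypothetical. In practice the inputs would come from whatever usage export an organization has available.

```python
from collections import defaultdict
from datetime import date

# Hypothetical events: (user, day, feature). Labels are illustrative only.
EVENTS = [
    ("ana", date(2024, 3, 1), "chat"),
    ("ana", date(2024, 3, 1), "meeting"),
    ("ana", date(2024, 3, 2), "call"),
    ("ana", date(2024, 3, 5), "chat"),
    ("ben", date(2024, 3, 4), "meeting"),
]


def layered_summary(events):
    """Per user: how many distinct days and distinct features appear."""
    days = defaultdict(set)
    features = defaultdict(set)
    for user, day, feature in events:
        days[user].add(day)
        features[user].add(feature)
    return {
        user: {
            "active_days": len(days[user]),
            "distinct_features": len(features[user]),
        }
        for user in days
    }


summary = layered_summary(EVENTS)
for user in sorted(summary):
    print(user, summary[user])
```

Both users in this example would pass the binary test. The summary separates the one who used three features across three days from the one who joined a single meeting.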

Microsoft[10] provides advanced analytics integration through Power BI for Microsoft 365, enabling deeper exploration of usage data.

The tools are capable. The question is whether organizations invest in interpreting them thoroughly.

Active user metrics answer the question of whether interaction occurred. They do not answer how meaningful, sustained, or balanced that interaction was.

Microsoft Teams has achieved substantial global adoption and plays a central role in digital collaboration. The scale of its reported user base reflects real deployment across industries and geographies.

At the same time, the definition of “active user” is broad by design. It captures minimum qualifying activity rather than engagement depth. This approach aligns with industry norms across SaaS providers and reflects practical reporting incentives.

The critical distinction lies in interpretation. When activity counts are treated as proxies for collaboration quality, strategic decisions may rest on an incomplete understanding.

My research across investor transcripts, administrative documentation, analyst commentary, and IT community discussions suggests that the metric itself is not inherently misleading. The risk emerges when its scope is not fully appreciated.

For enterprises and observers alike, the solution is not skepticism but literacy. Understanding how metrics are defined allows decision makers to interpret them responsibly.

Presence in a system is measurable. Participation is more complex. Recognizing that difference is essential in evaluating the true state of digital work.

Sources

1. “Teams Grows to 320 Million Monthly Active Users.” Microsoft Community Hub, 26 Oct. 2023, techcommunity.microsoft.com/discussions/microsoftteams/teams-grows-to-320-million-monthly-active-users/3964746. Accessed 24 Feb. 2026.

2. “Teams Daily Active User Number Hits 145 Million.” Microsoft Community Hub, 28 Apr. 2021, techcommunity.microsoft.com/t5/office-365/teams-daily-active-user-number-hits-145-million/m-p/2301367. Accessed 24 Feb. 2026.

3. Hirning, David. “Modernizing IT infrastructure at Microsoft: A cloud-native journey with Azure.” Microsoft Research, 4 Sept. 2025, www.microsoft.com/insidetrack/blog/modernizing-it-infrastructure-at-microsoft-a-cloud-native-journey-with-azure/. Accessed 24 Feb. 2026.

4. “Microsoft Teams analytics and reporting.” Microsoft Learn, learn.microsoft.com/en-us/microsoftteams/teams-analytics-and-reports/teams-reporting-reference. Accessed 24 Feb. 2026.

5. Zaveri, Paayal. “Microsoft Teams now has 75 million daily active users, adding 31 million in just over a month.” Business Insider, 29 Apr. 2020, www.businessinsider.com/microsoft-teams-hits-75-million-daily-active-users-2020-4. Accessed 24 Feb. 2026.

6. Slack. “News.” Slack, slack.com/intl/en-in/blog/news. Accessed 24 Feb. 2026.

7. “Investor Relations.” Zoom Communications, Inc., investors.zoom.us/. Accessed 24 Feb. 2026.

8. Gartner, www.gartner.com/en/information-technology. Accessed 24 Feb. 2026.

9. Microsoft Research, www.microsoft.com/en-us/worklab/work-trend-index. Accessed 24 Feb. 2026.

10. “About Microsoft 365 usage analytics – Microsoft 365 admin.” Microsoft Learn, learn.microsoft.com/en-us/microsoft-365/admin/usage-analytics/usage-analytics?view=o365-worldwide. Accessed 24 Feb. 2026.