A central concern in discussions about take-home assignments is whether they generate value for companies beyond candidate evaluation. In principle, these exercises are designed strictly as assessment tools. Companies, including Netflix, consistently frame them as simulations rather than contributions. Candidates are told their work will not be used in production systems and exists only to evaluate skills and decision-making.

There is no credible public evidence that major technology companies systematically reuse candidate submissions in live products. This distinction is important. Most concerns raised by candidates are not about direct appropriation of code, but about something more indirect and harder to measure.

As I looked into this more closely, the issue appeared less about misuse and more about exposure. When assignments closely resemble real business problems, they can produce ideas, approaches, and tradeoffs that are relevant beyond the hiring decision itself.

Realism in Assignments and Its Implications

Modern hiring practices increasingly favor realism, but few companies have pushed this shift as visibly as Netflix. Instead of relying on abstract algorithmic puzzles, Netflix and companies influenced by its approach often ask candidates to engage with problems that resemble real production work. This can include designing distributed systems, debugging performance issues, or working through data scenarios that mirror the scale and ambiguity of real-world operations.

This shift is not just a trend. It is grounded in decades of research in industrial and organizational psychology. A landmark meta-analysis by Frank L. Schmidt and John E. Hunter [1] found that work sample tests are among the strongest predictors of job performance. As they note,

“work sample tests have high predictive validity because they closely mirror the tasks required on the job.”

Netflix’s broader culture [2] helps explain why this approach fits so naturally into its hiring process. The company is known for emphasizing what it calls “high performance” and “freedom and responsibility,” where employees are expected to operate with significant autonomy and deliver impact quickly. In such an environment, hiring decisions carry higher stakes. The cost of a poor hire is not just productivity loss but potential disruption to highly independent teams.

As a result, evaluation at Netflix tends to focus less on theoretical knowledge and more on applied judgment. Candidates are expected to demonstrate how they think, prioritize, and make tradeoffs in scenarios that resemble actual engineering or business challenges. In practice, this means assignments that feel less like tests and more like work.

I find this distinction important. Traditional interviews often reward memorization or pattern recognition. Realistic assignments, by contrast, reward decision-making under constraints. They reveal how candidates handle ambiguity, communicate assumptions, and balance competing priorities. These are precisely the skills that matter in production environments.

However, this realism introduces a second-order effect that is often overlooked.

When assignments closely resemble real business problems, they do more than evaluate capability. They also generate solutions that exist within a meaningful context. Even if simplified, these tasks often reflect genuine challenges faced by the organization, whether in system scalability, data processing, or user experience design.

This creates a situation where candidate outputs can contain relevant insights. Not polished, production-ready solutions, but perspectives, tradeoffs, and approaches that may still be useful at a conceptual level.

Research in organizational learning [3] helps explain why. Companies do not learn only from internal work and training. They also learn by observing how different individuals approach the same problem. Exposure to multiple independent solutions can highlight patterns, reveal blind spots, and introduce alternative ways of thinking.

In the context of Netflix-style hiring, this effect is amplified by selectivity. The company reviews relatively few candidates for its size, but those candidates are often highly skilled. This increases the likelihood that submissions will reflect thoughtful, well-developed approaches.

From the company’s perspective, this is a natural byproduct of rigorous evaluation. From a candidate’s perspective, it can feel more ambiguous.

The assignment is framed as a test, but experienced as real work. The output is evaluated individually, but exists within a broader set of solutions that the company can observe and learn from.

That tension does not imply wrongdoing. There is no evidence that Netflix or similar companies systematically reuse candidate work. But it does highlight how realism changes the nature of hiring. The closer the evaluation gets to real work, the more it begins to produce real-world value, even if indirectly.

As I see it, this is the tradeoff at the heart of modern hiring. Realism improves signal quality. It makes hiring more predictive, more relevant, and arguably more fair in terms of assessing actual ability. At the same time, it blurs the boundary between evaluation and contribution in ways that are not always fully acknowledged.

Understanding that tradeoff is essential for both companies and candidates navigating Netflix-influenced hiring practices.

Indirect Value and Knowledge Spillovers

To understand the less visible effects of Netflix-style hiring, it helps to look beyond recruiting and into economic and organizational theory. Companies like Netflix do not operate in isolation when they evaluate candidates. Even when assignments are designed purely for assessment, they exist within a broader system of learning, comparison, and idea exchange.

Economic research provides a strong foundation for this perspective. Adam B. Jaffe [4], in his work on knowledge spillovers, explains that the benefits of innovation are not fully captured by the firm that creates them. In other words, ideas naturally extend beyond their original context, often influencing others through indirect exposure rather than deliberate transfer.

In parallel, research by Wesley M. Cohen and Daniel A. Levinthal [5] introduced the concept of absorptive capacity. They define it as a firm’s ability to recognize the value of new external information, assimilate it, and apply it. This capability, they argue, is central to how organizations learn and innovate over time.

When I map these ideas onto hiring practices at companies like Netflix, the connection becomes clear. Take-home assignments and real-world interview scenarios expose organizations to multiple independent approaches to the same problem. Each candidate brings a slightly different perspective, shaped by their experience, assumptions, and technical preferences.

Individually, these submissions are evaluated to make hiring decisions. Collectively, they can function as a form of external input.

Netflix’s hiring philosophy, which emphasizes high judgment and context-driven decision-making, reinforces this effect. The company is known for prioritizing candidates who can operate in ambiguous, production-like environments. This often leads to assignments that are open-ended rather than strictly defined, allowing candidates to explore tradeoffs rather than converge on a single “correct” answer.

That openness increases variability across submissions.

From an evaluation standpoint, this variability is useful. It helps hiring teams distinguish between candidates based on how they think, not just what they produce. But from a learning standpoint, it also creates exposure to a range of possible solutions.

Research in innovation studies suggests that this kind of exposure matters. Work associated with the MIT Center for Collective Intelligence [6] suggests that diverse problem-solving approaches, when compared and aggregated, can lead to better overall decision-making. Groups that incorporate varied perspectives tend to outperform those relying on a single dominant approach.

In a hiring context, this does not mean that companies are intentionally harvesting ideas. There is no evidence that Netflix or similar organizations systematically use candidate submissions in product development. The primary goal remains evaluation.

However, exposure leads to learning, even when learning is not the explicit objective.

I think this is where the nuance often gets lost in online discussions. The concern is sometimes framed as companies “getting free work.” That framing suggests direct extraction, which is not supported by evidence in most cases.

A more accurate description is indirect value creation.

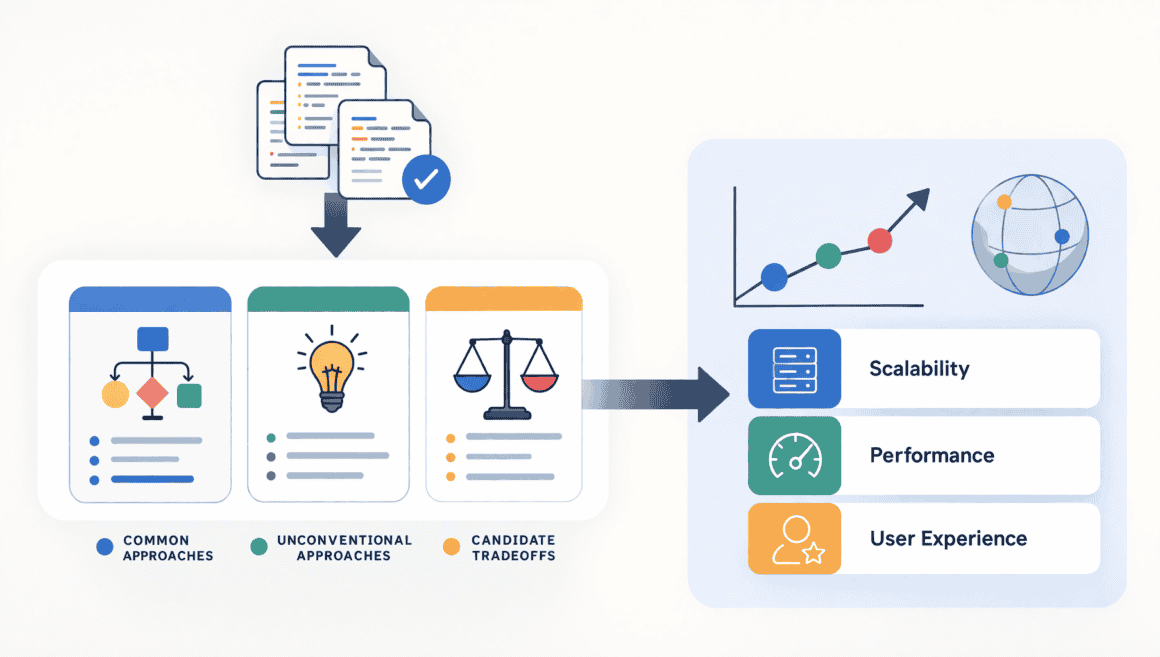

By reviewing multiple candidate solutions, organizations can observe patterns. They can see which approaches are common, which are unconventional, and which tradeoffs candidates consistently make. Over time, this can influence how internal teams think about similar problems.

This is particularly relevant at a company like Netflix, where engineering and product decisions often involve balancing scalability, performance, and user experience at a global scale. Even small variations in approach can be meaningful when applied across such systems.

At the same time, it is important to keep this effect in perspective. The value generated through hiring evaluations is diffuse and incremental. It does not replace internal R&D or structured innovation processes. Instead, it acts as a supplementary form of exposure to external thinking.

From a candidate’s perspective, however, the experience can feel different.

When I consider the process from that side, the asymmetry becomes more visible. Candidates invest focused effort into solving realistic problems. They may explore multiple approaches, refine their solutions, and document their reasoning. Even if their work is not used directly, it becomes part of a broader set of inputs that the company can learn from.

That does not make the process exploitative. But it does make it more complex than a simple evaluation.

In the end, Netflix-style hiring highlights a broader truth about modern knowledge work. Learning does not happen only through formal channels. It happens through exposure, comparison, and the accumulation of diverse perspectives.

Take-home assignments, especially those grounded in real-world scenarios, naturally create those conditions. The challenge is not to eliminate this effect, but to recognize it and ensure that the balance between evaluation and effort remains fair and transparent.

While academic research explains how indirect value can emerge, candidate discussions reveal how it is perceived.

On Hacker News [7], one user noted that companies benefit from seeing “a variety of solutions to the same problem,” adding that this can surface approaches “you might not have considered internally.”

Others in the same discussion pushed back, arguing that this is simply an unavoidable consequence of evaluating realistic work. If companies want to assess practical skills, they will inevitably encounter practical ideas.

On Reddit’s r/cscareerquestions [8] forum, a candidate framed the issue more critically, writing that even if code is not reused,

“you’re still giving them insight into how people solve their problems.”

These comments are anecdotal, but they point in the same direction on both platforms. Candidates rarely accuse companies of direct misuse. Instead, they express concern about asymmetry. They invest time and creativity, while companies gain exposure to a wide range of approaches at little cost.

Aggregation and Pattern Recognition

The potential for indirect value creation becomes much clearer when viewed through the lens of scale, and this is where a company like Netflix offers a particularly relevant case.

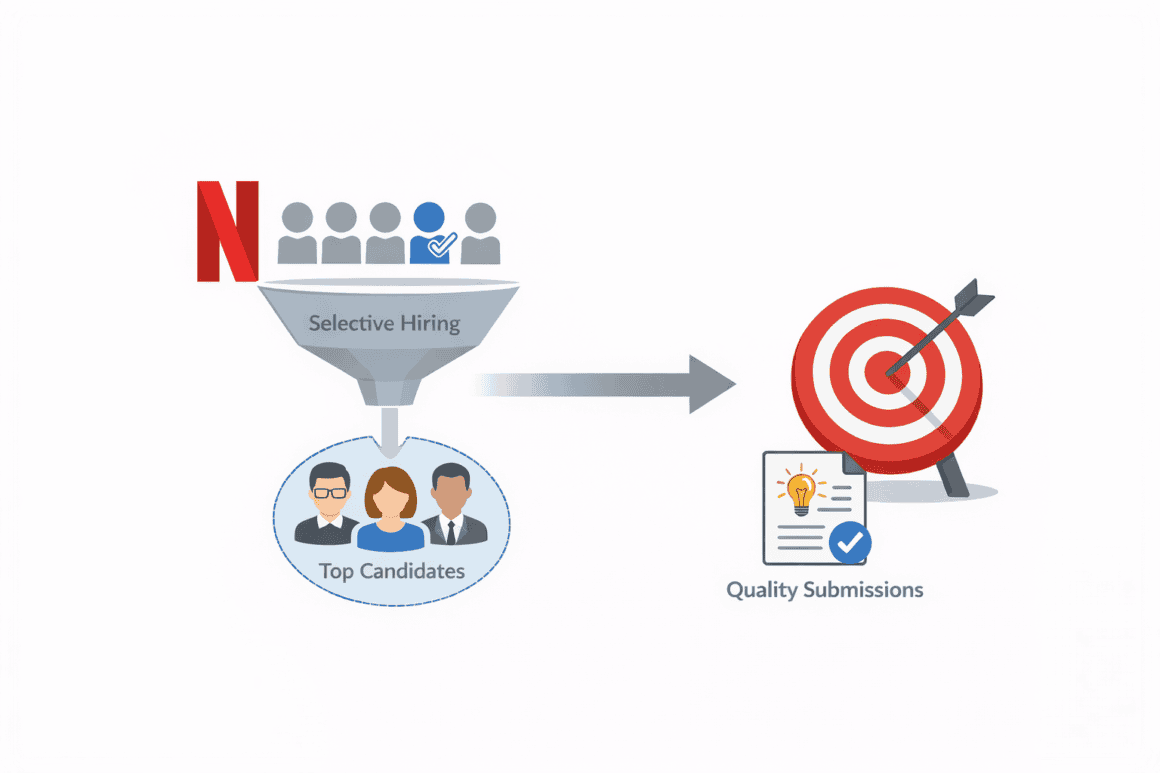

Netflix is known for maintaining a highly selective hiring process. It does not hire at the same volume as many large tech companies, but it places a strong emphasis on quality and alignment. This means that for any given role, multiple highly skilled candidates may be evaluated against similar real-world scenarios. Even if the absolute number of candidates is smaller, the depth and quality of submissions tend to be high.

Individually, a single take-home assignment or interview solution has a limited impact. But when multiple candidates independently approach the same problem, their combined output begins to reveal patterns. Certain architectural decisions may appear repeatedly. Similar tradeoffs may emerge across submissions. At the same time, a few candidates may introduce novel or unconventional approaches that stand out.

This aggregation effect is well documented in research on collective intelligence. Studies [9] show that groups can outperform individuals when diverse perspectives are combined and compared. As this research highlights, “diverse groups of people can be smarter than individuals” when solving complex problems.

While hiring processes are not explicitly designed as collective intelligence systems, Netflix’s evaluation model can produce similar dynamics. By exposing hiring panels to multiple independent solutions, the process naturally creates opportunities for comparison and pattern recognition.

This aligns with Netflix’s broader decision-making culture. The company emphasizes context, judgment, and informed debate rather than rigid rules. In such an environment, seeing multiple ways to solve the same problem can be valuable, not because any single solution is adopted, but because it enriches internal understanding.

For example, if several candidates converge on a particular system design approach, that convergence can act as a form of informal validation. It suggests that certain tradeoffs are widely recognized as effective. On the other hand, if a candidate presents a distinctly different solution that is still viable, it can challenge assumptions or highlight alternatives that may not have been previously considered.
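To make the convergence-versus-outlier idea concrete, here is a minimal Python sketch. Everything in it is hypothetical: the candidate labels, the design choices, the `summarize_approaches` helper, and the 50% threshold are assumptions made purely for illustration, not a description of how Netflix or any other company reviews submissions.

```python
from collections import Counter

# Hypothetical data: the primary design choice each candidate made for the
# same made-up take-home prompt. None of this comes from a real company.
submissions = {
    "candidate_a": "message_queue",
    "candidate_b": "message_queue",
    "candidate_c": "message_queue",
    "candidate_d": "polling",
    "candidate_e": "event_sourcing",
}


def summarize_approaches(choices, convergence_threshold=0.5):
    """Split approaches into convergent ones and one-off outliers.

    An approach counts as "convergent" when at least `convergence_threshold`
    of all submissions share it, and as an "outlier" when exactly one
    submission uses it. Both definitions are illustrative assumptions.
    """
    counts = Counter(choices.values())
    total = len(choices)
    convergent = sorted(
        approach for approach, n in counts.items()
        if n / total >= convergence_threshold
    )
    outliers = sorted(approach for approach, n in counts.items() if n == 1)
    return convergent, outliers


convergent, outliers = summarize_approaches(submissions)
print("Convergent:", convergent)
print("Outliers:", outliers)
```

With this sample data, three of five submissions share one approach, so it is reported as convergent, while the two approaches that appear only once are reported as outliers. The point is that neither signal exists in any single submission. Both appear only once the submissions are viewed side by side.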

Over time, this exposure can subtly influence how teams think.

Research in organizational learning supports this idea. Firms do not rely solely on internal experimentation. They also learn by observing external approaches and integrating relevant insights. In a hiring context, candidate submissions become one such source of external input, even if that is not their primary purpose.

From Netflix’s perspective, this is likely an incidental benefit rather than a deliberate objective. The primary goal remains to identify candidates who can perform at a high level in real-world conditions. The aggregation of insights is simply a byproduct of evaluating realistic work at a high standard.

However, from a candidate’s perspective, the dynamic can feel different.

When I think about the experience from that side, the asymmetry becomes more apparent. Each candidate is solving the problem independently, investing time and effort into producing a thoughtful solution. Yet their work exists alongside many others, forming a broader pool of approaches that the company can observe and learn from.

This is where the concern becomes more nuanced. The value is not derived from any single submission, nor is it typically captured directly or tangibly. Instead, it emerges from the aggregation of many contributions and the patterns that become visible through comparison.

That distinction matters. It moves the conversation away from claims of direct exploitation and toward a more subtle question about how value is created in modern hiring systems.

For Netflix-style hiring, the implication is clear. The closer assessments get to real work, the more they generate not just signals about candidates, but also insights about the problem space itself.

From a company perspective, this may simply be a natural extension of rigorous evaluation. From a candidate perspective, it can feel like contributing to a broader knowledge system without clear acknowledgment.

Recognizing this dual perspective is essential. It does not invalidate the effectiveness of realistic hiring methods, but it does highlight the need for greater transparency and thoughtful design in how those methods are applied.

Legal Boundaries and Ethical Considerations

When examining Netflix-style hiring, it is important to separate what is legally permissible from what candidates experience in practice. From a legal standpoint, companies like Netflix are operating within well-established norms. Take-home assignments and work samples are widely accepted as valid hiring tools, particularly for roles that require demonstrable technical or analytical skills.

Under U.S. labor law, the key question is who primarily benefits from the work performed. Guidance from the U.S. Department of Labor [10], written for unpaid internships rather than hiring exercises, applies a “primary beneficiary” test: an unpaid arrangement may raise concerns if the employer, rather than the individual, is the primary beneficiary of the activity.

In the context of hiring, this threshold is typically not crossed. Netflix and similar companies structure assignments as hypothetical or evaluative exercises. There is no credible public evidence that candidate submissions are systematically incorporated into production systems or commercial outputs. From a compliance perspective, this keeps the process within legal boundaries.

However, Netflix’s emphasis on realism introduces a layer of complexity that the legal framework does not fully address.

Because the company prioritizes real-world problem solving, its assessments often resemble actual work scenarios. Candidates may be asked to design systems, reason through scalability challenges, or analyze data in ways that closely mirror internal tasks. This alignment improves evaluation quality, but it also makes the boundary between “test” and “work” less clear from the candidate’s point of view.

The law focuses on direct, tangible outcomes, such as whether code is reused or integrated into products. It does not account for indirect benefits, such as learning, pattern recognition, or exposure to diverse approaches. As discussed earlier, these forms of value can emerge naturally when organizations evaluate multiple high-quality submissions.

This creates a gap between legal compliance and perceived fairness.

From Netflix’s perspective, the process is consistent with its culture of high performance and informed decision-making. The goal is to simulate the kinds of challenges employees will actually face, ensuring that hiring decisions are based on meaningful signals rather than abstract proxies.

From a candidate’s perspective, the experience can feel different.

When I consider it from that angle, the effort required to complete these assignments stands out. Candidates may spend several hours, sometimes more, developing thoughtful solutions, refining their reasoning, and presenting their work clearly. Even if the assignment is officially scoped to a shorter timeframe, the competitive nature of Netflix-style hiring often pushes candidates to invest additional effort.

This is where the ethical debate begins to diverge from the legal one.

The core issue is not whether companies are violating labor laws. It is whether the exchange between the candidate and employer feels balanced. Candidates contribute time, energy, and expertise with no guarantee of return. In highly realistic assignments, that contribution can feel indistinguishable from unpaid professional work, even when no direct value is extracted.

Transparency plays a significant role in shaping this perception. Netflix, like many companies, does not publicly detail how submissions are analyzed beyond their role in evaluation. Candidates are rarely told whether their solutions are compared across applicants, how long their work is retained, or whether patterns are discussed internally.

This lack of visibility can amplify concerns. When expectations are high and processes are opaque, candidates are more likely to question how their work is being used.

The broader industry has begun to respond to these concerns, in part because companies like Netflix have raised the standard for realism. Some organizations now offer stipends for take-home assignments, acknowledging the time investment required. Others impose strict limits, such as capping assignments at two to four hours, or replace them entirely with live, collaborative problem-solving sessions.

These alternatives aim to preserve the benefits of realistic evaluation while reducing the burden on candidates.

For Netflix, this presents an interesting balance. The company’s brand and compensation structure allow it to maintain a rigorous hiring bar without losing access to top talent. However, as its approach influences the broader industry, not all organizations have the same ability to offset the costs imposed on candidates.

As a result, what works for Netflix at the top of the market may create friction when adopted elsewhere without adjustment.

In the end, Netflix-style hiring sits at the intersection of strong predictive assessment and evolving ethical expectations. Legally, the model is sound. Practically, it is effective. But ethically, it raises questions about time, effort, and transparency that the industry is still working to address.

The challenge going forward is not to abandon realism, but to ensure that it is applied in a way that respects both the goals of the company and the contributions of the candidate.

One of the most important aspects of this issue is how differently it affects candidates.

Experienced professionals often have the option to decline time-intensive assignments. They may have multiple opportunities or enough leverage to negotiate alternatives.

Early-career candidates, by contrast, are more likely to accept these requirements. They may feel that completing assignments is necessary to remain competitive, even when the time investment is significant.

This creates an uneven distribution of unpaid effort.

Labor market research generally suggests that individuals with fewer options are more likely to accept higher costs in exchange for a potential opportunity. In hiring, this translates into a system where the burden of take-home assignments falls disproportionately on those with less bargaining power.

A Balanced View

The question of value creation in take-home assignments does not have a simple answer.

There is no strong evidence of widespread direct misuse of candidate work. Most companies, including Netflix, appear to operate within established legal and professional norms.

At the same time, the structure of realistic assignments creates conditions where indirect value can emerge. Through aggregation and comparison, organizations may gain insights that extend beyond individual evaluations.

As I worked through this topic, what stood out was the gap between intention and perception. Companies design assignments as evaluation tools. Candidates experience them as high-effort contributions with uncertain outcomes.

Bridging that gap will require more than policy changes. It will require clearer communication, better scoping, and a stronger acknowledgment of the time and effort candidates invest.

In a hiring landscape shaped by Netflix-style expectations, that balance will be essential.

Sources

1. PsycNet, psycnet.apa.org/doiLanding?doi=10.1037%2F0033-2909.124.2.262. Accessed 18 Mar. 2026.

2. “Netflix Jobs.” jobs.netflix.com/culture. Accessed 18 Mar. 2026.

3. SAGE Journals, journals.sagepub.com/doi/10.1177/2158244018794224. Accessed 19 Mar. 2026.

4. NBER, 22 Sept. 1999, www.nber.org/system/files/working_papers/w3993/w3993.pdf. Accessed 18 Mar. 2026.

5. JSTOR, www.jstor.org/stable/2393553?origin=crossref. Accessed 18 Mar. 2026.

6. “Research CCI.” MIT Center for Collective Intelligence, cci.mit.edu/research/. Accessed 19 Mar. 2026.

7. “I turned my interview task for Google into a startup.” Hacker News, news.ycombinator.com/item?id=19927266. Accessed 19 Mar. 2026.

8. Reddit, www.reddit.com/r/cscareerquestions/comments/ulnja7/made_the_decision_to_never_do_another_online/. Accessed 19 Mar. 2026.

9. Taylor & Francis Online, www.tandfonline.com/doi/full/10.1080/0022250X.2024.2428641. Accessed 19 Mar. 2026.

10. “Fact Sheet #71: Internship Programs Under The Fair Labor Standards Act.” U.S. Department of Labor, www.dol.gov/agencies/whd/fact-sheets/71-flsa-internships. Accessed 19 Mar. 2026.