It happened on a Tuesday morning, which is when these things always seem to happen. A restaurant owner in Chicago opened her laptop, pulled up her Google Business Profile, and watched her review count drop in front of her. Forty-seven reviews, gone. No warning. No email. No explanation from Google. Just a smaller number staring back at her where a larger one used to be. Her four-and-a-half-star rating, built over eight years of long Saturdays and good food, dropped to four-point-one overnight. She told a local business forum that it felt like someone had broken into her restaurant and stolen the testimonials off the wall.

This kind of story is not unusual anymore. It is, in fact, ordinary. Across forums like Local Search Forum1, Google’s own Business Profile community2, and dozens of Reddit threads, business owners have been filing similar accounts since at least 2024, and the pace of deletions has not slowed. What started as a quiet algorithmic housecleaning has grown into something more troubling: a system so aggressive in its pursuit of fake reviews that it has begun devouring the real ones.

I spent several weeks digging into this. I read through complaint threads, studied industry research, and tried to understand how a company with Google’s resources manages to regularly delete content that real customers wrote from real experience. The answer, it turns out, is complicated, and not entirely Google’s fault. But the human cost is real, and the opacity around the whole process is troubling in ways that go beyond a simple algorithmic glitch.

The Scale of the Problem

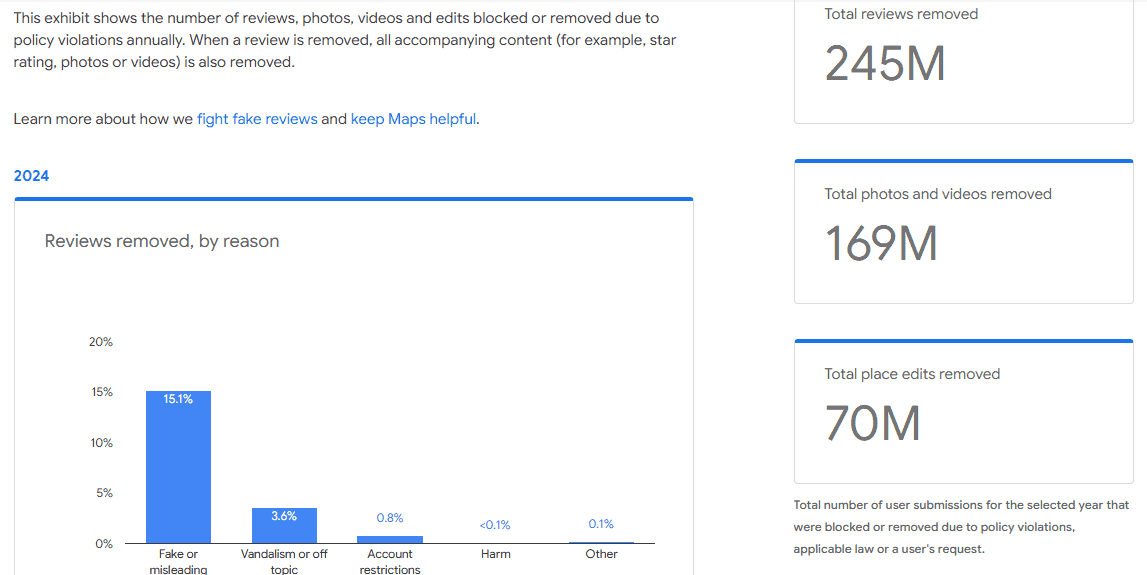

Start with the numbers, because they are staggering. Yahoo Tech3 reported that Google blocked or removed more than 240 million policy-violating reviews in 2024, a figure that represents a 40 percent increase over the previous year. In 2023 it removed roughly 170 million. In 2020, the number was 55 million. The trajectory is steep and it only points upward.
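For readers who want to check the arithmetic, here is a minimal sketch that recomputes the growth from the three totals quoted above. It assumes the 240 million figure is the 2024 total; the variable and function names are mine, not from any source.

```python
# Removal totals as quoted in this article, in millions of reviews.
# Assumption: the 240 million figure is the 2024 total.
removed_millions = {2020: 55, 2023: 170, 2024: 240}


def pct_increase(old: float, new: float) -> float:
    """Percentage increase going from old to new."""
    return (new - old) / old * 100


yoy_2024 = pct_increase(removed_millions[2023], removed_millions[2024])
since_2020 = pct_increase(removed_millions[2020], removed_millions[2024])

print(f"2023 -> 2024: {yoy_2024:.1f}% increase")
print(f"2020 -> 2024: {since_2020:.1f}% increase")
```

The year-over-year result lands at about 41 percent, in line with the reported 40 percent, and the 2024 total is more than four times the 2020 one.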

Those headline figures sound like progress. And for the most part, Google frames them that way. The company describes its work as protecting consumers from a marketplace riddled with fraudulent five-star reviews bought from review farms, bot networks, and reputation management agencies operating out of countries with few legal constraints. None of that framing is wrong. Review fraud is a genuine and serious problem. A study by The Transparency Company4 found that review fraud costs consumers an estimated 300 billion dollars in annual harm, with the average American household losing roughly 2,385 dollars per year by being misled into choosing businesses inflated by fake praise.

But embedded in that 240 million number is a figure nobody talks about much. Research from Search Engine Land5 tracking over 60,000 Google Business Profiles across 79 countries found that roughly 3 to 5 percent of all Google reviews are removed, and that many of those removals are false positives: legitimate reviews from genuine customers caught in an algorithmic net that does not distinguish very well between a fake review and a real one that happens to look unusual. As one local search forum member pointed out:

“At this point, missing legitimate, hard-earned reviews for local businesses seems less like a bug and more like a feature. This has been going on for years and Google is getting worse about doing anything about it.”

Between January and July 2025, things got noticeably worse. ALM Corp6 reported that deletion rates surged by over 600 percent following the deeper integration of Google’s Gemini AI into review moderation. At the peak of enforcement in July 2025, nearly 1,200 monitored business locations experienced at least one review deletion within a single seven-day period. That figure extrapolates, across Google’s full ecosystem, to potentially millions of deletions per month.

In February 2025, businesses worldwide woke up to an acute version of the problem. Reports flooded into support forums of review counts dropping by 10 to 50 reviews overnight. Some businesses lost 159 reviews in six days. Some saw 60 reviews vanish in a single morning. Google eventually acknowledged it as a known issue. The phrasing it used was carefully chosen: a ‘bug.’ But many business owners who watched it happen do not think what they saw looked like an accident.

The Machine That Decides

To understand why legitimate reviews disappear, you need to understand the machine doing the removing. For most of its existence, Google’s review moderation system relied on a combination of user reports and basic spam filters. If a review was written in obviously identical language to a dozen others posted around the same time, it would get flagged. If a reviewer posted nothing but five-star ratings for a cluster of unrelated businesses in multiple countries over 48 hours, the system would notice.

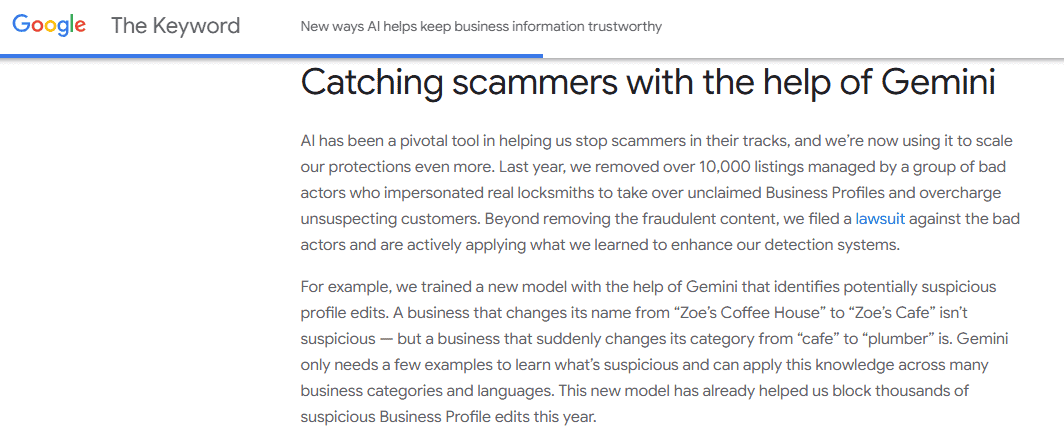

In April 2024, that changed. Google7 announced the integration of Gemini, its most advanced large language model, into its review moderation infrastructure. The company described the upgrade with enthusiasm. Gemini, it explained, could analyze review content, reviewer behavior, timing patterns, and business-reviewer relationship signals. It could identify abuse patterns retroactively, meaning reviews that seemed fine at the time of posting could now be revisited and removed months later. It could spot suspicious profile edits, flagging a business that suddenly changed its category from ‘cafe’ to ‘plumber’ while letting slide a routine name update from ‘Zoe’s Coffee House’ to ‘Zoe’s Cafe.’

The problem is that no AI system trained on historical patterns operates without false positives. Gemini is not exempt from this. An academic study published in the MDPI journal Applied Sciences8 analyzing fake review detection on Google Maps found that as detection sensitivity increases, so does the rate of false positives, meaning legitimate accounts get flagged alongside fake ones. The researchers noted that Google does not publicly disclose its detection methodology, which makes external auditing of its error rate essentially impossible.

What the research community suspects, and what the patterns of deletion seem to confirm, is that Gemini is catching real fraud and collateral damage simultaneously. Reviews from customers who only ever left one or two reviews on Google look, to a pattern-matching system, the same as reviews posted by a sockpuppet account created to game a rating. A burst of genuine reviews following a local news story, a viral social media moment, or an email campaign looks, from the outside, exactly like a coordinated fake-review attack. The algorithm does not ask why the pattern exists. It just acts on what it sees.
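To make that concrete, here is a deliberately naive sketch of a velocity check. It is not Google’s method, which is undisclosed. The function name, the seven-day window, and the threshold of five reviews are all assumptions chosen for illustration. The point is only that a rule keyed to volume alone cannot see why a burst happened.

```python
from datetime import date, timedelta


def flag_bursts(review_dates, window_days=7, max_per_window=5):
    """Return the dates of reviews that push a trailing window over the limit.

    Hypothetical rule: any review that is at least the (max_per_window + 1)th
    within the trailing window_days is flagged. Nothing here inspects content.
    """
    ordered = sorted(review_dates)
    flagged = []
    start = 0
    for end, current in enumerate(ordered):
        # Slide the window start forward until it spans fewer than window_days.
        while (current - ordered[start]).days >= window_days:
            start += 1
        if end - start + 1 > max_per_window:
            flagged.append(current)
    return flagged


# A steady trickle: one genuine review every ten days.
trickle = [date(2025, 1, 1) + timedelta(days=10 * i) for i in range(10)]

# A local news story: eight genuine reviews spread over three days.
burst = [date(2025, 5, 1) + timedelta(days=i % 3) for i in range(8)]

print(flag_bursts(trickle))               # nothing flagged
print(len(flag_bursts(trickle + burst)))  # some genuine reviews flagged
```

Every review in this example is genuine by construction, yet the burst still trips the rule. A real system weighs far more signals, but the same trade-off applies: tightening the threshold catches more fraud and more bystanders at once.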

One particularly revealing data point from Up and Social9 shows that 73.1 percent of deleted reviews in early 2025 were five-star ratings. That is consistent with the theory that Gemini is trained to be especially skeptical of the star ratings most associated with manipulation. But five-star reviews are also, of course, the most common rating real satisfied customers leave. The algorithm cannot easily tell the difference, and for now, it errs on the side of removal.

What Gets Caught in the Net

I want to be specific here, because the technical language can obscure the human reality. Let me describe what happens to a real review from a real customer.

A customer visits a dentist. She has a good experience. She opens Google Maps, writes three sentences about the staff and the painless procedure, and posts it. For a while, it appears on the dentist’s profile. Then, weeks later, it disappears. Why? Possibly because she has only ever left one other review on Google, making her look, statistically, like an account created for artificial purposes. Possibly because a competitor recently tried to manipulate the dentist’s ratings, triggering heightened scrutiny on the entire profile. Possibly because she wrote her review from her workplace, where a colleague also reviewed the same dentist from the same IP address, and the system interpreted that as coordinated behavior. None of these explanations require bad faith from the customer. All of them can lead to removal.

The documentation from Igniting Business10, one of the more methodical trackers of Google’s review filtering behavior, lists some of the content triggers that can lead to suppression: excessive capitalization, multiple exclamation points, phrases that pattern-match to review spam templates, links or phone numbers embedded in the text. But it also notes behavioral signals that have nothing to do with the content of the review itself. Reviews posted from shared IP addresses get flagged. A business owner who sends a bulk email request to customers from the past six months, generating a sudden spike in reviews, can trigger the algorithm. Even a legitimate business that appears in local news and gets a natural surge of attention can look suspicious to a system calibrated to treat surges as manipulation.

A particularly cruel irony: data from ReplyOnTheFly11 shows that 66 percent of deleted reviews had received no response from the business. Google’s system appears to interpret business engagement as a signal of legitimacy. Unresponded-to reviews look more likely to be orphaned spam. So the businesses that are too busy running their operations to respond to every post online are, in effect, penalized twice: once by not responding, and once when the reviews quietly disappear.

The Ranking Consequences

If the human cost of losing reviews is real, the economic cost is measurable, and it runs through something called the local pack. When you search Google for a plumber, a restaurant, or a dentist near you, the first results you typically see are a block of three business listings displayed on a map. This is the local pack, sometimes called the three-pack, and it is the most valuable real estate in local search. Getting into it, or staying in it, is the difference between a phone that rings and one that does not.

Reviews are a core ranking factor in the local pack. The Whitespark 2026 Local Search Ranking Factors report, as cited by MetricsRule12, found that review signals account for roughly 20 percent of local pack ranking weight, up from 16 percent in 2023. Research from BrightLocal, as cited by ALM Corp13, indicates that businesses in positions one through three receive around 42 percent of all clicks, while positions four through ten collectively receive only about 13 percent. A drop from second place to sixth place following a review purge is not a minor inconvenience. It is a structural change in who can find the business.
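A rough back-of-the-envelope calculation shows the size of that gap. The sketch below assumes clicks are split evenly within each band of positions, which is a simplification of mine and not something the cited research claims; real click curves are steeper at the top.

```python
# Click shares as quoted above (percent of all clicks).
top_band_share, top_band_size = 42.0, 3      # positions 1 through 3
lower_band_share, lower_band_size = 13.0, 7  # positions 4 through 10

# Assumption: an even split inside each band, purely for illustration.
per_top_position = top_band_share / top_band_size
per_lower_position = lower_band_share / lower_band_size
ratio = per_top_position / per_lower_position

print(f"Average share per top-three position:   {per_top_position:.1f}%")
print(f"Average share per position four to ten: {per_lower_position:.2f}%")
print(f"Ratio: about {ratio:.1f}x")
```

Under that even-split assumption, a listing that falls out of the top three ends up with something like one seventh of the click share it had before.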

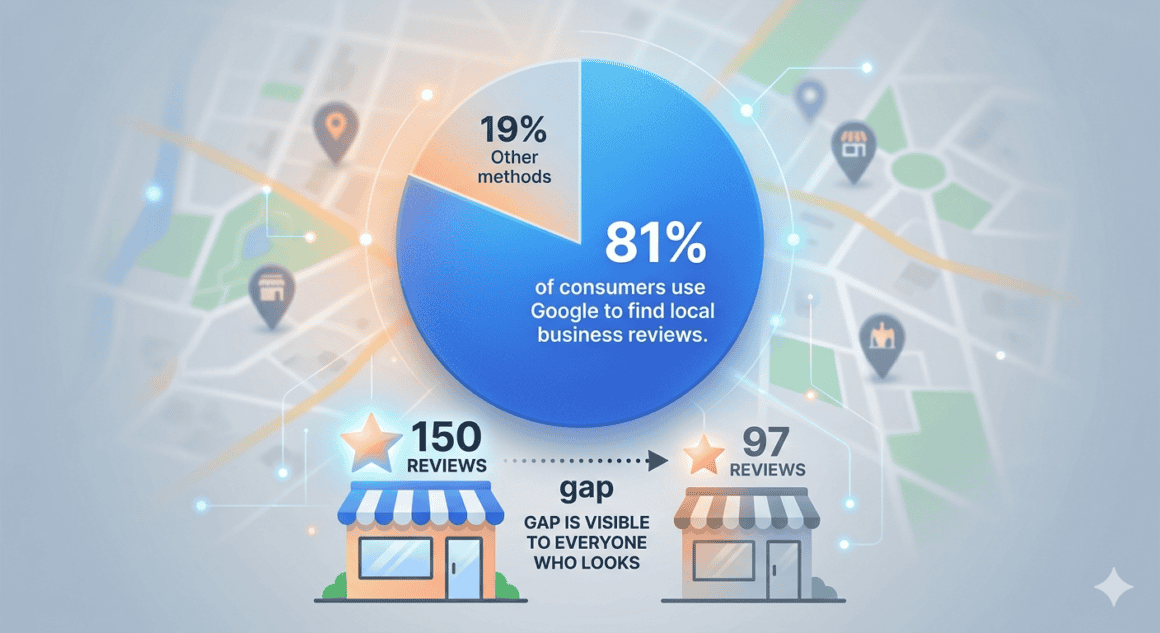

The review count matters as much as the rating. According to Marketing LTB14, 81 percent of consumers use Google to find local business reviews, and when a business that had 150 reviews now shows 97, the gap is visible to everyone who looks. Potential customers see it. They do the informal math. They move on to the competitor with more reviews. The business owner, meanwhile, has no clear way to explain what happened, because Google does not tell them. There is no notification when a review is removed. No reason given. No appeals process that reliably works.
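Because no notification arrives, the only way an owner learns about a removal is by comparing what is there now with what was there before. Here is a minimal sketch of that comparison. It assumes you already have two snapshots of your reviews keyed by some stable identifier; how you collect them is out of scope here, and the IDs and ratings below are made up.

```python
def diff_snapshots(previous, current):
    """Compare two {review_id: stars} snapshots and report what changed."""
    removed = {rid: stars for rid, stars in previous.items() if rid not in current}
    added = {rid: stars for rid, stars in current.items() if rid not in previous}
    return removed, added


def average(snapshot):
    """Plain arithmetic mean of the star ratings in a snapshot."""
    return sum(snapshot.values()) / len(snapshot) if snapshot else 0.0


# Hypothetical snapshots taken a day apart.
yesterday = {"r1": 5, "r2": 5, "r3": 4, "r4": 1, "r5": 5}
today = {"r1": 5, "r3": 4, "r4": 1, "r6": 5}

removed, added = diff_snapshots(yesterday, today)
print("Removed:", sorted(removed))
print("Added:", sorted(added))
print(f"Average moved from {average(yesterday):.2f} to {average(today):.2f}")
```

A dated log of these diffs gives you something specific to attach to a support request, which is more than the profile dashboard offers.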

Consider one business owner’s story documented on Local Search Forum, posted in late 2025:

“Some of the old reviews, five-plus years ago, have vanished. The reviews are from the clients that I am still actively working with and their emails are still active. Obviously, no violations of Google policies. I’ll be contacting support about it but I have very little hope that they will do anything about it.”

That pessimism about Google support is a recurring theme in every forum I read. The platform’s own support threads are full of identical stories, and the responses from Google are often boilerplate rejections that do not engage with the specifics of what was lost.

The Black Box and the Business Owner

Here is what strikes me most about all of this. Google’s position is essentially that it cannot be more transparent about its detection methods because doing so would help bad actors game them. That is a defensible argument. If Google published a list of every signal it uses to identify fake reviews, the people running review farms would read it over a cup of coffee and update their methods before lunch.

But the opacity cuts both ways. Google’s own Content Trust and Safety Report15 provides aggregate numbers on what was removed, but no methodology on how individual review decisions are made, no data on the false positive rate, and no mechanism for business owners to understand why a specific review was removed. The system functions like a court where the verdict is read but the evidence is never shown.

When business owners try to appeal, the process is opaque to the point of uselessness. A user on the Local Search Forum16 who once served as a Google Product Expert wrote in 2025:

“Even though it does still show up for the person who left the review, when we submit screenshots and there’s absolutely no policy violation whatsoever, we just get a generic canned response.”

The person had worked inside the ecosystem that was supposed to handle these problems and still could not get a meaningful answer.

There is also the question of whether some reviews are removed not through automated error but through systematic pressure. In Germany, a cottage industry of law firms has developed around using defamation laws to pressure Google into removing negative reviews. An analysis of 5,000 German business locations found that 2.19 percent of all reviews had been deleted since July 2024, a rate significantly higher than other markets, and that the deletions in Germany disproportionately targeted one-star reviews rather than the five-star reviews being scrubbed in English-speaking markets. That pattern is not consistent with an algorithm running neutral anti-fraud enforcement. It is consistent with legal intimidation working.

In the United States, the dynamics are different but no less troubling. The Electronic Frontier Foundation has raised concerns that the ability of businesses to suppress authentic customer feedback through legal intimidation threatens the fundamental purpose of consumer review systems. Section 230 of the Communications Decency Act protects Google from liability for third-party content, which means Google can remove almost anything it wants from its platform without legal consequence. That protection, designed to enable free expression online, also enables the quiet erasure of legitimate customer voices with no recourse.

The Review Extortion Parallel

While legitimate reviews disappear from one direction, fake negative reviews flood in from another. This is a problem that has gotten worse as Google’s spam removal has made fake positive reviews harder to maintain. The operators who once sold five-star reviews have diversified.

The New York Times17 investigated this phenomenon in 2025, documenting how small business owners were being approached via WhatsApp with messages warning that negative review campaigns had been ordered against their listings. A Los Angeles contractor described watching her five-star rating plunge to 3.6 after 20 fake one-star reviews appeared. She paid 250 dollars in two payments to have them removed. The cycle did not stop.
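The arithmetic behind that plunge is worth a moment, because it shows how exposed a small listing is. The sketch below assumes the displayed rating is a plain average and that every earlier review was five stars; both are simplifying assumptions of mine, and the function names are hypothetical.

```python
def average_after_attack(prior_count, prior_avg, fake_count, fake_stars=1):
    """Plain average once fake_count reviews of fake_stars are added."""
    total = prior_count * prior_avg + fake_count * fake_stars
    return total / (prior_count + fake_count)


def implied_prior_count(target_avg, fake_count, prior_avg=5.0, fake_stars=1):
    """Number of prior reviews needed for the attack to land on target_avg.

    Solves prior_avg * n + fake_stars * k = target_avg * (n + k) for n.
    """
    return fake_count * (target_avg - fake_stars) / (prior_avg - target_avg)


n = implied_prior_count(target_avg=3.6, fake_count=20)
print(f"Implied prior reviews: about {round(n)}")
print(f"Check: {average_after_attack(37, 5.0, 20):.1f} stars")
```

Under those assumptions the contractor had only a few dozen reviews to begin with, which is why twenty fakes could move her rating so far. A listing with a thousand reviews would barely register the same attack.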

In Georgia, a moving company owner named Nick Betourney received similar messages from a man who later admitted to the Times that he sold negative Google reviews for 100 dollars per batch of 20. The reviews were not generic. They were detailed and elaborate enough to pass Google’s content filters, which are optimized to remove low-quality text but less capable of detecting sophisticated fabrication.

The irony is that these extortion reviews are, in some ways, better at surviving Google’s filters than genuine reviews are. A genuine review from a regular customer with little Google history gets suppressed. An elaborate fake negative review, written to sound authentic, passes. The algorithm is better at identifying low-effort fraud than high-effort fraud, and the extortion market has professionalized accordingly.

What the Data Actually Shows

When you step back and look at the full picture, a few things become clear.

First, Google is genuinely fighting a serious problem. Review fraud causes real consumer harm, real competitive distortion, and real economic damage. The company’s investment in Gemini-based detection represents a meaningful technical advance over what existed before. The 240 million reviews removed in 2024 included a large number of genuinely fake ones, and the platform is measurably cleaner for the effort.

Second, the collateral damage to legitimate reviews is real, widespread, and growing. The 600 percent surge in deletion rates between January and July 2025 was not matched by a 600 percent surge in fraud. Something in the algorithm became more aggressive, and real reviews paid the price. Even at the moderated rates seen in late 2025, deletion rates remain approximately 400 percent higher than they were at the start of that year.

Third, the people who are harmed most by this are not the large businesses with marketing teams and SEO agencies on retainer. They are the small ones. The dentist. The restaurant. The moving company. The sole proprietor who spent years earning 80 reviews and now has 52, with no explanation and no path to recovery. The problem was significant enough that they had to build automated monitoring into their product specifically to track when reviews were removed, because the removals were happening too frequently to catch manually.

Fourth, the transparency gap is indefensible. Google can publish aggregate removal statistics while refusing to explain individual decisions. Business owners are expected to trust a process they cannot see, appeal to a system that does not engage with them, and absorb losses they cannot quantify. This is not a technical necessity. It is a policy choice.

The Regulatory Pressure Building

The regulatory environment around reviews is changing fast. The FTC’s Consumer Review Rule18, which took effect in October 2024, imposes civil penalties of up to 53,088 dollars per violation for businesses engaged in fake reviews, incentivized reviews, or review suppression. In December 2025, the FTC issued its first ten warning letters. The penalties are real and the enforcement is beginning.

In Europe, the Digital Services Act19 and strengthened consumer protection laws have increased platform accountability for review systems. The regulatory climate has visibly influenced Google’s enforcement posture, pushing the company toward more aggressive enforcement of its own policies. Whether that regulatory pressure also pushes Google toward more transparency about its false positive rate is less clear.

What is clear is that the current situation is unstable. A platform that blocks or removes hundreds of millions of reviews in a given year, with no public accounting of how many of those removals were errors, is operating with a level of unilateral authority over small business visibility that seems disproportionate to its transparency obligations. Google Maps is not a social media platform where the stakes are mostly reputational. For a local business, it is infrastructure. It is the thing that determines whether new customers can find you. Treating it with that kind of care requires more than a press release about how many fake reviews got caught.

What You Can Do About It

I want to be practical here because there is no point in ending on pure frustration. If you run a business that depends on Google Maps reviews, there are things you can do to reduce the risk of legitimate reviews being filtered out.

Ask for reviews from every customer, not just the happy ones. Review gating, the practice of pre-screening customers before directing them to leave feedback, is explicitly against Google’s policy and is increasingly detectable. Google’s updated contributor policy from 2024 specifically targets this behavior. More importantly, soliciting only positive reviews creates an unnatural pattern that looks, from the algorithm’s perspective, like manipulation.

Respond to every review you receive. The data on this is consistent across multiple sources: businesses that respond to reviews have a lower rate of review removal. Whether Google’s algorithm actively uses engagement as a legitimacy signal or whether this is correlation rather than causation, the result is the same. Responding is worth the time.

Space out your review requests. A sudden burst of reviews triggered by a bulk email campaign can look exactly like a coordinated fake review attack to an algorithm that does not know about your email list. Sending requests in batches, distributed over time, reduces the velocity signal that flags accounts for scrutiny.
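If you send requests from a customer list, the batching can be as simple as the sketch below. The batch size of ten and the three-day gap are placeholders I picked for illustration; nobody outside Google knows what velocity actually triggers scrutiny, so treat them as knobs to adjust, not as safe values. The email addresses are made up.

```python
from datetime import date, timedelta


def schedule_requests(customers, start, batch_size=10, days_between=3):
    """Split a customer list into dated batches instead of one bulk send."""
    schedule = {}
    for i in range(0, len(customers), batch_size):
        batch_number = i // batch_size
        send_on = start + timedelta(days=batch_number * days_between)
        schedule[send_on] = customers[i:i + batch_size]
    return schedule


# Hypothetical list of 35 past customers.
customers = [f"customer{n}@example.com" for n in range(1, 36)]
plan = schedule_requests(customers, start=date(2026, 1, 5))

for send_on, batch in plan.items():
    print(send_on.isoformat(), len(batch), "requests")
```

With these settings, 35 requests go out in four sends across ten days instead of landing in inboxes all at once.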

Encourage detailed reviews. A review that mentions a specific service, a staff member’s name, or a particular product is much harder for an algorithm to misidentify as generic spam. It is also more useful to potential customers. Both reasons are sufficient.

Diversify your review presence. Yelp, TripAdvisor, industry-specific directories, and other platforms offer some buffer against the volatility of any single platform’s enforcement decisions. If Google removes reviews and your reputation exists only on Google, you have nothing to point to. If your reputation exists across multiple platforms, you have evidence that your ratings are genuine.

The Larger Question

There is a version of this story where Google is simply doing the hard and imperfect work of keeping a valuable platform honest. That version is true, up to a point. Review fraud is real and it causes real harm, and a company that ignored it entirely would be failing its users.

But there is another version of this story, and it runs alongside the first one. In that version, a company with dominant market power over local business discovery operates a content moderation system with no external oversight, no meaningful transparency, no effective appeals process, and a growing false positive rate. In that version, the small business owner who spent years building a reputation through genuine customer relationships can lose pieces of it overnight, for reasons she will never be told, with no mechanism for recovery that reliably works. Both versions of the story are happening at the same time, in the same system, to the same people.

One Local Search Forum member put it plainly in a thread from 2025, speaking not about technical details but about the basic experience of being a small business owner in this environment:

“From the perspective of a searcher and potential customer looking up which local plumber or chiropractor to use, the one with 100 reviews or the one with 150 reviews, it’s one issue.”

It is one issue. And it is one that Google has the capacity to address more honestly than it currently does.

Sources

1. “Local Search Forum.” Local Search Forum, 15 Oct. 2022, localsearchforum.com. Accessed 7 Apr. 2026.
2. “Reviews on Google Maps are disappearing en masse.” Google Business Profile Community, support.google.com/business/thread/323812000/reviews-on-google-maps-are-disappearing-en-masse?hl=en. Accessed 7 Apr. 2026.
3. Kerns, Taylor. “Google is using AI to crack down on problematic reviews on Maps.” Yahoo Tech, 7 Apr. 2025, tech.yahoo.com/articles/google-using-ai-crack-down-130010025.html. Accessed 7 Apr. 2026.
4. Seidel, Ben. “How Google Business Profile Reviews Are Filtered for Spam in 2026.” Igniting Business, 6 Mar. 2026, www.ignitingbusiness.com/blog/how-google-business-profile-reviews-are-collected-and-filtered-for-spam. Accessed 7 Apr. 2026.
5. Search Engine Land, searchengineland.com/google-deleting-reviews-record-levels-466546. Accessed 7 Apr. 2026.
6. ALM Corp, almcorp.com/blog/google-reviews-deleted-ai-legal-takedowns-2025/. Accessed 7 Apr. 2026.
7. Leader, Ian. “New ways AI helps keep business information trustworthy.” 7 Apr. 2025, blog.google/products/maps/google-business-profiles-ai-fake-reviews/. Accessed 7 Apr. 2026.
8. MDPI, www.mdpi.com/2076-3417/13/10/6331. Accessed 10 Apr. 2026.
9. Up and Social, upandsocial.com/google-reviews-disappeared-business-guide/. Accessed 10 Apr. 2026.
10. Seidel, Ben. “How Google Business Profile Reviews Are Filtered for Spam in 2026.” Igniting Business, 6 Mar. 2026, www.ignitingbusiness.com/blog/how-google-business-profile-reviews-are-collected-and-filtered-for-spam. Accessed 10 Apr. 2026.
11. Team, ReplyOnTheFly. “Why Google Reviews Disappear: Causes and Recovery (2026).” ReplyOnTheFly, 22 Feb. 2026, www.replyonthefly.com/blog/google-reviews-disappearing. Accessed 10 Apr. 2026.
12. Sp, Ivan. “Why Local Businesses Rank in Google Maps But Not Organic Search: And How to Fix Both.” Metrics Rule, www.metricsrule.com/research/why-local-businesses-rank-in-google-maps-but-not-organic-search/. Accessed 10 Apr. 2026.
13. ALM Corp, almcorp.com/blog/google-reviews-deleted-ai-legal-takedowns-2025/. Accessed 10 Apr. 2026.
14. Nash, Bill. “Local SEO Statistics 2025: 98+ Stats & Insights [Expert Analysis].” Marketing LTB, 22 Oct. 2025, marketingltb.com/blog/statistics/local-seo-statistics/. Accessed 10 Apr. 2026.
15. “Google Transparency Report.” transparencyreport.google.com/maps-content/enforcement. Accessed 10 Apr. 2026.
16. “It appears that Google has removed more reviews this past week.” Local Search Forum, 17 Oct. 2025, localsearchforum.com/threads/it-appears-that-google-has-removed-more-reviews-this-past-week.62842/. Accessed 10 Apr. 2026.
17. The New York Times, 11 Sept. 2025, www.nytimes.com/2025/09/11/technology/fake-reviews-small-businesses.html. Accessed 10 Apr. 2026.
18. FTC, www.ftc.gov/business-guidance/blog/2025/12/warning-letter-or-ten-businesses-comply-ftcs-consumer-review-rule. Accessed 10 Apr. 2026.
19. “The Digital Services Act.” Shaping Europe’s digital future, digital-strategy.ec.europa.eu/en/policies/digital-services-act. Accessed 10 Apr. 2026.