
The first thing you notice when you scroll through the reviews for a newly launched product on Amazon is the green badge. ‘Vine Customer Review of Free Product.’ It sits just below the reviewer’s name, a small rectangular stamp that Amazon designed to disclose something most shoppers do not stop to think about: the person who wrote this review did not pay for what they are describing. They requested it. It was shipped to their door at no cost. And then they sat down to tell you whether it is worth your money.

- The Mechanics of the Machine

- The History Behind the Badge

- What the Numbers Actually Say

- Gratitude Bias and the Elephant in the Room

- The Seller Side of the Story

- The Tax Wrinkle That Changes the Calculus

- What Regulators Are Watching

- The Bigger Picture: What Reviews Are Actually For

- The Verdict: Useful, But Not What It Claims

I have spent weeks looking at this system, reading seller complaints, academic papers, forum threads from reviewers themselves, and the data that has trickled out from researchers. What I found is not a simple story. It is not a story about fraud, exactly, nor is it a story about a program that works perfectly as intended. It is something messier than either: a structure that creates subtle pressures nobody fully controls, in the service of a problem that is genuinely hard to solve.

The Mechanics of the Machine

Amazon launched Vine in 2007, initially as a vendor-only program. For years it sat quietly at the premium end of the market, accessible only to large brands with deep pockets. Then in 2023, Amazon restructured it and opened enrollment to smaller third-party sellers registered in Brand Registry. The cost dropped sharply. Today, according to Amazon’s own seller documentation [1], sellers can enroll one or two units for free, with fees climbing to $75 for up to ten units and $200 for up to thirty units per product listing.
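As a rough sketch, the fee schedule described above can be written as a small lookup. The function names are hypothetical, and the tier boundaries are taken only from the figures quoted in this article, so treat it as an illustration rather than Amazon's actual billing logic.

```python
def vine_enrollment_fee(units: int) -> int:
    """Return the per-listing enrollment fee in USD for a number of units.

    Tiers follow the figures quoted in the article (an assumption, not an
    official API): 1-2 units free, up to 10 units $75, up to 30 units $200.
    """
    if units < 1 or units > 30:
        raise ValueError("units must be between 1 and 30")
    if units <= 2:
        return 0
    if units <= 10:
        return 75
    return 200


def fee_per_unit(units: int) -> float:
    """Enrollment fee spread across the enrolled units (excludes product cost)."""
    return vine_enrollment_fee(units) / units


if __name__ == "__main__":
    for n in (2, 10, 30):
        print(n, vine_enrollment_fee(n), round(fee_per_unit(n), 2))
```

The per-unit figure leaves out the cost of the stock itself, which the seller also gives away.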

The pitch is straightforward. You have a new product. Nobody has reviewed it yet. Without reviews, Amazon’s algorithm treats you like a stranger at a party; you exist but you do not rank. So you pay to enroll, ship your units to an Amazon fulfillment center, and wait. On the other side of the transaction, Amazon has assembled an invitation-only network of its most prolific and well-regarded reviewers. These people are called Vine Voices.

Amazon [2] selects them based on the helpfulness scores their past reviews have earned from other customers. They are organized into tiers. Silver-tier members can request up to three products per day with a cap of $100 per item. Gold-tier members, those who have maintained a ninety-percent review rate across at least eighty items over six months, can request up to eight products daily with no price ceiling.
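The tier rules above can be sketched as data plus two checks. Everything here is a hypothetical model built from the numbers in this article. In particular, it assumes "eighty items" means items actually reviewed in the six-month window, and that the review rate is reviewed items divided by requested items.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Tier:
    name: str
    daily_requests: int
    price_cap_usd: Optional[float]  # None means no price ceiling


SILVER = Tier("Silver", daily_requests=3, price_cap_usd=100.0)
GOLD = Tier("Gold", daily_requests=8, price_cap_usd=None)


def tier_for(items_reviewed: int, items_requested: int) -> Tier:
    """Pick a tier from six-month activity (assumed interpretation)."""
    if items_requested == 0:
        return SILVER
    review_rate = items_reviewed / items_requested
    if items_reviewed >= 80 and review_rate >= 0.90:
        return GOLD
    return SILVER


def can_request(tier: Tier, item_price_usd: float, requests_today: int) -> bool:
    """True if one more request fits the tier's daily limit and price cap."""
    if requests_today >= tier.daily_requests:
        return False
    if tier.price_cap_usd is not None and item_price_usd > tier.price_cap_usd:
        return False
    return True
```

Under these assumptions, a member who reviewed 90 of 100 requested items would land in Gold, while one who reviewed 72 of 80 would stay in Silver despite a ninety-percent rate.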

The reviewer picks something they want. Amazon ships it. The reviewer uses it, writes about it, and publishes the review. Amazon explicitly prohibits sellers from contacting these reviewers, selecting specific individuals, or influencing the content in any way. The review appears on the product page alongside regular customer reviews, distinguished only by that green badge.

On the surface, this is a reasonable solution to a genuine market problem. New products need social proof to sell. Consumers need reviews to make informed decisions. The review gap for newly launched products is real. What Vine does is bridge that gap using a curated group of experienced evaluators rather than the more chaotic mechanisms that preceded it.

The History Behind the Badge

To understand why Vine matters, you have to understand what it replaced. Before 2016, Amazon permitted a parallel economy of incentivized reviews that operated directly between sellers and individual consumers. Sellers would recruit reviewers through Facebook groups, review clubs, and third-party broker sites, offer free or heavily discounted products, and receive reviews in return. The expectation of positivity, while never legally stated, was understood by both parties.

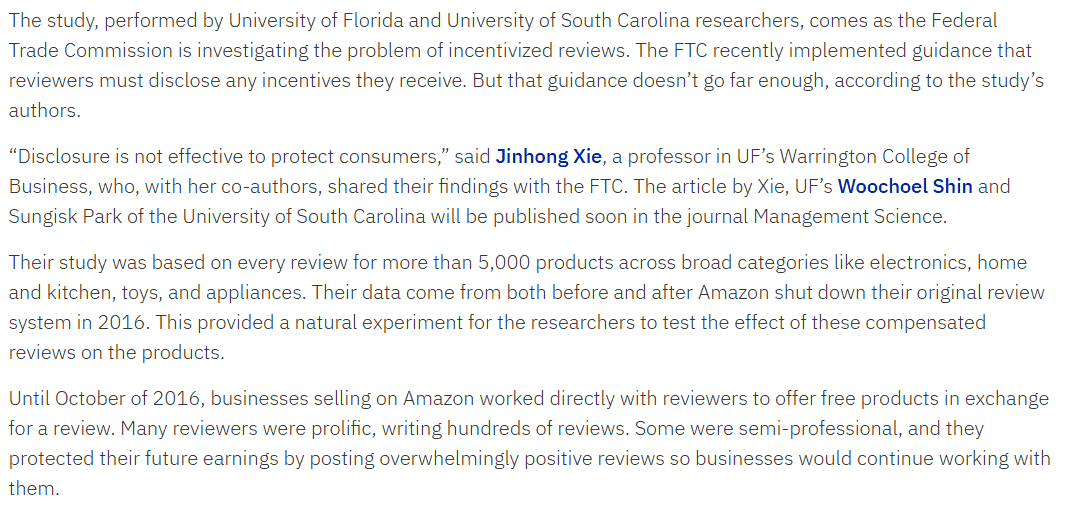

A landmark study by researchers from the University of Florida and the University of South Carolina, published in 2023, examined what happened when Amazon ended that system. The researchers found that incentivized reviews from the old seller-to-reviewer model were ‘systematically more positive than organic reviews’ for exactly the same products. The moment those reviews disappeared, product ratings fell, and customer satisfaction went up. Fewer one-star complaints followed.

The old system had a built-in corruption engine. Reviewers who depended on a steady supply of free products from sellers had every reason to stay positive. A harsh review could mean losing access to the next shipment. There was no formal contract. But the economic logic was unambiguous.

When Amazon introduced Vine as its sanctioned alternative, the crucial structural difference was the removal of that seller-reviewer relationship. The University of Florida researchers [3] concluded that Vine reviews showed no measurable rating inflation. The key was that Amazon, not the seller, controlled the matching. Amazon, as a marketplace with an interest in aggregate trust rather than any individual seller’s success, had no incentive to push particular reviewers toward positivity.

This is the architecture that Vine built its credibility on. The removal of the direct financial relationship between seller and reviewer is the entire argument for trusting a Vine review more than what came before.

What the Numbers Actually Say

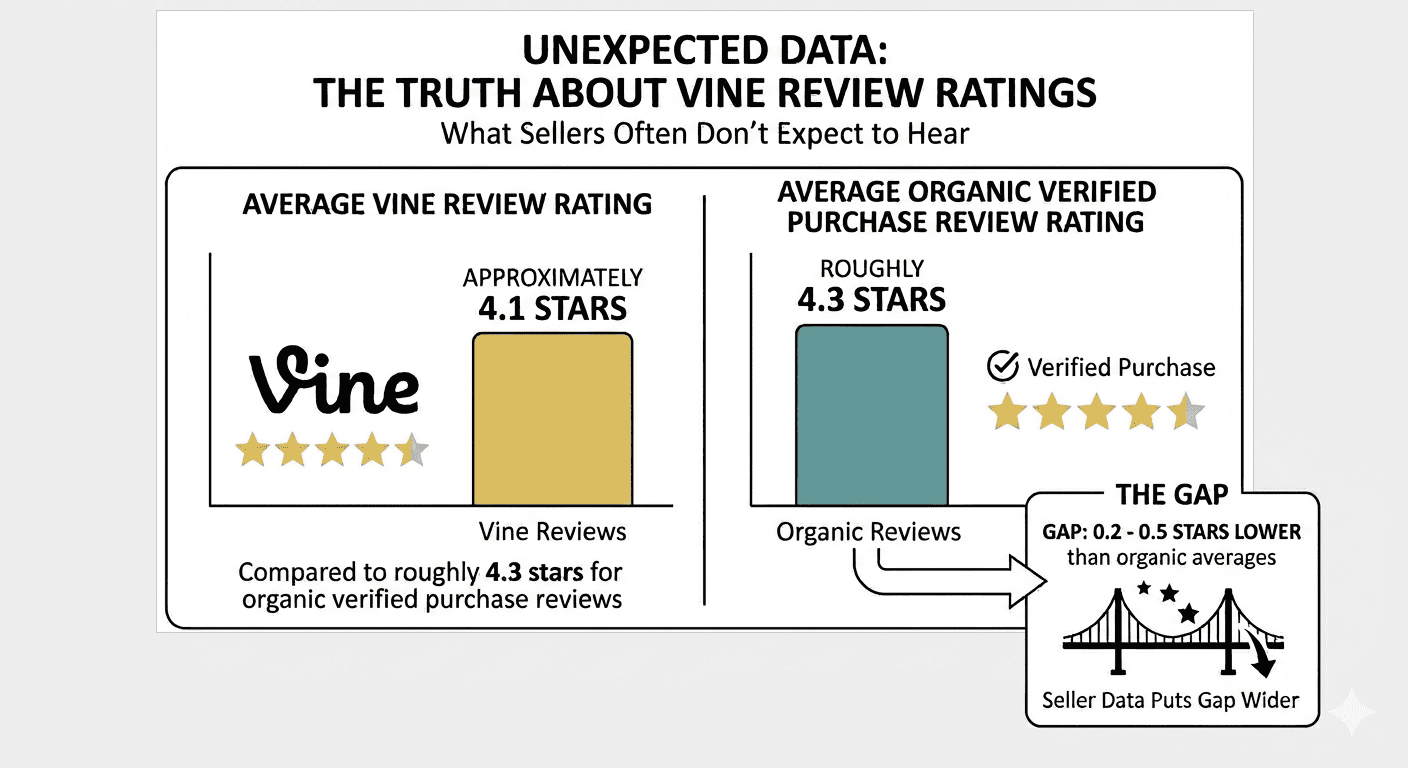

The data on Vine review ratings is genuinely interesting, and it does not say what many sellers expect to hear. According to goaura.com [4], the average Vine review rating sits at approximately 4.1 stars, compared to roughly 4.3 stars for organic verified purchase reviews. Some seller data puts the gap even wider, with Vine averages between 0.3 and 0.5 stars below organic ones.

If you expected free products to produce inflated enthusiasm, these numbers suggest the opposite. Vine reviewers are, on the whole, harder markers than regular customers. One longtime Vine member described his own approach on a popular car enthusiast forum in late 2023:

“A bunch of one or two sentence reviews doesn’t cut it. I am tough in my reviews. I probably average a 3.5 stars and I can be brutally honest in the text.”

The explanation for this is not complicated. Vine Voices are selected for exactly the kind of engagement that produces thoughtful, measured criticism. They are not first-time reviewers who bought something and loved it enough to sit down and write about it. They are people who review constantly, who have built a reputation for detailed analysis, and whose continued status in the program depends on the perceived quality of their output rather than the sentiment of it.

There is also something important happening at the structural level. Regular customers who feel mildly satisfied with a product rarely bother to write anything at all. It is the delighted and the furious who review. This skews organic review distributions toward the extremes. Vine reviewers are compelled to review everything they claim, regardless of how strongly they feel. They produce a fuller distribution, including the moderate-middle assessments that normal consumers skip.

This means Vine reviews may actually be a better picture of reality for average users, not because they are kinder, but because they include the reactions of people who were not moved to extraordinary enthusiasm or rage.
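A toy example makes the selection effect concrete. The twenty "true" reactions below are invented purely for illustration, and the rule that only one-star and five-star experiences get written up is a deliberate simplification of the argument above.

```python
# Hypothetical "true" star reactions of 20 buyers (illustrative numbers only).
TRUE_REACTIONS = [1, 1, 2, 3, 3, 3, 3, 4, 4, 4, 4, 4, 4, 4, 5, 5, 5, 5, 5, 5]


def organic_reviews(reactions):
    """Simplified organic behavior: only the furious (1) and delighted (5) write."""
    return [r for r in reactions if r in (1, 5)]


def vine_style_reviews(reactions):
    """Simplified Vine behavior: every claimed product gets a review."""
    return list(reactions)


def mean(stars):
    return sum(stars) / len(stars)


def middle_share(stars):
    """Fraction of reviews that are 2, 3, or 4 stars."""
    return sum(1 for s in stars if 2 <= s <= 4) / len(stars)


if __name__ == "__main__":
    organic = organic_reviews(TRUE_REACTIONS)
    vine = vine_style_reviews(TRUE_REACTIONS)
    print("organic:", len(organic), "reviews, mean", mean(organic))
    print("vine-style:", len(vine), "reviews, mean", mean(vine))
    print("middle share:", middle_share(organic), "vs", middle_share(vine))
```

With these made-up numbers, the extremes-only sample averages 4.0 stars with no middle ratings at all, while the review-everything sample averages 3.7 with sixty percent of ratings in the 2-to-4 range. The lower average reflects fuller coverage, not harsher judges.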

Gratitude Bias and the Elephant in the Room

None of this entirely resolves the psychological question. It is reasonable to ask whether receiving something for free changes how you evaluate it, regardless of the formal rules in place. This is what behavioral researchers call gratitude bias, and the honest answer is: yes, it probably does exist, but the evidence suggests it is smaller than you would expect.

A peer-reviewed study published in Information Systems Research [5] examined Vine reviews using a two-way fixed-effect model that controlled for both product characteristics and individual reviewer tendencies. The study found that incentivized reviews did show reduced impartiality in their numerical ratings, but compensated for that through significantly higher text quality, measured by discourse coherence and richness of detail.

The study’s authors made a pointed recommendation: rather than discarding incentivized reviews from overall star rating calculations entirely, platforms should consider labeling them clearly (which Amazon already does) and potentially excluding them from the aggregate numerical average while retaining them as text content. The text, they argued, was genuinely valuable. The star rating was the compromised element.
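The recommendation is easy to picture in code. The sketch below uses a hypothetical `Review` record and made-up sample data. It is not how Amazon computes star ratings, only an illustration of keeping incentivized text visible while leaving its stars out of the average.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Review:
    stars: int
    is_vine: bool
    text: str


def aggregate_rating(reviews: List[Review], include_vine: bool = True) -> Optional[float]:
    """Mean star rating, optionally leaving Vine ratings out of the average."""
    rated = [r.stars for r in reviews if include_vine or not r.is_vine]
    return sum(rated) / len(rated) if rated else None


def visible_texts(reviews: List[Review]) -> List[str]:
    """All review text stays on the page, labeled where incentivized."""
    return [("[Vine] " if r.is_vine else "") + r.text for r in reviews]


if __name__ == "__main__":
    sample = [
        Review(5, False, "Works as described."),
        Review(4, True, "Solid build, but the hinge squeaks."),
        Review(3, False, "Fine for the price."),
        Review(5, True, "Detailed teardown with photos."),
    ]
    print(aggregate_rating(sample))                      # all ratings
    print(aggregate_rating(sample, include_vine=False))  # organic ratings only
    print(visible_texts(sample))
```

One consequence worth noting: a listing with only Vine reviews would have no aggregate rating at all under this scheme, which is why the function returns `None` for an empty set.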

The RateBud analysis [6] of Vine versus verified purchase reviews found that Vine reviews average 4.1 stars compared to 4.0 stars for verified purchases, a gap of 0.1 stars. That is smaller than the noise in most product review systems. The same analysis noted that Vine reviewers were more likely to include detailed descriptions of product flaws than organic reviewers.

A current Vine reviewer who participates in forums described it this way on Quora:

‘At the same time, nowhere does it say a Vine reviewer has to say anything nice about a product or give it a particular rating, nothing bad will happen, nobody is going to be kicked out of the program, we are just supposed to say what we think.’

But the same commenter acknowledged the harder truth: ‘While reviewing a free product, you will subconsciously be more prone to forgive shortcomings.’

That subconscious quality is precisely the problem. It cannot be designed away with policy language. It lives below the level of deliberate choice.

The Seller Side of the Story

If you spend any time in Amazon’s Seller Central forums, you will find that sellers have a complicated relationship with Vine. There are grateful success stories, and there are bitter accounts of products damaged by reviews that seem to miss the point entirely.

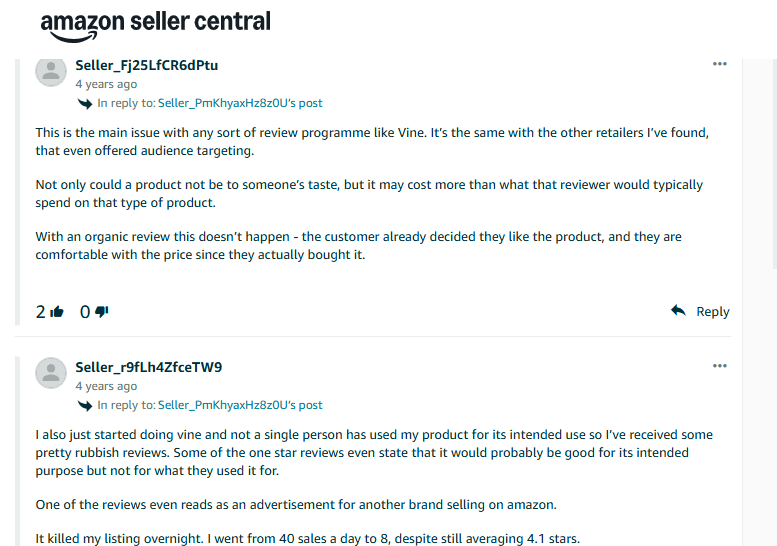

One seller in the UK described their experience this way on Seller Central [7]:

‘We’ve used Vine on a handful of new products to gain honest reviews; it really has been a disaster on most occasions. Even when their review states that the product is excellent, they still only give you a 4-star review. Apart from this, they usually admit they wouldn’t normally buy this type of product, so they are reviewing a product they already have no interest in.’

This points to a structural flaw that gets less attention than the bias question. Vine reviewers choose products from a catalog, and their choices are driven partly by curiosity and novelty rather than by genuine interest in the category. A reviewer who never uses scented candles might claim one because it is available and free. That reviewer’s evaluation of whether the candle smells good, whether it burns cleanly, and whether it is worth the price at retail is detached from the perspective of the actual target customer.

A seller forum post from earlier this year put it plainly:

‘A big reality: many Vine orders happen because the product is free, not because the person actually wants it. You end up sampling people who might never purchase the product with their own money, and their reviews aren’t always representative of your real buyer.’

Another seller described receiving a review of their candle from a Vine Voice who openly admitted she had no interest in candles, had never purchased one, and was reviewing it on behalf of someone who might enjoy it. She gave it three stars. The seller was paying enrollment fees and giving away stock for that.

There is a darker end of the spectrum, too. One seller described receiving a one-star review from a Vine member who, based on the delivery and review timestamps, had posted the review the same day the product arrived, suggesting no actual use of the product. Amazon’s position, after multiple support escalations: as long as the review does not violate community guidelines on content, it will not be removed.

This is the paradox at the heart of Vine. Amazon has built structural barriers to seller-reviewer collusion, and those barriers are meaningful. But the program’s architecture cannot prevent a reviewer from picking something randomly, using it carelessly, or reviewing it in bad faith, because the program has no mechanism to verify that a product was actually tested before the review was submitted.

The Tax Wrinkle That Changes the Calculus

There is one aspect of Vine that rarely appears in marketing materials about the program: Vine members in the United States are required to pay income tax on the fair market value of products they receive. Amazon issues a 1099-NEC form to any Vine Voice whose total product value exceeds $600 in a calendar year.

A Gold-tier member discussing his 2023 taxes on the BobIsTheOilGuy forum [8] described receiving $2,600 worth of products through Vine that year, which triggered a 1099 and a significant, unexpected tax bill. He expressed frustration that Amazon had not provided any mechanism for paying quarterly estimated taxes, since enrollment for most people happens partway through a tax year.

This is not a trivial matter. A Vine reviewer claiming thirty items with a combined retail value of $3,000 might face a tax liability of several hundred dollars, depending on their bracket. It fundamentally changes the calculus of whether the program truly represents ‘free’ products, and some argue it creates its own accountability pressure. You are less likely to treat a product frivolously if you have skin in the game, even if that skin is measured in tax liability rather than purchase price.
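The arithmetic behind "several hundred dollars" is simple enough to sketch. The marginal rates used below are illustrative assumptions, the helper names are hypothetical, and the calculation ignores state taxes and any other adjustments, so it is a back-of-the-envelope estimate rather than tax guidance.

```python
REPORTING_THRESHOLD_USD = 600  # per the article: a 1099-NEC is issued above this


def triggers_1099(total_product_value: float) -> bool:
    """True if the year's product value exceeds the reporting threshold."""
    return total_product_value > REPORTING_THRESHOLD_USD


def vine_tax_estimate(fair_market_value: float, marginal_rate: float) -> float:
    """Rough tax owed on Vine products: value times an assumed marginal rate."""
    if not 0 <= marginal_rate <= 1:
        raise ValueError("marginal_rate must be a fraction between 0 and 1")
    return round(fair_market_value * marginal_rate, 2)


if __name__ == "__main__":
    value = 3000  # the thirty-item example from the text
    for rate in (0.12, 0.22):  # assumed marginal rates, for illustration only
        print(f"{rate:.0%}: ${vine_tax_estimate(value, rate):,.2f}")
```

At an assumed 12 percent marginal rate the $3,000 example works out to $360, and at 22 percent to $660, which is where the "depending on their bracket" caveat comes from.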

Conversely, the reviewer who is deeply aware of their tax exposure may approach the catalog strategically, selecting the items most likely to generate useful reviews relative to their personal tax hit, which could introduce its own form of selection distortion.

What Regulators Are Watching

The Federal Trade Commission has been paying attention to the incentivized review ecosystem for several years. In August 2024, the FTC [9] finalized a sweeping new rule on consumer reviews and testimonials that took effect on October 21, 2024. The rule carries civil penalties of up to $51,744 per violation for knowing infractions.

The rule explicitly carves out space for incentivized reviews, provided the incentive is not conditioned on a particular sentiment. In other words, offering a product in exchange for honest feedback is permissible. Offering a product in exchange for a five-star review is not. This is the line that Vine, as designed, does not cross. Amazon has no mechanism by which the seller conveys a sentiment expectation to the reviewer. The Vine architecture was, in some sense, pre-compliant with the FTC’s framework.

But the FTC’s broader research on incentivized reviews is worth sitting with. The University of Florida study [10], which the FTC has cited in its rulemaking, showed that even disclosed incentivized reviews inflate ratings and boost sales compared to organic reviews. The disclosure, visible to anyone who looks, does not neutralize the effect on aggregate consumer behavior.

This raises a question the FTC has not fully answered: if the green badge does not actually correct for the psychological and structural distortions baked into incentivized reviews, is transparency enough? The researchers’ recommendation was not to ban disclosed incentivized reviews, but to consider excluding their numerical ratings from aggregate calculations. Amazon has not adopted that approach.

The Bigger Picture: What Reviews Are Actually For

I keep coming back to a basic question: what are reviews supposed to do? They exist, nominally, to give prospective buyers honest information from people who have used a product. The problem is that a perfect review system would require reviewers who match the exact profile of the intended buyer, who have used the product in the intended way and for long enough to assess durability, and who have no incentive to shade their assessment in either direction.

No such system exists or could exist at scale. Every review mechanism has its distortions. Organic verified purchase reviews skew toward the emotional extremes. Early adopter reviews may not reflect the product experience after manufacturing refinements. Reviews from superfans or habitual negative reviewers introduce personality noise. Amazon’s old seller-to-reviewer model created direct financial pressure toward positivity.

Vine eliminates the direct financial pressure. It introduces a different set of distortions: reviewer mismatch with target audience, the psychological reality of gratitude bias, the 30-day review window that may not capture long-term performance, and the incentive structure of tier maintenance that rewards review velocity.

What Vine produces is not unbiased information. What it produces is a specific kind of information with a known set of limitations, made visible through labeling. That is different from fraud. It is also different from the ideal that the green badge implicitly promises.

I found one of the more illuminating threads about this on Quora, where a current Vine member wrote at length about the experience. The post captures something the official program descriptions never quite acknowledge:

“It’s true that we are not required to be positive or knowledgeable: our reviews should be ‘honest and descriptive of our subjective experience’. Unfortunately the structure of the program promotes quantity over quality: We are given 30 days to submit a review, which doesn’t in some cases allow for a sufficient review. We are asked to be honest first and foremost, positive or negative. No one has ever been kicked out of the program for submitting negative reviews, yet some seem to believe in this myth. Bias. We are supposed to be honest, but the reality is that while reviewing a free product you will subconsciously be more prone to forgive shortcomings.”

That acknowledgment of subconscious bias, coming from inside the program, is more useful than anything Amazon’s marketing copy offers. The reviewer is not claiming Vine is corrupt. They are saying it is human. And human means biased, even when the intentions are good.

The Vine community on Reddit has discussed the declining desirability of the product selection over time, the tax burdens, the tier maintenance pressure, and the awkwardness of reviewing products you had no prior interest in. The picture that emerges from these discussions is of a program that attracts genuinely conscientious reviewers who are navigating a structure that is, at its edges, somewhat misaligned with the thing it claims to produce.

The Verdict: Useful, But Not What It Claims

Amazon Vine is not a fake review factory. The evidence does not support that conclusion. It is a program that produces more detailed, more coherent, and often harsher reviews than organic systems, staffed by people who take their reputation as evaluators seriously.

But it is also a program built on a transaction that is inherently compromising. The free product creates a relationship. The relationship creates a pressure, however subtle, that the system cannot fully eliminate through policy language. The badge tells you that the reviewer got the product free. It does not tell you what that means for how they felt about using it.

The more honest framing is this: Vine reviews are a useful signal, particularly for detailed, feature-level information about a product. They are more likely to catch genuine flaws than organic reviews, because Vine Voices are selected for that kind of scrutiny and have no financial relationship with the seller that might discourage candor. They are less reliable as a measure of whether a product is worth buying at full retail price for someone who fits the actual target customer profile, because many Vine reviewers are not that person and did not pay that price.

The star rating on a product with thirty Vine reviews and no organic reviews should be treated with specific skepticism. Not because Vine reviewers are dishonest. Because they are human, because they did not choose this product the way a buyer chooses it, and because the system that produced those ratings has known structural limitations that a number between one and five cannot convey.

The green badge is transparency. But transparency about the existence of an incentive does not tell you how much that incentive matters, and in this case, the research suggests it matters more than most shoppers know.

Sources

1. “Get customer reviews with Amazon Vine.” Sell on Amazon, sell.amazon.com/programs/vine. Accessed 8 Apr. 2026.

2. Amazon, www.amazon.com/vine/about. Accessed 8 Apr. 2026.

3. Hamilton, Eric. “Incentivized online reviews inflate product ratings, sales, even when disclosed.” University of Florida, 8 Dec. 2023, news.ufl.edu/2023/08/incentivized-online-reviews-deceive-consumers/. Accessed 8 Apr. 2026.

4. “Amazon Vine Program: ROI Math for Sellers (2026).” goaura.com/blog/amazon-vine-program. Accessed 10 Apr. 2026.

5. Information Systems Research, pubsonline.informs.org/doi/10.1287/isre.2022.1146. Accessed 10 Apr. 2026.

6. Spark. “Amazon Vine Program: Are Vine Reviews Trustworthy?” RateBud, 29 Dec. 2025, www.ratebud.ai/blog/amazon-vine-program-trustworthy-reviews. Accessed 10 Apr. 2026.

7. “Vine reviews has ruined our listings.” Amazon Seller Central forums, 29 Aug. 2022, sellercentral-europe.amazon.com/seller-forums/discussions/t/f06f06a989374def1c1999898aa51c2d. Accessed 10 Apr. 2026.

8. BobIsTheOilGuy forums, bobistheoilguy.com/forums/threads/amazon-vine-reviewer.375402/. Accessed 10 Apr. 2026.

9. Federal Trade Commission, www.ftc.gov/business-guidance/resources/consumer-reviews-testimonials-rule-questions-answers. Accessed 10 Apr. 2026.

10. Hamilton, Eric. “Incentivized online reviews inflate product ratings, sales, even when disclosed.” University of Florida, 8 Dec. 2023, news.ufl.edu/2023/08/incentivized-online-reviews-deceive-consumers/. Accessed 10 Apr. 2026.