OpenAI’s public documentation explains that API access depends on usage tier and verification status. Model availability varies by account history and billing engagement. OpenAI’s official help page [1] outlines this clearly.

Rate limits scale according to usage level. The more a developer spends and the longer the billing history, the higher the throughput permitted.

On paper, this structure is straightforward. Systems require stability. Computing is expensive. Abuse must be mitigated. Scaling access based on usage history is a common infrastructure strategy.

But AI is not just infrastructure. It is increasingly cognitive infrastructure. That shift changes how access policies are perceived.

The First Barrier Developers Notice

When OpenAI releases a new model, developer excitement moves faster than formal documentation. Announcements ripple across GitHub repositories, Reddit threads, Discord servers, and X timelines within minutes. Benchmark screenshots appear almost immediately. Early testers publish latency comparisons. Others begin probing edge cases in code generation, reasoning, and prompt sensitivity.

This pattern has repeated with nearly every major release, but the launch of OpenAI o1 [2] in 2024 offered a particularly clear illustration. Public descriptions framed the model as optimized for complex reasoning. The implication was that multi-step logic, structured analysis, and advanced code synthesis would improve in measurable ways.

For developers building debugging tools, automated research assistants, or logic-heavy enterprise applications, that promise was not abstract. It represented potential competitive differentiation.

Yet alongside excitement came a quieter realization. Access was not universal.

Developers quickly discovered that early availability of the reasoning-optimized model was tied to usage tiers. Accounts in lower tiers could see references to the model but could not invoke it. In practice, the moment of peak curiosity coincided with structural restriction.
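To make that situation concrete, here is a minimal sketch of how a client might cope when a preferred model is visible but cannot be invoked. Everything in it is an assumption for illustration: `ModelAccessDenied`, `first_available`, the `call_model` callable, and the model names are hypothetical stand-ins, not part of any OpenAI SDK.

```python
from typing import Callable, List, Sequence, Tuple


class ModelAccessDenied(Exception):
    """Hypothetical error: the account's tier cannot invoke the requested model."""


def first_available(
    models: Sequence[str],
    call_model: Callable[[str, str], str],
    prompt: str,
) -> Tuple[str, str]:
    """Try models in preference order and return (model_used, response).

    `call_model` is a caller-supplied function (hypothetical here) that
    raises ModelAccessDenied when the tier does not permit the model.
    """
    denied: List[str] = []
    for model in models:
        try:
            return model, call_model(model, prompt)
        except ModelAccessDenied:
            # Record the refusal and fall through to the next preference.
            denied.append(model)
    raise ModelAccessDenied("no accessible model among: " + ", ".join(denied))
```

A team in this position could pass its preferred model first and a model it already has access to second, then log which one actually answered. That keeps a prototype running, but it does not solve the benchmarking problem: the fallback model is not the one being evaluated.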

In the Reddit community r/OpenAI [3], one user summarized their experience bluntly. After reviewing API pricing and attempting access, they reported being denied and concluded that prior spending at a significant threshold was required before the model became available. The tone of the thread was not incendiary. It was bewildered.

A similar dynamic unfolded in r/ChatGPTCoding [4]. A developer building with new features explained that certain functionality required Tier 2 or Tier 3 status, yet their team remained on Tier 1. Without access, they could not benchmark the feature, validate performance claims, or integrate it into their stack. Their frustration centered on testing constraints rather than pricing itself.

Across dozens of such discussions, a pattern became visible. Most developers were not alleging deception. They were trying to reverse-engineer the rules.

Some believed tier upgrades occurred automatically once spending thresholds were crossed. Others speculated that identity verification status might influence eligibility. A few suggested there could be internal review cycles or cooldown periods that were not publicly described. The official help documentation confirms the existence of usage tiers and rate limits. It does not provide granular insight into evaluation cadence, internal scoring logic, or precise trigger mechanics.

In highly technical ecosystems, developers are accustomed to deterministic systems. APIs either return data or return errors. Version numbers map to documented changes. Rate limits are often expressed numerically and transparently. When access decisions appear opaque, the experience feels qualitatively different from ordinary system constraints.

This is the first barrier many developers notice. It is not the cost of tokens. It is not even the existence of limits. It is the gap between announcement-level excitement and tier-dependent availability.

The psychological effect of that gap is subtle but important. At the moment when a new model is framed as transformative, some developers are positioned to experiment immediately, while others must wait or increase spending. In competitive environments, timing matters. Early experimentation allows teams to refine integration strategies, discover performance nuances, and publish comparative results.

When access stratification aligns with release timing, tier status becomes more than a throughput setting. It becomes a gate to participation in the initial wave of innovation.

In governance systems, ambiguity has consequences. If developers understand exactly how to progress, constraints feel procedural. If progression appears uncertain or partially discretionary, speculation fills the vacuum. Over time, speculation can erode confidence even when the underlying system is operationally justified.

The first barrier, then, is informational as much as financial. Developers want to know the rules of the game. When those rules are only partially visible, access control begins to feel less like infrastructure management and more like hidden architecture shaping opportunity.

Why Tiering Exists in the First Place

To understand why tiered access exists, it is necessary to begin with the economics and physics of large-scale AI systems. Advanced language models are not lightweight software features. They run on dense clusters of specialized hardware. Every inference request consumes GPU memory, processing cycles, and network bandwidth. When multiplied across millions of users and billions of tokens per day, the aggregate demand becomes enormous.

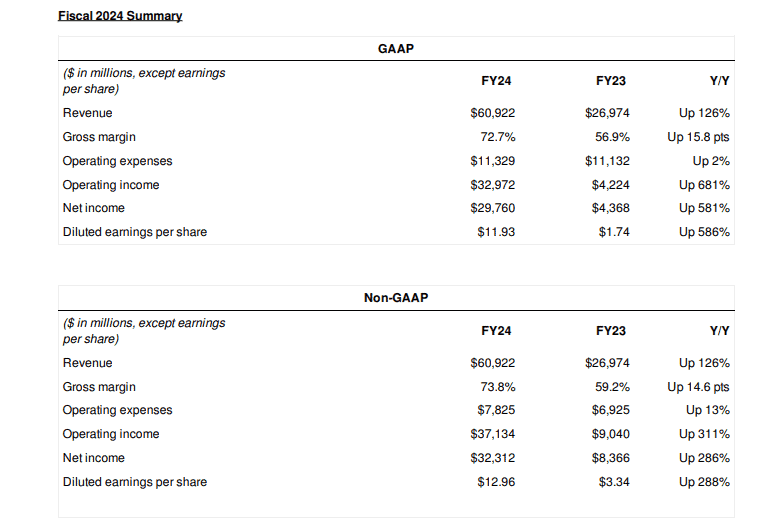

The surge in demand for AI compute is well documented. The Financial Times [5] has reported extensively on the global race for AI chips, particularly those produced by NVIDIA. In coverage of AI infrastructure growth, the publication described unprecedented demand driven by generative AI workloads and hyperscale data center expansion.

NVIDIA’s own financial disclosures [6] reinforce this scale. In its fiscal year 2024 earnings report, the company announced record data center revenue of $47.5 billion, a dramatic increase largely attributed to AI demand.

This revenue surge reflects not only model training but also inference workloads. Training large models is expensive, but serving them in production at a global scale can be equally demanding. Each user prompt triggers a sequence of computations across GPUs. As usage grows, inference becomes a persistent cost center rather than a one-time training expense.

For companies like OpenAI, which deliver models via API endpoints rather than static downloads, infrastructure load is continuous. Unlike open-weight models that can be run locally, API-based models centralize compute responsibility within the provider’s data centers.

In that environment, rate limits are not decorative policy tools. They are operational safeguards. Without them, sudden spikes in demand could degrade performance, increase latency, or cause service interruptions. Tiered throughput ensures that capacity is distributed predictably and that infrastructure planning remains feasible.
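One common way to implement this kind of safeguard is a token bucket. The sketch below is illustrative only: nothing in the sources says OpenAI uses this algorithm, and the per-tier requests-per-minute figures in `HYPOTHETICAL_TIER_RPM` are placeholders, not published limits.

```python
import time
from typing import Optional

# Hypothetical requests-per-minute limits; NOT OpenAI's published values.
HYPOTHETICAL_TIER_RPM = {"tier_1": 60, "tier_2": 600}


class TokenBucket:
    """Allows bursts up to `capacity` and refills at `refill_per_sec`."""

    def __init__(self, capacity: float, refill_per_sec: float,
                 start: Optional[float] = None) -> None:
        self.capacity = capacity
        self.refill_per_sec = refill_per_sec
        self.tokens = capacity  # start full so an initial burst is allowed
        self.last_refill = time.monotonic() if start is None else start

    def allow(self, cost: float = 1.0, now: Optional[float] = None) -> bool:
        """Return True and deduct `cost` if enough tokens are available."""
        now = time.monotonic() if now is None else now
        elapsed = max(0.0, now - self.last_refill)
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_sec)
        self.last_refill = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


def bucket_for_tier(tier: str, start: Optional[float] = None) -> TokenBucket:
    """Build a bucket whose burst size and refill rate follow the tier's RPM."""
    rpm = HYPOTHETICAL_TIER_RPM[tier]
    return TokenBucket(capacity=rpm, refill_per_sec=rpm / 60.0, start=start)
```

The design choice worth noticing is that the limit is a pair of numbers, burst capacity and refill rate. A higher tier in this sketch simply gets a larger bucket that refills faster, which is why throughput can be raised per account without changing the mechanism.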

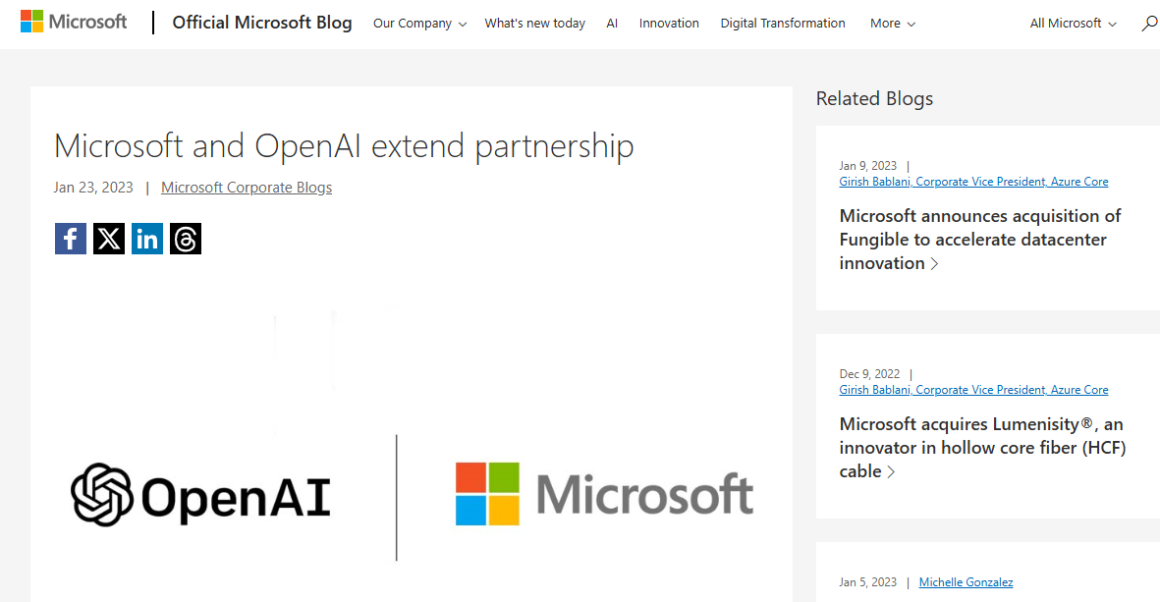

The economic pressure is further amplified by partnership structures. OpenAI relies heavily on cloud infrastructure provided through its partnership with Microsoft [7] and its Azure platform. Microsoft has publicly discussed the capital intensity of building AI supercomputing infrastructure to support OpenAI workloads.

Large-scale GPU clusters require billions of dollars in capital expenditure. Data center expansion requires land, energy contracts, cooling systems, and long-term hardware procurement agreements. In such a capital-intensive environment, unlimited or unmetered access is economically unrealistic.

Tiering, therefore, addresses a basic infrastructure truth. Compute is finite and expensive.

Yet infrastructure is only part of the explanation.

Structured access has also been defended as a safety strategy. The Centre for the Governance of AI [8] has written about structured API distribution as a compromise between open-sourcing powerful models and restricting them entirely. Their analysis argues that API-mediated access allows model providers to monitor usage patterns, enforce safeguards, and intervene when harmful applications emerge.

The central idea is that control over distribution enables oversight. When models are accessed via centralized APIs, providers can apply content filters, detect anomalous usage patterns, throttle suspicious activity, and suspend accounts that violate policies.

OpenAI’s own usage policies [9] outline prohibited activities and enforcement mechanisms. The policy page describes monitoring and potential suspension for misuse.

From this perspective, tiering operates as part of a graduated trust framework. New accounts begin with limited throughput and narrower access. As they establish billing history, identity verification, and compliant usage patterns, they gain expanded privileges. This mirrors established security practices in other domains such as cloud computing and financial services.
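A graduated trust framework of this kind can be sketched as a simple rule table. The version below is a thought experiment, not a description of OpenAI's actual logic: the tier names, spend and age thresholds in `HYPOTHETICAL_TIERS`, and the assumption that verification and standing act as hard gates are all invented for illustration.

```python
from dataclasses import dataclass

# Hypothetical rules, highest tier first:
# (tier name, minimum cumulative spend in USD, minimum account age in days).
HYPOTHETICAL_TIERS = [
    ("tier_3", 1000.0, 30),
    ("tier_2", 100.0, 7),
    ("tier_1", 5.0, 0),
]


@dataclass(frozen=True)
class Account:
    total_spend_usd: float
    account_age_days: int
    verified: bool
    in_good_standing: bool


def resolve_tier(account: Account) -> str:
    """Return the highest tier whose spend and age requirements are both met."""
    if not account.in_good_standing:
        return "suspended"  # assumed: policy violations override everything
    if not account.verified:
        return "free"  # assumed: verification gates all paid tiers
    for name, min_spend, min_age in HYPOTHETICAL_TIERS:
        if account.total_spend_usd >= min_spend and account.account_age_days >= min_age:
            return name
    return "free"
```

Under these made-up rules, an account that spends heavily in its first three days still lands in `tier_1`, because the age requirement for higher tiers has not been met. Rules of that shape are exactly what developers say they cannot see from the outside.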

The rationale rests on two pillars.

First, compute allocation must be managed to maintain system stability and cost sustainability.

Second, misuse risk must be mitigated through controlled rollout and monitored access.

In policy terms, the logic is coherent.

However, governance is not solely about coherence. It is about legitimacy. Systems that are rational from an engineering standpoint can still generate friction if their criteria are not transparent or if their impact feels uneven.

Developers often accept constraints when those constraints are clearly explained and predictably applied. They are less comfortable when the criteria appear implicit or partially discretionary.

Tiering, therefore, sits at the intersection of infrastructure economics and governance psychology. It reflects real operational pressures and real safety concerns. At the same time, it shapes opportunity within a rapidly growing ecosystem.

Understanding why tiering exists does not resolve debates about its fairness. It does, however, ground the conversation in the material realities of AI deployment rather than abstract speculation.

The policy logic holds. What remains open is how such systems feel to those inside them.

Competitive Acceleration and Capital

As I continued reviewing discussions across Reddit, Discord communities, and independent developer blogs, a pattern became increasingly clear. Developers affiliated with venture-backed startups rarely expressed prolonged frustration about usage tiers. Independent developers and bootstrapped founders did.

The explanation may be straightforward. Venture-funded startups often allocate meaningful budgets to infrastructure from the earliest stages of product development. In the context of API-driven AI, this can mean thousands of dollars in usage during prototyping alone. Higher spending accelerates tier progression. Higher tiers typically bring expanded rate limits and, in some cases, earlier access to newly released models.

From a purely operational standpoint, this is predictable. Usage tiers often scale with consumption history. Accounts demonstrating sustained demand are prioritized in capacity planning. However, in competitive product markets, timing matters. Early access can translate directly into a feature advantage.

If one company can experiment with an advanced reasoning model while another remains constrained to a lower tier, the first can integrate and refine capabilities earlier. That difference may appear small over weeks. Over months, it compounds.

This dynamic mirrors capital advantages that exist across technology sectors. Firms with greater funding typically access better infrastructure, talent, and distribution channels. Generative AI, however, influences product differentiation at a deeper functional level. It is not merely a backend efficiency tool. It shapes user experience directly.

The capital infrastructure underpinning this ecosystem is also highly concentrated. OpenAI operates in close partnership with Microsoft, which has committed billions of dollars in investment and cloud infrastructure support. Microsoft’s Azure data centers host large-scale AI workloads, enabling OpenAI’s API services to operate globally.

Regulators have begun examining the implications of such concentrated investment structures. Reuters [10] reported in January 2024 that the U.S. Federal Trade Commission was probing investments in major AI firms, including OpenAI and its partnerships.

The FTC inquiry [11] focused broadly on whether strategic investments by dominant technology companies could entrench power in emerging AI markets. Tiered API access itself has not been the subject of formal regulatory action. Yet it operates within this wider landscape of scrutiny around infrastructure control and competitive leverage.

From a developer perspective, the issue is less about antitrust theory and more about practical acceleration. When access scales with spending velocity, well-funded teams move faster. They test earlier. They publish sooner. They refine prompts and workflows before others have the opportunity.

In high-growth technology sectors, even modest timing advantages can shape market narratives. Early adopters of new AI models often publish benchmark comparisons, blog posts, and integration guides that attract customers and investors. Visibility compounds alongside technical capability.

None of this implies malicious design. It reflects structural incentives. Systems that scale access with consumption will naturally favor actors capable of higher early consumption.

The Academic Constraint

Academic researchers face a related but distinct challenge. Evaluating model bias, hallucination frequency, safety vulnerabilities, and reasoning consistency requires large sample sizes. A handful of prompts cannot reveal systemic patterns. Meaningful empirical studies often demand thousands or millions of inference calls.

Lower usage tiers impose rate limits that may restrict sustained high-volume experimentation. For independent scholars without institutional grants or industry partnerships, scaling usage can become financially prohibitive.

A paper published on arXiv [12] discusses what its authors describe as an accountability paradox in AI platform governance. The paper argues that restricted access to advanced systems can inadvertently undermine independent oversight. It suggests that when meaningful evaluation requires high-throughput access that only well-funded actors can afford, public accountability becomes uneven.

The authors do not single out one company. Their argument applies broadly to API-mediated AI ecosystems. Still, the implications are relevant. If academic auditing depends on spending thresholds or selective program admission, then scrutiny may concentrate among a limited set of institutions.

OpenAI does operate research partnerships and grant programs. It has collaborated with universities and policy institutes to study safety and alignment issues. These programs can provide expanded access beyond standard tiers. However, participation is necessarily selective. Independent scholars without formal affiliation often rely solely on public API pathways.

In that context, throughput constraints become more than a budget issue. They influence the scope of inquiry. A researcher studying hallucination patterns across legal queries, for example, may require thousands of prompts to achieve statistical confidence. If rate limits restrict the sampling speed or cost ceilings limit total calls, the study design must adapt.
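The arithmetic behind that constraint can be sketched with the standard normal-approximation formula for estimating a proportion, n = z²·p(1−p)/e². The function names and the rate limit used in the usage note are assumptions for illustration, and the formula presumes independently sampled prompts.

```python
import math


def required_sample_size(margin_of_error: float,
                         expected_rate: float = 0.5,
                         z: float = 1.96) -> int:
    """Prompts needed to estimate a rate (e.g. hallucination frequency).

    Uses n = z^2 * p * (1 - p) / e^2; z = 1.96 corresponds to roughly 95%
    confidence, and p = 0.5 is the most conservative assumption.
    """
    if not 0 < margin_of_error < 1:
        raise ValueError("margin_of_error must be between 0 and 1")
    if not 0 <= expected_rate <= 1:
        raise ValueError("expected_rate must be between 0 and 1")
    n = (z ** 2) * expected_rate * (1 - expected_rate) / (margin_of_error ** 2)
    return math.ceil(n)


def minimum_minutes(total_requests: int, requests_per_minute: int) -> float:
    """Lower bound on wall-clock time if every minute's quota is fully used."""
    if requests_per_minute <= 0:
        raise ValueError("requests_per_minute must be positive")
    return total_requests / requests_per_minute
```

For a ±5 percentage point margin, `required_sample_size(0.05)` gives 385 prompts per condition. At a hypothetical limit of 5 requests per minute, `minimum_minutes(385, 5)` is 77 minutes for a single condition, before multiplying by the number of models, prompt variants, and repeated runs a real audit would compare. Tightening the margin raises the sample size quadratically.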

This does not imply deliberate obstruction. It reflects structural reality. API-mediated governance centralizes control over compute. Centralization enables safety enforcement and infrastructure stability. It can also constrain decentralized auditing.

From my vantage point as someone researching this issue, the tension is structural rather than conspiratorial. Tiering aligns with capital allocation and safety monitoring. It also shapes who can experiment at scale, who can publish early findings, and who can critique effectively.

In ecosystems where knowledge and capability compound quickly, those differences matter.

The Psychology of Tiered Trust

Beyond economics and regulation lies a psychological layer that is harder to quantify but equally influential.

Across discussions on Reddit and independent developer forums, tier status is often described in emotional terms rather than operational ones. Developers speak about being “stuck” on a lower tier as if they are being evaluated by an invisible scoring system. The language shifts from technical limitation to perceived legitimacy.

In traditional cloud services, rate limits are rarely interpreted as judgment. They are understood as infrastructure constraints. In AI ecosystems, the perception changes because capability differences are more visible. Higher tiers may gain access to more advanced reasoning models or expanded throughput. Lower tiers may remain limited to earlier or lighter versions.

When access to what feels like intellectual amplification is tiered, the emotional impact differs from simple bandwidth allocation. The constraint does not feel like storage or compute. It feels closer to restricted cognitive leverage.

Repeated phrasing appears across discussions. Developers ask, “What exactly do I need to do to move up?” The question is rarely confrontational. It reflects a search for clarity. They are not demanding special treatment. They are seeking predictable rules.

Governance research consistently shows that procedural transparency increases perceived fairness. The Stanford Social Innovation Review has examined governance legitimacy in hybrid and mission-driven organizations, emphasizing that clarity in decision criteria strengthens institutional trust. When criteria remain opaque, speculation fills the gap.

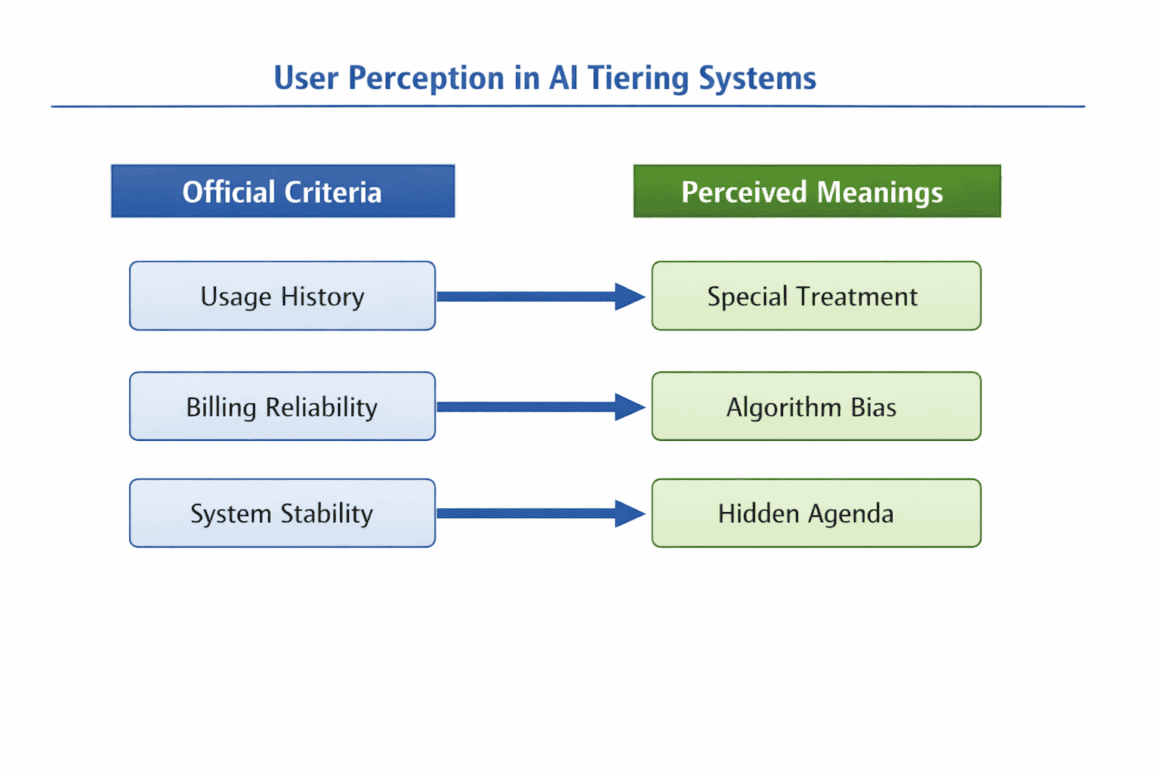

In AI platforms such as OpenAI, tiering is typically described in terms of usage history, billing reliability, and system stability. Yet if the thresholds and progression logic are not clearly communicated, users may infer additional meanings. Human psychology tends to attribute intent to systems, especially when those systems mediate access to high-value tools.

This does not imply intentional opacity. It highlights a predictable trust dynamic. When access shapes opportunity, clarity becomes as important as capacity.

Global Access and Regional Questions

As AI adoption spreads globally, tiered access intersects with regional economic disparities in subtle but meaningful ways.

OpenAI’s documentation does not explicitly differentiate by geography. Rate limits and spending thresholds are generally standardized. However, economic context varies dramatically across markets. Developers operating in lower-income regions may face greater difficulty accumulating usage spending quickly. Currency conversion costs, fluctuating exchange rates, and local payment system barriers can compound the challenge.

In absolute dollar terms, a spending threshold may appear modest in one market and substantial in another. If tier progression depends on spending velocity, economic disparities indirectly influence access speed.
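A short calculation shows how the same threshold can weigh differently. The function name is hypothetical, and the figures in the usage note are placeholders chosen for round numbers, not statistics about any real market or any real tier.

```python
def threshold_as_income_share(threshold_usd: float, monthly_income_usd: float) -> float:
    """Express a fixed USD spending threshold as a fraction of monthly income."""
    if monthly_income_usd <= 0:
        raise ValueError("monthly_income_usd must be positive")
    return threshold_usd / monthly_income_usd
```

With a placeholder threshold of 50 USD, a developer earning the equivalent of 5,000 USD a month would be committing 1% of monthly income, while one earning the equivalent of 500 USD would be committing 10%. The threshold is identical on paper and ten times heavier in practice.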

The structural effect is incremental rather than dramatic. No explicit exclusion exists. Yet progression may occur faster for developers operating in stronger currency environments or within venture-backed ecosystems concentrated in major technology hubs.

If generative AI becomes foundational infrastructure for entrepreneurship, research, and education, these differences could compound over time. Infrastructure pricing models historically do not adjust for global purchasing power disparities unless specifically designed to do so.

This is not unique to AI. Cloud computing, SaaS platforms, and developer tooling have long reflected similar dynamics. What makes generative AI distinct is the degree to which model capability directly influences product differentiation and knowledge work.

When intellectual tooling scales unevenly with economic context, the long-term implications extend beyond individual startups. They shape who experiments earliest, who publishes first, and who builds with the most advanced capabilities.

Again, this is a structural observation rather than an accusation. Tiering mechanisms are rational from an infrastructure management perspective. At the same time, they intersect with psychology, governance legitimacy, and global inequality in ways that merit careful attention as AI systems become embedded in economic and educational foundations worldwide.

Comparison to Alternative Models and the Future of Tiered Governance

The debate over tiered access becomes clearer when viewed alongside alternative distribution philosophies in the AI ecosystem.

Some developers and researchers advocate for open-weight releases, arguing that transparency and decentralization strengthen innovation and independent oversight. Others favor strict API-mediated control, emphasizing safety, monitoring, and responsible deployment.

Meta AI provides a useful point of contrast. Its Llama [13] models are released under a partial open-weight framework. Open-weight access allows researchers and companies to download model weights and run them locally or on their own infrastructure. This approach enables deep experimentation, architecture inspection, and independent evaluation without centralized gatekeeping. It lowers certain access barriers, particularly for well-resourced research institutions with their own compute clusters.

However, open distribution also introduces misuse risks. Once weights are released, control diminishes. Content moderation layers and monitoring tools can be bypassed. The governance burden shifts from centralized provider oversight to distributed responsibility.

By contrast, OpenAI has consistently favored structured API distribution over full weight release for its most advanced models. This approach prioritizes controlled rollout, centralized monitoring, and usage enforcement. It reflects a governance philosophy rooted in managed access rather than decentralized autonomy.

Tiered access sits squarely within that structured framework. It is not an anomaly. It is an extension of a broader philosophy that treats advanced AI models as infrastructure requiring oversight.

The governance question, then, is not which philosophy is inherently correct. Open-weight and structured API models each carry tradeoffs. The question is whether implementation balances safety imperatives with equitable participation.

The tension between safety and democratization underlies nearly every debate in AI governance.

Releasing powerful models widely can accelerate innovation. It can empower researchers in underserved regions. It can reduce dependency on centralized providers. At the same time, broad release increases the potential for misuse, from automated disinformation to malicious code generation.

Restricting access through APIs reduces some risks. Monitoring tools can flag suspicious activity. Usage policies can be enforced. Deployment can be staged and reversible. Yet restriction also concentrates control. It conditions participation on compliance with centralized rules and, in the case of tiering, on usage history and financial engagement.

Tiered access represents a middle path. It does not fully withhold advanced models. It conditions access on demonstrated engagement and infrastructure allocation logic.

Critics argue that economic conditioning may reinforce structural advantages already present in venture-backed ecosystems and high-income markets. Defenders argue that graduated trust frameworks protect society from reckless deployment and systemic abuse.

Both arguments contain elements of truth.

The design challenge lies in ensuring that tier systems preserve safety without unnecessarily privileging capital or obscuring advancement criteria.

After reviewing documentation, policy analysis, and extensive developer discussions, several potential improvements emerge that could strengthen legitimacy without undermining infrastructure stability.

Publishing explicit numeric thresholds for tier progression would reduce confusion. If developers knew precisely what spending or usage milestones triggered upgrade evaluation, speculation would decline.

Providing estimated timelines for automatic review cycles would increase predictability. Uncertainty about when evaluation occurs contributes to frustration more than the thresholds themselves.

Clarifying whether account verification, compliance history, or additional identity checks influence advancement would eliminate ambiguity around non-financial criteria.

Creating dedicated academic or nonprofit access tiers could strengthen independent research capacity. High-volume sampling is essential for auditing bias, hallucination patterns, and safety vulnerabilities.

Releasing periodic transparency reports summarizing tier distribution metrics would signal commitment to fairness. Even aggregate data on how many accounts exist at each tier would enhance institutional trust.

None of these reforms requires abandoning structured access. They refine its governance.

Developer communities often function as early warning systems. Their questions surface friction before formal regulatory scrutiny begins. When developers repeatedly ask how progression works, they are signaling a transparency gap rather than demanding entitlement.

Tiered access is not inherently unjust. It reflects real infrastructure costs and real safety considerations in an era when AI capability is expanding rapidly. At the same time, access architecture shapes opportunity. As advanced models become embedded in education, entrepreneurship, and knowledge work, the design of tier systems will influence who experiments, who scales, and who audits.

The future of AI governance will not hinge solely on model accuracy or compute capacity. It will also depend on how transparently and equitably access frameworks evolve.

Structured distribution may be necessary. Transparent implementation will determine whether it feels legitimate.

Sources

1. OpenAI, help.openai.com/en/articles/10362446-api-model-availability-by-usage-tier-and-verification-status. Accessed 20 Feb. 2026.

2. OpenAI, openai.com/o1/. Accessed 20 Feb. 2026.

3. Reddit, www.reddit.com/r/OpenAI/. Accessed 24 Feb. 2026.

4. Reddit, www.reddit.com/r/ChatGPTCoding/. Accessed 24 Feb. 2026.

5. “Nvidia’s AI supremacy is a weapon that cuts both ways.” Financial Times, 20 Nov. 2025, www.ft.com/content/bd6ff6cd-3f27-49d7-930b-f3d83c0cb6c8. Accessed 21 Feb. 2026.

6. NVIDIA, nvidianews.nvidia.com/_gallery/download_pdf/65d669a33d63329bbf62672a/. Accessed 21 Feb. 2026.

7. The Official Microsoft Blog, 23 Jan. 2023, blogs.microsoft.com/blog/2023/01/23/microsoftandopenaiextendpartnership/. Accessed 21 Feb. 2026.

8. “Sharing Powerful AI Models.” GovAI, www.governance.ai/analysis/sharing-powerful-ai-models. Accessed 21 Feb. 2026.

9. OpenAI, openai.com/en-GB/policies/usage-policies/. Accessed 21 Feb. 2026.

10. Reuters, www.reuters.com/technology/ftc-launches-inquiry-into-generative-ai-investments-partnerships-2024-01-25/. Accessed 21 Feb. 2026.

11. “FTC Launches Inquiry into Generative AI Investments and Partnerships.” 24 Jan. 2024, www.ftc.gov/news-events/news/press-releases/2024/01/ftc-launches-inquiry-generative-ai-investments-partnerships. Accessed 24 Feb. 2026.

12. “The Accountability Paradox: How Platform API Restrictions Undermine AI Transparency Mandates.” 14 Jan. 2026, arxiv.org/abs/2505.11577. Accessed 21 Feb. 2026.

13. “Industry Leading, Open-Source AI | Llama.” www.llama.com/. Accessed 21 Feb. 2026.