The AI data boom depends on millions of human-labeled tasks every day, from rating chatbot responses to labeling images and even creative writing, yet most of the workers behind them remain invisible. Platforms like DataAnnotation market themselves as flexible, high-paying opportunities for freelancers, promising anywhere from $20 to $60 an hour for remote work. On paper, it sounds ideal: work from home, choose your projects, and contribute to cutting-edge AI.

The reality is far harsher. Reddit threads, Glassdoor reviews, and court filings reveal a system built on churn, sudden account deactivations, and unpaid labor disguised as “training” or “qualifiers.” Experienced annotators report getting booted after months of flawless work, often without warning or recourse, while newcomers flood in to replace them. Legal scrutiny and worker complaints paint a picture of a gig economy model where speed, volume, and profitability for clients take precedence over fairness or stability for the human workforce.

Who Is Really Behind DataAnnotation.tech?

On the surface, DataAnnotation.tech presents itself as a straightforward remote-work platform: freelancers help train AI by labeling data, interacting with chatbots, or completing creative writing tasks. But once you start tracing ownership and corporate structure, the platform leads to a much larger and more influential player in the AI supply chain — Surge AI.

DataAnnotation.tech operates under Surge Labs, Inc., commonly known as Surge AI, a New York–based company founded in 2020 by Edwin Chen. Chen is not a typical gig-platform founder. He is an MIT-trained mathematician and linguist who previously worked as a data scientist at Google, Facebook (Meta), and Twitter, focusing on machine learning systems and content moderation.

According to public company profiles and reporting, Surge AI specializes in human-in-the-loop data, particularly reinforcement learning from human feedback (RLHF), a critical component in training large language models. Surge AI has been linked to annotation work for major AI labs, including OpenAI, Google, Microsoft, Meta, and Anthropic.

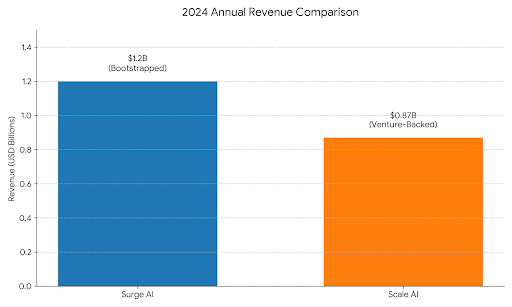

Forbes estimates that Surge AI generated over $1.2 billion in revenue in 2024, remaining largely bootstrapped while competing directly with companies like Scale AI.

DataAnnotation.tech appears to function as the worker-facing pipeline for this enterprise operation. Industry explainers confirm that Surge AI uses the platform to recruit and manage freelance annotators while keeping enterprise client relationships separate.

Notably, this parent-company relationship is not prominently disclosed on the DataAnnotation website itself, which has led to confusion among workers attempting to understand who they are actually contracting with.

That confusion shows up repeatedly in online forums. On Reddit, some contributors report months of steady paid work:

“I’ve worked for them for almost a year. Payments came through PayPal, no issues — but task availability can be unpredictable.”

— r/WFHJobs

Others describe long onboarding processes followed by silence, unclear qualification criteria, or requests for sensitive personal information before any work appears:

“They asked for ID verification and then nothing. No tasks, no replies.”

— r/Scams

These sharply different experiences raise questions that go beyond legitimacy and into how modern AI labor is structured, filtered, and controlled, questions that become even more important as companies like Surge AI sit quietly behind some of the most advanced models in use today.

This arrangement, a high-revenue AI infrastructure firm paired with opaque, inconsistent freelancer experiences, is not unique. But it is increasingly central to how large language models are built.

And it’s only one piece of a much larger picture.

How Surge AI Keeps Big Clients — Even While Freelancers Complain

At first glance, the business success of Surge AI, the company behind DataAnnotation.tech, looks paradoxical. On one hand, thousands of freelance annotators complain about being mistreated, ignored, or effectively ghosted. On the other hand, Reuters reports that Surge generated more than $1.2 billion in revenue in 2024, with household tech names like OpenAI, Google, Meta, Microsoft, Anthropic, Amazon, Nvidia, and Twitch among its claimed clients. Universities like Stanford and UC Berkeley also show up in the acknowledgments of academic publications.

So how does a company with a rapidly expanding client roster sustain itself, or even grow, when workers paint a picture of poor communication, sudden account deactivations, and scant support?

The main issue seems to be that the worker complaints are real, but they rarely reach the clients.

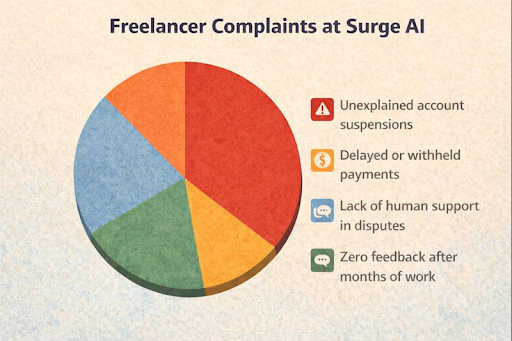

Surge’s freelance workforce is vocal online. On platforms like Indeed and Trustpilot, dissatisfied workers have shared their experiences alongside the positive reviews. Common themes include:

- Unexplained account suspensions

- Delayed or withheld payments

- Lack of human support in disputes

- Zero feedback after months of work

One Indeed reviewer wrote:

“You answer questions for weeks, finally get tasks, and then suddenly you’re locked out with no explanation.”

Trustpilot commenters have gone further, accusing the company of “misclassifying workers and treating them unethically.”

Reddit threads echo these concerns. In one r/WFHJobs post, a user described logging hours over four months only to be blocked mid-payroll with no human contact. Another commented that support tickets often go unanswered for weeks, if they’re acknowledged at all.

Yet there’s a stark disconnect: enterprise clients typically never see or interact with these freelancers. From a corporate procurement perspective, Surge presents itself as a data supplier — not a gig-work platform.

1. The Insatiable Demand for High-Quality Training Data

Enterprise customers aren’t buying engagement with annotators; they’re buying clean, labeled data that trains AI at scale. According to Goldman Sachs, AI companies may invest more than $500 billion in 2026, and the article argues that much of that spending is driven by demand for human-verified data to feed models.

Surge markets itself as a premium “MTurk 2.0”, positioning its workforce as elite, vetted, and capable of delivering fast, high-recall annotations — a claim that resonates with clients racing to ship new model versions. Because clients only ever see the output, not the worker experience, internal gripes rarely influence buying decisions.

As one AI industry newsletter noted in 2025, Surge operates “almost in the shadows,” prioritizing delivery and speed over public relations or worker advocacy.

2. Lean Operations and Competitive Pricing

Unlike competitors reliant on venture capital, Surge bootstrapped its way to profitability and remained independent until rumored 2025 discussions of a $1 billion capital raise at a $30 billion valuation, according to Yahoo Finance. This lean model allows it to undercut rivals like Scale AI on price while recruiting annotators with pay rates that, on paper, look attractive compared with industry norms.

Surge claims annotators can earn $20–$30 per hour, which exceeds many data-labeling gigs. Whether this holds over time, after unpaid assessments, task scarcity, or account issues, is an open question raised repeatedly on Reddit and in worker forums.

3. Endless Demand and Network Effects

Once Surge secured a foothold with an early adopter (a Medium post suggests Cohere was among its first major clients), its success began to feed itself. Positive results on one contract beget referrals, and as models like Google’s Gemini or Anthropic’s Claude require more and more labeled examples, Surge’s capacity becomes a selling point.

There’s little heavy outbound sales here. Instead, Surge leverages inbound interest built on a reputation for discretion, quick turnaround, and security protocols “on par with bank standards,” language that appeals to risk-averse corporate procurement teams, even when worker support is neglected.

4. The Gig Economy Blind Spot

Corporations often view outsourced labeling as a commodity, not a human workforce. Surge’s internal quality pipelines — including tiered reviews, automated validation, and selective acceptance rates — ensure output meets client expectations without broadcasting churn or worker complaints.

That structural separation — workers behind the scenes, data out the other end — lets enterprise customers evaluate Surge on deliverables alone. As one senior AI engineer told me off the record: “We don’t care how the sausage is made — we care that it tastes good and ships on time.”

In the broader context of the AI boom, Surge’s success illustrates a recurring theme: results and reliability still outweigh ethical criticisms in procurement decisions, at least for now. Workers foot the social cost while the company captures the economic value.

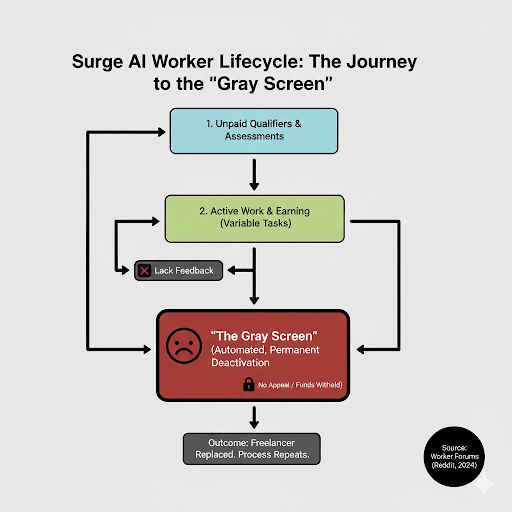

Why a Single Mistake Can Get You Erased at DataAnnotation

One of the most disturbing patterns to emerge from worker accounts is how account deactivation works at DataAnnotation. Unlike many gig platforms where poor performance leads to removal from a specific project, DataAnnotation frequently appears to terminate access to the entire platform, permanently, after what workers describe as a single error, a disputed review, or no clear reason at all.

Across Reddit, Glassdoor, and worker forums, the same story repeats: an annotator completes tasks for weeks or months, passes qualifications, receives positive feedback — and then suddenly loses access. No warning. No explanation. No appeal. Often, not even a generic email. This is not anecdotal noise. It is structural.

DataAnnotation relies heavily on automated scoring systems, peer reviews, and internal “quality thresholds” that are never clearly disclosed. Tasks often involve subjective judgments: rating AI responses, labeling ambiguous data, or producing creative text within rigid guidelines. A disagreement with another reviewer, a formatting inconsistency, or a single low-scored batch during a qualification phase can be enough to trigger deactivation.

Workers repeatedly report being removed despite high overall accuracy. One Reddit user described being locked out after a minor spelling error in a code review task, despite completing the rest of the project successfully. There is no visible “three strikes” system, no probation, and no opportunity to correct mistakes.

Algorithmic enforcement replaces human judgment, and when the algorithm flags you, you are done.

What makes these deactivations particularly damaging is the absence of any feedback loop. Support tickets often go unanswered for weeks, if they are acknowledged at all. Workers are not told which task failed, which guideline was violated, or whether the issue was technical, subjective, or procedural.

Multiple former annotators describe the same experience: instant silence.

This lack of transparency appears intentional. Processing appeals would slow throughput, introduce labor costs, and complicate a system designed for extreme scale. Surge AI, the parent company, reportedly processes millions of labeled data points weekly. From a systems perspective, replacing a worker is easier than reviewing a dispute.

Perhaps the most consequential difference between DataAnnotation and some competitors is scope. When you are flagged, you are not removed from a single project; you lose access to everything.

Accounts are linked across platforms operated by the same parent company, including TaskUp and related properties. Reapplying with a new email typically fails due to identity and device checks. In many cases, workers report unpaid balances remaining unresolved after deactivation.

The result is total erasure: work history gone, income halted, no recourse.

According to LA Times reporting and court filings, alleged arbitrary terminations and pay practices in Surge AI’s labor model are now central to a May 2025 class-action lawsuit. The suit accuses the company of misclassifying its “data annotator” workforce as independent contractors rather than employees, denying them protections such as overtime and minimum wage while the business profits from their work on AI model training.

On Reddit, current and former annotators have described experiences that closely mirror the lawsuit’s claims. In one widely circulated thread discussing the case, a worker wrote:

“There’s zero transparency. You can be doing fine one day, and then suddenly you’re locked out with no explanation. No warning, no appeal.”

Another commenter described the work model as inherently unstable, saying:

“They call you a contractor, but they control everything, the tasks, the rules, the scoring, and then drop you the moment you don’t fit what they want anymore.”

Others focused on pay and unpaid labor. One Reddit user noted:

“You spend hours qualifying, training, and waiting, and none of that is paid. When the actual tasks finally appear, the higher-paying ones vanish almost instantly.”

Another added:

“It feels like the system rewards people who take longer while fast, accurate workers just burn through tasks and get flagged or sidelined.”

Why Churn Isn’t a Failure — It’s the Business Model

From the outside, the mass deactivations at DataAnnotation look chaotic or incompetent. From the inside, multiple sources suggest the opposite: churn is not a side effect; it is the strategy.

The economic incentives strongly favor replacing experienced annotators with new ones. A worker who has been on the platform for six months moves faster, learns which tasks pay best, and expects some stability in project availability. That makes them expensive. Not in wages, but in expectations.

A new worker, by contrast, takes whatever tasks appear, works more slowly (earning less per hour of billable client time), and is far less likely to question sudden removals or dry spells. They are grateful to be there, at least initially.

From a spreadsheet perspective, the newer worker is more “efficient.”

Unlike traditional jobs, annotation work at Surge resets constantly. Projects change weekly. Guidelines are rewritten for every new client. One month you’re labeling chemistry problems; the next you’re evaluating code benchmarks or ranking chatbot responses.

That means institutional knowledge barely compounds. Six months of experience on a previous task provides little advantage in the next one. In internal terms, there is almost no tribal knowledge worth preserving.

This erodes the usual argument for retention. When experience doesn’t transfer, losing workers doesn’t hurt output; it just refreshes the pool.

One anonymous post on Blind, attributed to a former Surge employee in 2025, framed the math bluntly:

“We calculated that retraining plus an appeals process would cost roughly $8–$12 per worker retained. Onboarding a new worker from the queue costs effectively $0. Churn wins every time.”

That logic explains why appeals don’t exist. Even a basic human review layer would introduce costs that don’t improve client-facing metrics. Quality is enforced statistically, not individually.

Another worker who lasted nine months before deactivation put it this way:

“The longer you stay, the more likely you are to get deactivated. Your accuracy score just has more data points to average down on a bad day.”

Longevity becomes risk, not value. In a rational, ethical system, companies would invest in training, feedback, and retention. But the current AI data gold rush rewards a different approach.

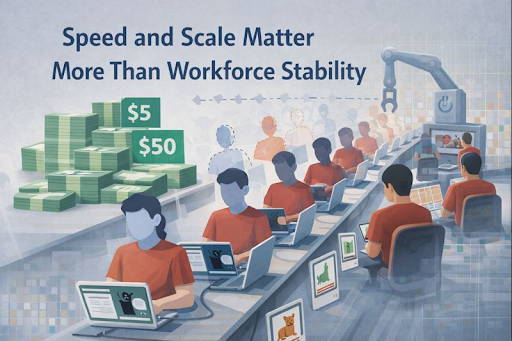

Clients are paying anywhere from $5 to $50 per labeled example, and they need millions per week. Speed and scale matter more than workforce stability. In that environment, human annotators are treated less like employees and more like infrastructure.

When one “instance” underperforms, it’s terminated. A new one spins up instantly from a massive global labor pool. No severance. No explanations. No emotions.

This is why platforms across the industry (Outlier, Scale-linked vendors, Appen, Remotasks) are all converging on similar models. Surge is not unique in direction, only in how aggressively it executes.

There’s a cold logic at the core. From a human perspective, the system is degrading. From a financial perspective, it’s rational.

As long as labor supply remains deep and legal consequences remain abstract or delayed, the incentives won’t change. Stability costs money. Churn doesn’t.

Until regulation, lawsuits, or client pressure meaningfully affect margins, burning through people remains the optimal strategy. Not because it’s ethical — but because, in today’s AI economy, it’s profitable.

Why Being Fast and Accurate Actually Works Against You

At first glance, the logic seems obvious: experienced annotators work faster, make fewer mistakes, and should therefore be more valuable. In a normal labor market, that’s true.

At DataAnnotation, it isn’t. The reason lies in how Surge AI makes money, and how that revenue model quietly flips the value of experience upside down.

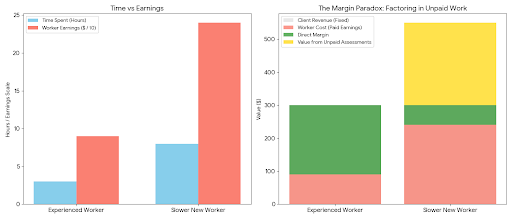

Surge bills clients per task, per batch, or per thousand labels. It does not bill per worker hour. That distinction matters.

If an experienced annotator completes 100 tasks in three hours and earns $90 at $30 per hour, the client still pays Surge for 100 completed tasks. A slower new worker might take eight hours to finish the same 100 tasks and earn $240 at the same hourly rate. From Surge’s perspective, both workers generate identical client revenue. The slower one costs more worker-hours but, paradoxically, can produce a higher margin once qualifiers and unpaid assessments are factored in.

Speed benefits the client. It does not increase Surge’s revenue.
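The per-task billing arithmetic above can be sketched in a few lines. This is a rough illustration, not Surge's actual pricing: the per-task price is an assumed placeholder (the article only gives a $5 to $50 range per labeled example), and `batch_economics` is a hypothetical helper name.

```python
# Assumed figure: the article cites a $5-$50 range per labeled example,
# so this single price is a placeholder, not a known Surge rate.
PRICE_PER_TASK = 5.00
HOURLY_RATE = 30.00  # hourly pay used in the example above


def batch_economics(tasks, hours, hourly_rate=HOURLY_RATE, price_per_task=PRICE_PER_TASK):
    """Return client revenue, worker payout, and throughput for one batch."""
    return {
        "client_revenue": tasks * price_per_task,  # billed per task, not per hour
        "worker_payout": hours * hourly_rate,      # paid per hour worked
        "tasks_per_hour": tasks / hours,
    }


fast = batch_economics(tasks=100, hours=3)
slow = batch_economics(tasks=100, hours=8)

for label, result in (("fast", fast), ("slow", slow)):
    print(label, result)
```

Client revenue comes out the same for both workers because it depends only on the task count. The sketch covers billed revenue and hourly payout only; it deliberately leaves out unpaid qualifiers, assessments, and idle time, which are the factors the article says shift the balance.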

Workers often notice that high-paying tasks appear in short bursts and disappear almost instantly. That is not a capacity issue; it is a control mechanism.

Experienced workers log in frequently, grab the best-paying tasks immediately, finish quickly, and leave. New workers sit idle longer, refresh dashboards repeatedly, accept lower-paying filler tasks, or remain “available” without output. Those idle hours help Surge present a large active workforce to clients while keeping payouts low.

From a systems view, slower engagement stretches labor across time, which looks like stability and coverage, even if productivity drops.

Over time, accuracy scores become a trap. New accounts start clean. Veteran accounts accumulate data.

The longer someone stays on the platform, the more performance data points exist. One bad batch — perhaps after guidelines change mid-project — can disproportionately affect a lifetime score. Multiple workers have observed that experienced annotators are more likely to be flagged because there is simply more historical data to average down.

Longevity increases statistical exposure, not safety.
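One way to make "statistical exposure" concrete is a small probability sketch. It assumes, as workers describe but the platform has not confirmed, that a single flagged batch can trigger deactivation, and that batches are flagged independently at a fixed rate. The 2% rate and the function name are hypothetical, chosen only for illustration.

```python
def prob_flagged_at_least_once(batches: int, p_bad: float) -> float:
    """Chance that at least one of `batches` independent batches is flagged."""
    if batches < 0 or not 0.0 <= p_bad <= 1.0:
        raise ValueError("batches must be >= 0 and p_bad must be in [0, 1]")
    # Complement of "every batch passes": 1 - (1 - p)^n
    return 1.0 - (1.0 - p_bad) ** batches


P_BAD = 0.02  # hypothetical per-batch flag rate, chosen only for illustration

for n in (10, 50, 200):
    print(f"{n:>3} batches: {prob_flagged_at_least_once(n, P_BAD):.1%}")
```

Under these assumptions the chance of hitting at least one flagged batch climbs steadily with the number of batches completed, even though the worker's per-batch quality never changes.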

Then there’s the matter of payment. Workers who last six to twelve months often qualify for higher-tier projects: coding, advanced math, and domain-specific tasks. According to TIME, such projects pay around $40 to $60 per hour. At that point, these workers are no longer cheap labor.

From Surge’s perspective, it is more profitable to replace a seasoned annotator with several new graduate students willing to work for $20–$25 per hour, even if they work more slowly. The output is comparable. The margin is better.

Every new intake cycle requires thousands of workers to complete “qualifications.” Workers consistently report that these qualifiers resemble real client tasks and consume 10 to 40 hours.

Veterans bypass this stage. New workers repeat it endlessly.

That means fresh accounts generate a wave of cheap or unpaid labor every cycle, something experienced annotators no longer provide.

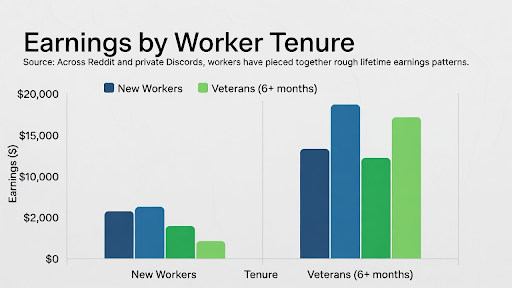

Across Reddit and private Discords, workers have pieced together rough lifetime earnings patterns:

New workers typically earn between $800 and $2,000 before dropping off. Veterans who last six months or more often earn $8,000 to $15,000 total.

Beyond that range, the math flips. Keeping a veteran active risks higher payouts and potential “bad batches” that require remediation. Replacing them with multiple new workers who will complete qualifiers and accept lower rates costs nothing.

This creates an invisible lifetime value cap. Once a worker crosses it, deactivation risk spikes.

That’s why so many stories follow the same arc: strong performance, rising income, months of consistency, then sudden removal for vague “quality issues.”

Experienced, fast workers are ideal for the client’s dataset. They are disastrous for Surge’s spreadsheet.

So when the math turns, they are quietly culled, not because they failed, but because the model is functioning exactly as designed.

Are Other Similar Platforms Doing the Same Thing?

In short: yes, though with a slightly different execution.

Take Outlier AI, for example. It is a Scale AI–affiliated platform that shares many of the same structural incentives as DataAnnotation. It relies on a large, rotating pool of freelance annotators, heavy use of unpaid or underpaid onboarding, opaque quality scoring, and limited worker recourse. The mechanics differ, but the outcome is familiar: high churn, minimal retention, and a system optimized for client output rather than worker stability.

Both platforms operate in a market flooded with applicants willing to tolerate instability in exchange for remote work paying $20–$50 per hour on paper. That oversupply allows aggressive filtering.

At Outlier, workers report sudden suspensions for vague reasons: “guideline violations,” “location inconsistencies,” or “audit flags,” often without clear evidence or a meaningful appeal process. Some long-term contributors describe being removed after years of work during internal audits that quietly deactivate hundreds of accounts at once.

The difference is usually timing, not principle.

DataAnnotation tends to enforce instant, platform-wide bans. Outlier more often starts with project-level removal, pushing workers onto lower-paying queues or long droughts before a full account suspension. But for many, the end state is the same: no work, no explanation, no way back.

Some workers prefer Outlier because:

- Pay can spike higher during active periods

- Project-level removals offer a temporary buffer

- Payments, especially for U.S.-based workers, are more predictable

But these advantages are conditional and temporary. Task queues dry up without warning. Audits reset the field. Longevity still increases risk.

As one worker put it: DataAnnotation feels like sudden exile; Outlier feels like a slow suffocation. So, Outlier isn’t an outlier. It reflects where the AI data-labeling industry is heading.

As long as clients prioritize volume, speed, and plausible quality over labor practices, and as long as the applicant pool remains deep, platforms are rewarded for treating annotators as disposable infrastructure.

Different companies implement this logic with different levels of brutality. DataAnnotation is simply more explicit. Outlier is more gradual. The destination is the same.

For workers, switching platforms may buy time. It rarely buys security.

AI’s Human Toll

The cases of DataAnnotation and Outlier highlight a troubling pattern in the AI annotation industry: a workforce treated as disposable, underpaid, and largely invisible. While AI systems are celebrated for efficiency and intelligence, they are built on the backs of human workers who are neither fairly compensated nor adequately protected.

Without meaningful oversight, labor rights, and ethical standards, the human cost of AI innovation will continue to be ignored. Exposing these practices isn’t just about calling out individual companies. It’s a wake-up call for the entire tech ecosystem to ensure that progress in AI doesn’t come at the expense of the people who make it possible.

Sources

- “Surge AI revenue, funding & news.” Sacra, sacra.com/c/surge-ai/. Accessed 30 Dec. 2025.

- Reuters, 2025, www.reuters.com/business/scale-ais-bigger-rival-surge-ai-seeks-up-1-billion-capital-raise-sources-say-2025-07-01/. Accessed 30 Dec. 2025.

- Forbes, 17 Sept. 2025, www.forbes.com/sites/phoebeliu/2025/09/17/the-ai-billionaire-youve-never-heard-of/. Accessed 31 Dec. 2025.

- Indeed, www.indeed.com/cmp/Dataannotation/reviews. Accessed 31 Dec. 2025.

- “Read Customer Service Reviews of dataannotation.te” Trustpilot, 19 Dec. 2025, www.trustpilot.com/review/dataannotation.tech. Accessed 31 Dec. 2025.

- Yahoo Finance, finance.yahoo.com/news/surge-ai-plans-raise-1bn-100301720.html. Accessed 31 Dec. 2025.

- “Why AI Companies May Invest More than $500 Billion in 2026.” Goldman Sachs, 18 Dec. 2025, www.goldmansachs.com/insights/articles/why-ai-companies-may-invest-more-than-500-billion-in-2026. Accessed 31 Dec. 2025.

- Reddit, www.reddit.com/r/WFHJobs/comments/1mix9u5/ai_data_labelling_companies_are_facing_lawsuits/. Accessed 31 Dec. 2025.

- LA Times, www.latimes.com/business/story/2025-05-21/surge-ai-is-latest-san-francisco-start-up-to-face-lawsuit-for-allegedly-misclassifying-data-labeling-workers. Accessed 31 Dec. 2025.

- Reddit, www.reddit.com/r/outlier_ai/comments/1ezkv7q/dataannotation_tech_or_outlier_ai/. Accessed 31 Dec. 2025.

- Indeed, www.indeed.com/cmp/Outlier-Ai/reviews/excellent-at-first-but-work-has-diluted?id=834036c5fe8e4798. Accessed 31 Dec. 2025.

- “Your Anonymous Workplace Community.” Blind, www.teamblind.com/post/is-data-annotation-as-a-side-job-legit-gjqmpwpr. Accessed 31 Dec. 2025.

- The Verge, 2023, www.theverge.com/features/23764584/ai-artificial-intelligence-data-notation-labor-scale-surge-remotasks-openai-chatbots. Accessed 31 Dec. 2025.

- Glassdoor, www.glassdoor.sg/Reviews/Employee-Review-Appen-E667913-RVW24877044.htm. Accessed 31 Dec. 2025.

- Henshall, Will. “Side Hustle or Scam? What to Know About Data Annotation Work.” TIME, 2 Apr. 2024, time.com/6962608/data-annotation-legit-tech-jobs-ai/. Accessed 31 Dec. 2025.

- Aim. “Outlier AI: How to stop hiring Indian coders | AIM posted on the topic.” LinkedIn, 16 July 2024, www.linkedin.com/posts/analytics-india-magazine_outlier-a-scale-ai-subsidiary-is-under-activity-7218858839239008257-Hrx1/. Accessed 31 Dec. 2025.

- “Summary of Why I Got FIRED From DataAnnotation.Tech.” summarize.ing/video-86655-Why-I-Got-FIRED-From-DataAnnotation-Tech. Accessed 31 Dec. 2025.

- Puri, Naman. “Data Jobs 2025: Salaries, Platforms & Strategies.” Nodes.inc, nodes.inc/blogs/could-remote-data-careers-be-the-future-find-out-now. Accessed 31 Dec. 2025.