
Every time you open Google Search, watch a YouTube recommendation, or interact with an AI-enhanced feature, there’s an invisible workforce at work behind the scenes. Known inside the tech industry as Trust & Safety (T&S), this team’s job is simple in description but monumental in scale: protect users from harmful content, abusive behavior, scams, and anything that violates a platform’s policies. On one hand, trust and safety is part policy, part psychology, part detective work: human judgment where machine judgment fails. On the other hand, it’s about maintaining trust in platforms that billions of people use daily.

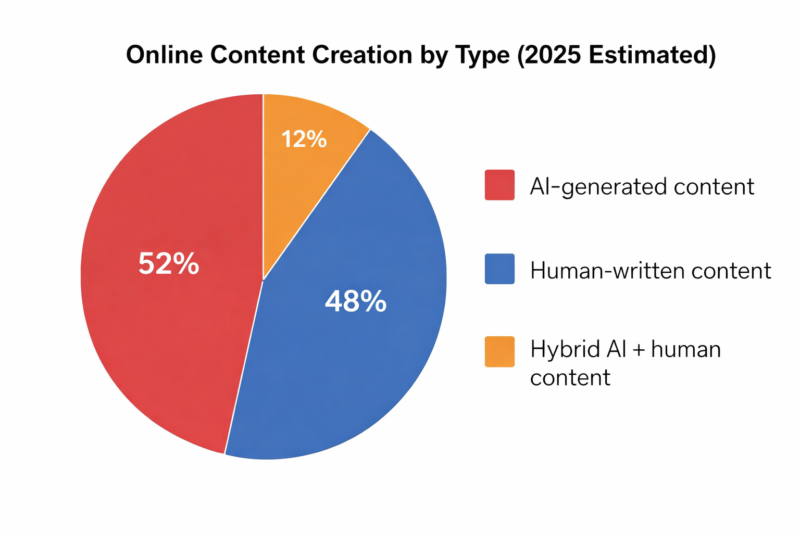

Historically, Google and other tech giants invested heavily in these teams throughout the 2010s and early 2020s, hiring thousands of moderators and policy experts to review flagged material and refine automated systems. But that era appears to be receding. In 2025, layoffs and strategic shifts have seen trust and safety workforces shrink just as artificial intelligence is generating content at previously unimaginable volumes: more than 50% of articles online, according to AI Base [1]. Platforms are now leaning on automated systems to handle the flood, leaving fewer humans to oversee nuanced decisions.

Industry observers have described this moment as a quiet retreat of trust and safety: a shift from human-centered protection to compliance-focused automation. Many trust and safety professionals say the quiet exists not because there’s nothing to say, but because speaking out can jeopardize careers and personal safety. Regardless, the result is unmistakable: the teams entrusted with keeping platforms safe are shrinking even as the volume and complexity of content, especially AI-generated content, explodes.

Who Runs Google’s Trust & Safety

At Google, trust and safety isn’t controlled by a single individual. It’s a global leadership network embedded in policy, product, and enforcement functions across the company:

- Laurie Richardson (Vice President of Trust & Safety, Google): Named on Google’s own transparency site as a key leader in trust and safety strategy and policy enforcement across products.

- David Graff (Vice President of Global Policy and Standards): Oversees large parts of how Google develops, implements, and evolves content policies that T&S teams enforce.

- Amanda Storey & Mary Phelan (Directors of Trust & Safety, Google Safety Engineering Centre): Lead on-the-ground enforcement, risk compliance, and content safety work at Google’s engineering centres.

In addition, specialised leaders direct local and product-specific safety efforts. In India, for example, Sachin Kakkar (Engineering Site Director, Privacy, Safety & Security) and Ram Papatla (Senior Director, Trust & Safety, APAC) oversee regional safety operations.

These executives work alongside product, legal, and engineering teams to build moderation tools, draft and interpret policy, and respond to emerging risks such as AI‑generated content. Despite this structure, many users on Reddit express frustration about the opacity of this leadership:

“Hard to know who even answers for safety — emails bounce, support is slow, and there’s no clear point of contact.” — Comment from a developer awaiting a response from Google’s Trust & Safety team.

While these leaders define policy and strategy, the operational reality involves hundreds of analysts, engineers, and policy specialists working under their guidance to enforce rules and balance automation with human judgment.

Google’s Trust & Safety in Numbers

As artificial intelligence fuels an explosion of online content, one of the most pressing questions facing Google is this: are the humans meant to keep the internet safe shrinking in number faster than the problem is growing? To answer that, we need to look at two intersecting trends: changes in the Trust & Safety workforce, and the sheer scale of AI-generated content flooding the web.

In the early 2020s, Google’s Trust & Safety operations included not only full-time teams embedded in Search, YouTube, and AI product groups, but also a large contingent of contractors hired to rate, review, and refine outputs from emerging tools like the Gemini chatbot and AI Overviews in Search. These “super raters” played a crucial role in shaping AI systems’ responses and detecting harmful outputs.

That landscape is shifting. Financial Express [2] reports that more than 200 contract workers involved in AI evaluation, people who helped “train the trainers,” were laid off without warning, with many suspecting that their own ratings had been used to help automate their roles.

On Reddit [3], workers who had been doing this behind-the-scenes rating shared stark accounts:

“More than 200 contractors who worked on evaluating and improving Google’s AI products have been laid off without warning in at least two rounds of layoffs… used to teach chatbots and other AI products, including Google’s search summaries feature.”

These layoffs reflect more than HR reshuffling: they suggest a shift away from human‑centred oversight toward automated systems that promise to scale faster. While Google has not publicly disclosed precise Trust & Safety headcount changes, layoffs in related AI‑rating functions are consistent with broader workforce reductions and “efficiency measures” that have affected multiple teams at the company.

What these trends mean in practice is that fewer humans are now tasked with supervising increasingly sophisticated AI systems, even as those systems generate more content than ever before.

If human moderation is shrinking, what is it up against? The numbers are staggering.

Multiple analyses show that AI is no longer a minority contributor to online content; it is rapidly becoming dominant. A 2025 study by SEO analytics firm Graphite [4] found that more than half of newly published written content on the internet is now AI-generated, up from just 10% before the launch of ChatGPT in late 2022.

This tidal wave of AI content, spanning text, images, videos, and automated summaries, dramatically increases the volume of material Google’s systems must assess for safety, quality, and adherence to policy. Relying on automated filters alone is an appealing shortcut, but it also means that subtle nuances, cultural contexts, and borderline cases may slip past without human insight.

Reddit user discussions about AI content also underscore how pervasive this issue feels to people trying to interpret what’s real versus what’s machine‑made:

“AI content is everywhere; the tools can’t keep up with nuance anymore.”— Reddit commenter in an AI discussion thread.

While the top of individual search results pages may still favour quality human-written pages, the underlying mass of AI-generated material continues to swell, intensifying the workload for Trust & Safety teams and the complexity of enforcement decisions.

What Trust & Safety Did — Before the Cuts

Before Google’s recent round of workforce reductions and automation shifts, Trust & Safety teams operated with a clear set of responsibilities and a structured human‑centric workflow that balanced policy, context, and judgment. Their days revolved around three core tasks:

- Content review: Human moderators and policy specialists scanned flagged material (text, images, and video snippets) to determine whether it violated Google’s policies on abuse, spam, hate, or safety risks.

- Policy enforcement: Teams interpreted and enforced guidelines that governed acceptable content across products like Search, YouTube, and Gmail, taking into account context and local norms.

- Appeals and escalations: When users disputed automated decisions or contestable flags, human reviewers stepped in to analyse nuance before reversing or upholding actions.

Even at full strength, the workload was staggering. On community platforms like Reddit, itself a deeply human‑driven moderation ecosystem, volunteer and paid moderators have long warned about limits. In an earlier thread on a trust & safety professionals subreddit, one commenter highlighted how razor‑thin teams already were before cuts:

“…only 97 workers, then down to 74, handling appeals — no wonder most are AI‑handled…” — a Reddit post reflecting on staffing constraints as workloads ballooned.

Academic research underscores these inherent challenges in moderation environments. Studies such as Policy-as-Prompt: Rethinking Content Moderation in the Age of Large Language Models, available on ResearchGate [5], describe how moderation infrastructure traditionally depended on humans to operationalise and interpret policies, a model now under strain as AI scales content and blurs contextual judgments.

Another recent study introduces frameworks for AI-assisted governance that seek to blend human and machine review, precisely because relying solely on software misses the nuanced reasoning humans provide, especially in community contexts where rules vary and content interpretation is complex.

In other words, even before current cuts, trust and safety work was a balancing act between human interpretation and automated assistance. As teams shrank and automated systems took on more responsibility, that balance shifted, often leaving nuanced decisions to tools still learning the rules while humans focus only on the most ambiguous or serious cases.

The Impact of AI‑Generated Content: What Workers and Users Are Saying

As Google’s platforms absorb ever greater volumes of AI-generated content, the lived experience of workers and everyday users reveals how this rapid shift is affecting trust, morale, and real-world outcomes. Below, we break the conversation into insider reactions from the AI workforce and user criticism of AI-powered search results, drawing on authentic Reddit commentary and industry reporting.

Google’s internal workforce responsible for moderating and improving AI systems has been under intense pressure. Many of these workers, often outsourced contractors known as “super raters”, performed nuanced tasks like evaluating and editing outputs from tools such as the Gemini chatbot and AI Overviews for search summaries. These roles required judgment, subject‑matter expertise, and quality control long before automation took over.

But in 2025, hundreds of these workers were abruptly laid off, triggering both uncertainty and backlash.

These layoffs, according to workers and reporting, weren’t solely about cost. Many employees believe they were replaced with automation or cheaper third‑party labour, often at the expense of working conditions and wages. Salaries for comparable work varied widely, with some raters earning $28–$32 an hour while subcontracted colleagues earned far less.

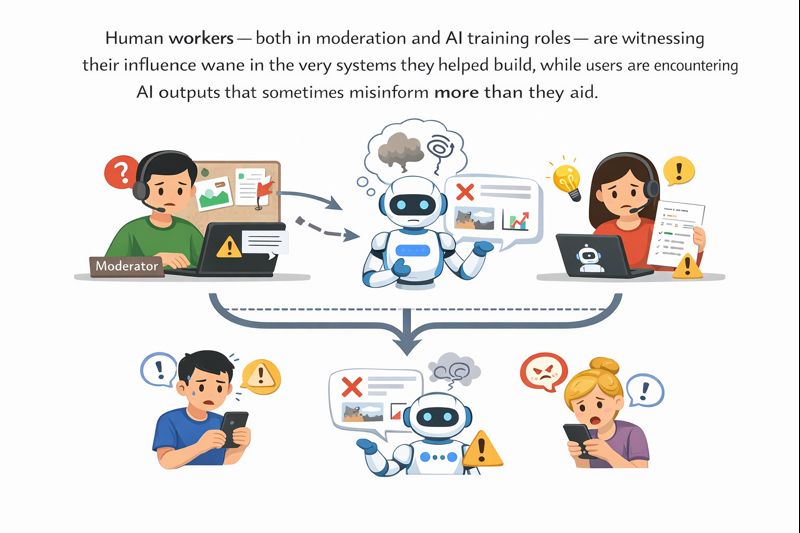

The broader morale impact is clear in online discussions: many workers felt their expertise was being undercut by technology they were helping to build. Instead of recognising their role in shaping cleaner AI outputs, they watched systems they helped train edge them out of the process. Some described the work environment as “oppressive” following layoffs, performance pressure, and reduced internal communication channels.

Insiders describe a tension between the value of human judgment and a push toward automated tooling that often sidelines the very people who taught those tools how to behave.

It’s not just workers who are feeling the reverberations of AI‑driven content shifts. Regular users are increasingly vocal about how Google’s AI‑augmented search outputs behave in the wild.

Across Reddit, users have shared examples of AI‑generated search summaries that are inaccurate, misleading, or downright frustrating:

“AI Overviews put people at risk of harm with misleading health advice,” summarising a news report about inaccurate AI health summaries that could worsen user decisions.

One commenter reacted to these developments with exasperation:

“I’ve had so much obviously incorrect info in Google AI Overviews I never asked for. It’s exhausting. It’s hostile. Pull the plug already.”— A Reddit user responding to concerns about harmful or misleading AI‑generated summaries.

Another thread on Reddit’s YouShouldKnow subreddit surveyed user experiences, highlighting repeated errors and confidence in incorrect AI answers:

“I used the full AI search earlier … it told me it was a different animal breed entirely… then doubled down.”— Reddit user sharing firsthand examples of AI search inaccuracies.

Critiques often focus on how AI responses can appear confident even when they’re wrong, creating situations where users trust the answer because the system sounded authoritative. Other discussions express frustration that these AI summaries can block access to traditional search results that might better answer a question.

Some Redditors paint a bigger picture, suggesting that widespread AI content and AI‑generated responses have diluted the quality of Google Search and encouraged people to seek human‑driven sources like Reddit and TikTok instead.

Human workers (both in moderation and AI training roles) are witnessing their influence wane in the very systems they helped build, while users are encountering AI outputs that sometimes misinform more than they aid. These overlapping frustrations from insiders and everyday searchers alike underscore a critical challenge: automation alone cannot guarantee accuracy or safety without significant human oversight and iterative refinement.

Consequences: Backlash, Misinformation & Trust Erosion

As AI-generated content and automated moderation systems rise, real-world consequences are emerging, from misleading information in search results to growing distrust among users and creators. Google’s AI Overviews (the generative snippets that appear at the top of search results) have faced increasing scrutiny for delivering inaccurate, misleading, or potentially harmful information. Reporting by The Guardian [6] documented several cases where AI summaries provided erroneous medical advice, such as incorrect dietary guidance for serious illnesses, raising concerns about risk to public health.

In Reddit communities, users readily share their frustrations with these automated results.

One commenter wrote, “Google AI overviews are wildly inaccurate and just in the way at this point.” Another thread mocked the absurdity of trusting AI summaries: “…drive me absolutely insane. This is far worse than taking the first search result and running with it…like taking life advice from a scam artist.”

Comments like these reveal a key tension: AI systems sound confident even when they’re wrong, which can mislead users into trusting incorrect information. Independent research shared on Reddit showed that AI-powered search tools across platforms demonstrate high error rates, with models incorrectly answering more than 60% of tested queries in some cases.

Large-scale surveys suggest a broader trust crisis for online content, a backdrop that makes AI missteps even more consequential. According to poll results reported in National Enquirer [7], 75% of Americans trust the internet less than ever before, with many struggling to distinguish real from artificially generated or misleading content. Research into AI trust echoes this trend globally. One analysis found that although many people regularly use AI tools, only a minority fully trust the systems’ responses, and 42% report encountering inaccurate or misleading AI content. Experts describe this dynamic as the AI trust paradox, where advances in language and presentation make AI outputs appear credible, even when they are not.

The consequences are significant: when users repeatedly encounter inaccurate AI summaries at the top of search results, it can reduce confidence in both AI systems and the underlying platforms themselves. One analysis suggests that misinformation shown near the top of search pages can damage the perceived reliability of the entire result set, even when accurate information appears further down.

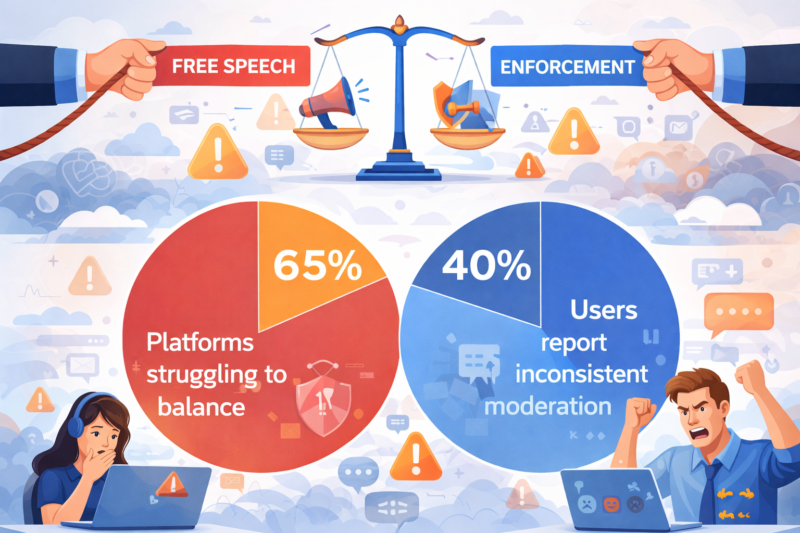

Content moderation efforts (whether automated or human-assisted) also face backlash and trust tensions. Across online platforms, 55% of content removed is for hate speech or harassment, reflecting enforcement priorities against harmful material. Around 65% of platforms cite challenges in balancing free speech with enforcement, highlighting the complexity of policy decisions at scale, and approximately 40% of users report that inconsistent moderation undermines trust in platforms.

Despite advances in AI moderation, false positives and undetected harmful content remain concerns. One moderation study observed that AI tools still miss large amounts of toxic content without human oversight, underscoring the limits of automation.

It isn’t just users reacting. Content creators and publishers have also voiced frustration. On Reddit, web developers and creators described feeling “betrayed” by Google’s AI search rollout, arguing that AI summaries have undercut traffic to original sources and weakened incentives for high-quality content. This sentiment aligns with broader concerns from journalism and online media analysts, who warn that AI systems’ lack of transparency and failure to reliably cite or credit original sources can erode trust in legitimate publishers and information ecosystems.

Taken together, these trends paint a challenging picture. AI systems’ misinformation and hallucinations can harm users, especially when authoritative platform branding creates misplaced trust. Public confidence in online information has declined significantly, making users more sceptical and less engaged. Both enforcement gaps and over-reliance on automation contribute to distrust, even when well-intended. Platforms that rely heavily on automatic systems for content curation and moderation must grapple with this credibility gap. For Google and similar companies, trust erosion isn’t just a reputational issue. It affects how billions of people use search and information services daily.

What’s Next: Solutions, Rebuilds, and Industry Trends

As AI‑generated content continues to surge and human moderators face shrinking teams, many industry experts and insiders are asking a critical question: What comes next? The consensus among policy analysts, trust and safety professionals, and civil‑society advocates is that the future of online governance must involve smarter, more ethical frameworks that combine automation with deliberate human judgment.

One leading voice in this conversation is PEN America [8], whose report Treating Online Abuse Like Spam lays out concrete recommendations for how platforms can evolve their moderation and safety strategies. Rather than treating content enforcement as a purely reactive backend process, the report argues that platforms should proactively detect abusive or harmful content and empower users with more control over what they see, essentially giving individuals tools to quarantine and review content much like an email spam filter. This model aims to increase transparency, user agency, and trust, rather than leaving content decisions solely in opaque automated black boxes.

PEN America’s proposals reflect a broader shift in thinking: platforms cannot simply cut costs while expecting AI to handle everything. Effective systems require ethical design, human oversight, and user engagement to mitigate harms without stifling free expression. As one expert quoted in their research put it, technology to detect harm already exists. What’s missing is the commitment from platforms to invest in tools that prioritize user safety and autonomy.

Inside the ranks of trust and safety professionals, there’s a similar dialogue about the evolving role of humans in moderation workflows. In forums dedicated to the field, seasoned moderators stress that while AI can dramatically improve scale and speed, it cannot replace human intuition and policy interpretation, especially for edge cases where context matters. One Reddit commentator with direct experience in the space summed it up this way:

“As good as these LLMs are, and they are good, there’s an interpretive bar to our work that looks really hard to overcome without human oversight… Bad actors will use AI systems to generate spam and content violations, and they will specialize in evading AI systems designed to detect them.” — Reddit user in a trust and safety professionals community.

This perspective mirrors a growing industry trend: hybrid moderation models that use AI for initial filtering and pattern recognition, but retain human reviewers for policy nuance, appeals, context‑specific decisions, and complex enforcement judgments. Another Reddit discussion highlights this balanced approach, noting that AI provides critical scalability while humans bring emotional judgment and adaptability, a layered solution many believe will define the next generation of content governance.
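To make the hybrid model concrete, here is a minimal sketch in Python of how such routing might look. It is an illustration only, not a description of Google’s or any platform’s actual system: the `Item` type, the `route` function, the `violation_score` field, and both thresholds are hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass

# Hypothetical thresholds, chosen only for illustration.
AUTO_REMOVE_THRESHOLD = 0.95
AUTO_ALLOW_THRESHOLD = 0.05


@dataclass
class Item:
    item_id: str
    violation_score: float   # assumed output of an automated classifier, 0.0 to 1.0
    is_appeal: bool = False  # True if a user disputed an earlier automated decision


def route(item: Item) -> str:
    """Return 'auto_remove', 'auto_allow', or 'human_review' for one item."""
    # Appeals always go to a person, regardless of the classifier score.
    if item.is_appeal:
        return "human_review"
    # The automated layer only acts on cases it is very sure about.
    if item.violation_score >= AUTO_REMOVE_THRESHOLD:
        return "auto_remove"
    if item.violation_score <= AUTO_ALLOW_THRESHOLD:
        return "auto_allow"
    # Everything in between is treated as ambiguous and queued for a reviewer.
    return "human_review"


items = [
    Item("a", 0.99),
    Item("b", 0.01),
    Item("c", 0.60),
    Item("d", 0.99, is_appeal=True),
]
decisions = {item.item_id: route(item) for item in items}
print(decisions)
```

In this sketch, the width of the band between the two thresholds determines how much work reaches human reviewers. Narrowing it reduces the human workload but pushes more borderline decisions onto the automated layer, which is the trade-off the professionals quoted above are describing.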

Beyond workflow considerations, many insiders are also exploring career evolution within trust and safety roles. Faced with cuts in moderator headcount, professionals are pivoting toward policy, engineering, and strategic operational roles that focus on designing the systems around moderation, rather than performing first‑line screening themselves. As one moderator put it in discussing future career paths:

“If you want longevity in T&S, look at legal/policy or more technical routes like product management, analyst, or engineer roles… basic technical skills combined with policy knowledge will help.” — Reddit professional discussion on evolving roles.

Platforms themselves are responding to these pressures with new safety initiatives aimed at equipping moderators (both automated and human) with better tools. For example, Reddit’s Mod News updates showcase efforts to overhaul moderation tooling, reduce burden, and help communities grow with stronger support systems. These moves reflect a broader recognition that moderation can’t remain static if it’s expected to handle billions of interactions daily.

Taken together, these voices point toward a future of trust and safety that isn’t purely machine‑centric or human‑centric, but blends the strengths of both. Ethical frameworks like those advocated by PEN America push platforms to invest not just in automation, but in transparent, user‑centred safety tools that empower people and respect free expression. At the same time, trust and safety professionals themselves are adapting, seeking roles and skills that align with an AI‑infused ecosystem where human insight remains indispensable.

For platforms like Google, which sit at the intersection of search, social communication, and AI dissemination, these shifts aren’t optional. Without investment in balanced, ethically grounded moderation frameworks, the risk isn’t just operational inefficiency; it’s a deeper erosion of public trust in the digital spaces billions rely on every day. Google’s shrinking Trust & Safety teams amid a flood of AI-generated content highlight how hard it is to balance scale, accuracy, and humanity. Algorithms can process millions of posts instantly, but they miss the nuance, context, and ethical judgment that humans still provide. Trust isn’t lost, but rebuilding it requires human-in-the-loop systems, transparent policies, and ethical moderation tools.

As Meredith Whittaker, co-founder of the AI Now Institute [9], said:

“Platforms cannot scale trust with algorithms alone. Humanity, oversight, and accountability are the core ingredients for an internet that users can actually rely on.”

Without this balance, the internet risks becoming fast, automated, and inscrutable, leaving users unsure of what to trust.

Sources

1. “New data shows that more than 50% of articles online are AI-generated.” AI Base, news.aibase.com/news/21957. Accessed 23 Feb. 2026.

2. Financial Express, www.financialexpress.com/life/technology-google-lays-off-over-200-ai-contractors-amid-clash-over-working-conditions-3979282/. Accessed 23 Feb. 2026.

3. Reddit, www.reddit.com/r/artificial/comments/1nhr7za/hundreds_of_google_ai_workers_were_fired_amid/. Accessed 23 Feb. 2026.

4. “More Articles Are Now Created by AI Than Humans.” Graphite Growth Inc., 7 May 2024, graphite.io/five-percent/more-articles-are-now-created-by-ai-than-humans. Accessed 23 Feb. 2026.

5. ResearchGate, www.researchgate.net/publication/392947981_Policy-as-Prompt_Rethinking_Content_Moderation_in_the_Age_of_Large_Language_Models. Accessed 23 Feb. 2026.

6. Gregory, Andrew. “Google AI Overviews put people at risk of harm with misleading health advice.” The Guardian, 2 Jan. 2026, www.theguardian.com/technology/2026/jan/02/google-ai-overviews-risk-harm-misleading-health-information. Accessed 23 Feb. 2026.

7. National Enquirer, nationalenquirer.com/americans-trust-less-than-half-of-what-they-see-online-survey-finds/. Accessed 23 Feb. 2026.

8. “Treating Online Abuse Like Spam.” PEN America, 21 May 2025, pen.org/report/treating-online-abuse-like-spam/. Accessed 23 Feb. 2026.

9. Kak, Amba. “Home.” AI Now Institute, ainowinstitute.org/. Accessed 23 Feb. 2026.