
Meta presents itself as a global leader in AI safety. Executives describe advanced systems that can detect harmful content before users ever see it. Public statements frame AI as faster, fairer, and more scalable than human moderation. The implication is progress. The future is automated. Platforms are safer because machines are watching.

At the same time, Meta still depends on large armies of contract content moderators. These workers review some of the most disturbing material on the internet. Graphic violence. Child exploitation. Terrorist propaganda. Self-harm. Much of this work happens far from Silicon Valley, in outsourced facilities across Africa, Asia, and Latin America.

This is the paradox at the heart of Meta’s safety story. AI is marketed as the solution. Humans remain the last line of defense.

The contradiction raises a basic question. If Meta’s AI systems are truly as advanced as claimed, why has human moderation not been phased out? Why are tens of thousands of contractors still required to make final decisions on what billions of users can see?

According to TIME [1], outsourcing allows technology companies to retain control over content decisions while distancing themselves from the legal and moral responsibility tied to labor conditions. Contractors absorb the psychological risk. Platforms retain the brand narrative of innovation and responsibility.

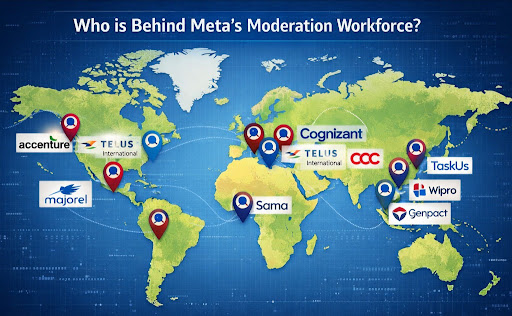

Who Is Behind Meta’s Moderation Workforce?

When Meta talks about AI safety breakthroughs, it rarely mentions the people and the organisations that actually enforce those rules day to day. In reality, much of the content moderation work is done not by Meta’s own employees but by outsourced contractors. These firms supply thousands of reviewers in dozens of countries to make final calls on graphic, abusive, or harmful content that automated systems cannot resolve on their own.

Several third-party companies contract with Meta to provide human moderation services.

Telus, a Canadian digital services firm, has been contracted to moderate Meta content in Europe, Latin America, and other regions. In April 2025, Reuters [2] reported that Meta ended its contract with Telus for a major moderation hub in Barcelona, resulting in up to 2,000 moderators being placed on gardening leave and the work being shifted elsewhere. Unions confirmed the team was handling Meta content before the contract ended.

In Africa and other markets, Meta’s moderation work has been linked to Majorel, a Luxembourg-based outsourcing company under the French-headquartered Teleperformance group. According to Courthouse News [3], in Accra, Ghana, around 150 moderators working for Majorel report that they are exposed to extreme graphic content with inadequate support, prompting legal action and complaints from advocacy groups.

Sama previously held Meta’s content moderation contract in East Africa. Former moderators have said Sama paid low wages, imposed strict surveillance, and provided limited mental health care. According to the Center for Democracy & Technology [4], Meta has since ended its contract with Sama amid backlash over working conditions.

These staffing arrangements create layers between Meta and the moderators themselves. Moderators often sign confidentiality agreements and are told only after hiring that they will review Meta content. One Reddit user who said they worked as a Facebook/Instagram moderator under strict nondisclosure described how recruiters withheld the nature of the job until after onboarding.

Other workers have also expressed confusion about which companies handle moderation roles and where those jobs are located, which underscores the secrecy built into outsourcing. Users noted that contracts are often opaque, and some speculated that AI automation is increasing as contractor roles shift or disappear.

Contracting has legal implications. In Kenya, moderators sued Meta and its subcontractors over unfair dismissal and poor conditions, and the Court of Appeal ruled that Meta could be sued in Kenyan courts despite its position that moderators are not its direct employees.

Meanwhile, moderators are organising globally to demand better protections. The Verge [5] reported that they are forming coalitions and unions with support from labour groups in Ghana, Kenya, and other countries to challenge outsourcing practices and secure rights like fair pay and mental health support.

These contractors and their thousands of employees form the human backbone of content moderation on Meta’s platforms. Yet they remain largely invisible in Meta’s public safety narrative. Companies like Telus, Majorel, and formerly Sama absorb the operational burden while Meta focuses on branding AI progress.

Exploring who these workers are and how they are managed is essential to understanding why humans remain integral to content moderation, even as Meta publicly claims AI can handle more of this work.

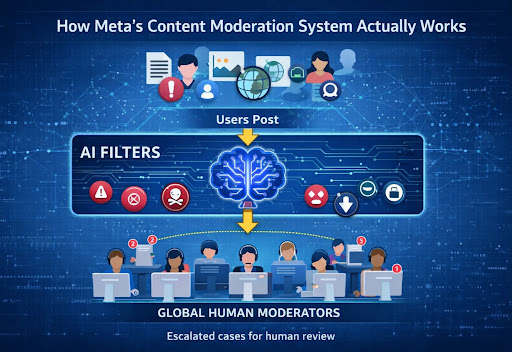

How Meta’s Content Moderation System Actually Works

Meta often describes its moderation pipeline as “AI first.” The company highlights automated systems that scan billions of posts, images, and videos every day. These systems detect known harmful content patterns and remove obvious violations instantly. This is what Meta focuses on in press statements when it talks about “AI safety breakthroughs.”

But the reality on the ground is more complex. Automated tools handle volume. They do not handle nuance.

When AI cannot confidently classify a piece of content, it is routed to human reviewers working for third-party contractors. These workers are placed on the front line of Meta’s safety operations. According to reporting on legal action in Ghana, compiled by the Business & Human Rights Resource Centre [6], roughly 150 content moderators employed by a contractor in Accra spend their days reviewing material flagged from Facebook, Instagram, and Messenger. They are expected to meet “opaque targets that dictate whether or not they are able to scrape by in Accra.” Many report developing mental illness as a result of the work.

These accounts come from interviews collected by Foxglove Legal alongside reporting by The Guardian and other investigative outlets. The same sources describe workers being told not to tell their families that they moderate Meta content. Many came from abroad and fear being sent back to conflict zones if they complain.

Michaela Chen of Foxglove said, “Meta’s content moderation model made this tragedy not just possible, but inevitable.” Martha Dark, also of Foxglove, described the situation as “a complete disregard for the humanity of its key safety workers.”

This dynamic persists even after Meta’s previous moderation hub in Nairobi was closed and replaced with the new site in Ghana.

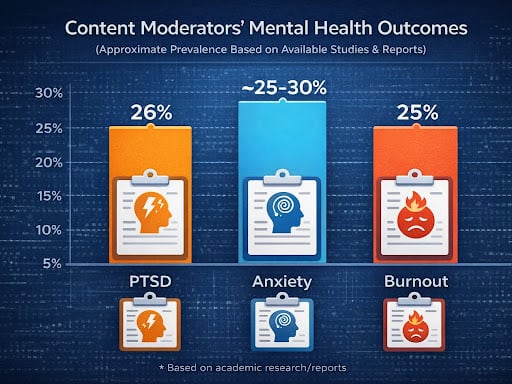

In Kenya, legal filings allege more than 140 Facebook content moderators were diagnosed with severe PTSD, generalized anxiety disorder, and major depressive disorder due to exposure to graphic content, including murders, suicides, and child abuse. Dr Ian Kanyanya from Kenyatta National Hospital found 81% of those examined had severe or extremely severe PTSD symptoms, often persisting long after they stopped working. The Guardian [7] wrote that one lawyer representing moderators said the work “damaged” them in profound ways.

These harms happen despite Meta’s public narrative of technological progress and AI safety leadership. Outsourced contractors such as Majorel and, previously, Sama have handled the bulk of review work while Meta positions itself at arm’s length from the day-to-day labor. According to business and human rights reporting, Kenyan courts have even ruled that Meta can be sued in local courts over how outsourced moderation was handled, undermining Meta’s claim that contractors fully shield it from legal responsibility.

Former moderators and labour advocates describe a global pattern. Many moderators have formed unions or joined broader alliances to demand better working conditions, more mental health support, and meaningful oversight. A global trade union alliance for content moderators cites widespread “depression, post-traumatic stress disorder, suicidal ideation, and severe mental health consequences” from the work and aims to hold firms like Meta accountable for outsourcing practices that shield them from direct labor obligations.

At the same time, community sentiment on Reddit highlights a different angle. Some users link mass layoffs to AI shifts. One Reddit [8] thread about the Barcelona moderation centre losing up to 2,000 moderators after Meta ended its contract shows public surprise and concern.

Another Reddit discussion linked layoffs to AI implementation. One user wrote that “AI is nowhere near advanced enough to do a human’s job,” adding that “nobody is stupid enough to replace humans with half-assed AI this early.”

The result is a combined system. AI screens and flags. Humans adjudicate and interpret. Neither is fully sufficient alone, and Meta’s public emphasis on AI breakthroughs obscures how dependent the company remains on human moderators to handle complexity, context, and judgment in real-world cases.
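The combined system described in this section can be illustrated with a simple confidence-threshold router. This is a minimal sketch under stated assumptions, not Meta’s actual pipeline, whose internals are not public: the `Post` type, the `route` and `triage` functions, and both threshold values are hypothetical. It shows the structural point only: content the classifier scores confidently in either direction is handled automatically, and everything in the uncertain middle band lands in a human review queue.

```python
from dataclasses import dataclass

# HYPOTHETICAL thresholds chosen for illustration; real platform values are not public.
AUTO_REMOVE_THRESHOLD = 0.95  # classifier is very confident the post violates policy
AUTO_ALLOW_THRESHOLD = 0.05   # classifier is very confident the post is benign


@dataclass
class Post:
    post_id: str
    violation_score: float  # hypothetical classifier output in [0, 1]


def route(post: Post) -> str:
    """Return 'remove', 'allow', or 'human_review' for a single post."""
    if post.violation_score >= AUTO_REMOVE_THRESHOLD:
        return "remove"
    if post.violation_score <= AUTO_ALLOW_THRESHOLD:
        return "allow"
    # Anything the model cannot judge confidently goes to a human reviewer.
    return "human_review"


def triage(posts: list[Post]) -> dict[str, list[str]]:
    """Sort posts into the three queues by their routing decision."""
    queues: dict[str, list[str]] = {"remove": [], "allow": [], "human_review": []}
    for post in posts:
        queues[route(post)].append(post.post_id)
    return queues


if __name__ == "__main__":
    sample = [Post("a", 0.99), Post("b", 0.01), Post("c", 0.60), Post("d", 0.30)]
    print(triage(sample))
    # prints {'remove': ['a'], 'allow': ['b'], 'human_review': ['c', 'd']}
```

Moving the two thresholds toward each other shrinks the middle band and sends fewer posts to humans, but only by letting the classifier decide more borderline cases on its own, which is where the nuance problems described in this article arise.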

The Hidden Army of Contract Moderators and the Cost

Behind Meta’s polished AI safety messaging sits a largely invisible workforce. Contract content moderators operate across Ghana, Kenya, the Philippines, India, and several other countries. They review the content that automated systems cannot confidently judge. Their work includes extreme violence, child sexual abuse material, terrorism footage, and self-harm.

According to The Guardian, Meta has relied on outsourcing firms such as Teleperformance and Sama, formerly known as Samasource, to run these moderation centres. These companies recruit, train, and manage moderators on Meta’s behalf. The workers are not Meta employees. They are contractors, often earning a fraction of Silicon Valley salaries.

Recent reporting highlights the scale of this workforce in West Africa. According to one of The Guardian’s investigations, around 150 content moderators employed through a contractor in Ghana were tasked with reviewing some of the most disturbing material on Meta’s platforms. Many of these workers were recruited from other countries and relocated to Accra. Some reported being warned not to tell family members what their job involved.

The psychological toll has been severe. According to the same Guardian reporting, moderators described panic attacks, insomnia, and long-term trauma linked directly to their daily exposure to graphic content. Mental health support was described as limited or inconsistent.

Worker advocates have been blunt in their assessment. In comments cited by The Guardian, one advocate from the legal group Foxglove described Meta’s outsourcing model as “nothing short of a complete disregard for the humanity of its key safety workers.” The criticism focuses not only on the content itself, but on the deliberate distance created by contracting.

The Guardian’s investigation into the Ghana moderation hub documents strict productivity targets, heavy surveillance, and minimal power for workers to refuse harmful tasks. While Meta maintains that it requires vendors to meet safety standards, the lived reality described by moderators tells a different story.

This hidden army remains essential. AI systems filter content. Human moderators absorb the psychological cost.

The most advanced AI systems do not experience trauma. Human moderators do.

According to The Guardian, more than 140 former Facebook content moderators in Kenya were diagnosed with post-traumatic stress disorder after reviewing graphic and disturbing material on Meta’s platforms. Medical assessments cited in court filings found widespread symptoms of severe anxiety, depression, nightmares, and emotional numbness. Some moderators reported being unable to sleep without medication. Others struggled to function in daily life long after leaving the job.

One legal filing referenced by The Guardian describes moderators reviewing videos of murders, suicides, sexual abuse, and extreme violence for hours each day with limited breaks. Psychological support was available on paper, but many workers said it was inadequate or discouraged in practice.

Worker testimony from these cases paints a stark picture. One anonymised moderator quoted in investigative reporting said,

“You stop reacting like a normal human being. Things that should shock you no longer do.” Another described going home and replaying images in their head despite trying to disconnect.

“I felt like the content followed me,” the worker said.

The burden is compounded by low pay and precarious employment. Many moderators earn wages that barely meet local living costs despite the severity of the work. Contracts are often short-term. Job security is weak. Workers who fail accuracy targets or request reassignment risk dismissal.

Moderators frequently sign strict nondisclosure agreements, limiting their ability to speak openly about their experiences. This silence makes the scale of harm harder to measure and easier to ignore.

This human cost explains why content moderation cannot be discussed purely as a technical problem. AI may reduce volume, but it does not eliminate the need for people to absorb the most disturbing corners of the internet.

Meta’s AI Safety Claims

Meta frequently promotes its progress on artificial intelligence and frames these advancements as critical to platform safety. Here are some of the core claims the company has made publicly:

- AI improves safety at scale: Meta says machine learning and generative models help detect and remove harmful content more quickly than humans alone. Executives regularly highlight how algorithms can scan billions of posts and flag content for review.
- Automation streamlines risk checks: According to internal documents reviewed by NPR [9], Meta plans to use AI to automate up to 90 percent of privacy, safety, and risk evaluations across Facebook, Instagram, and WhatsApp. Meta presents this shift as a way to enhance efficiency and consistency in enforcement, and says it will let teams scale safety oversight using AI rather than relying as heavily on manual processes.
- Safety features in Llama models: Meta has discussed building guardrails directly into its Llama models and derivative tools to prevent harmful outputs. AI Insider [10] reports that tools like Llama Guard and related safety modules are positioned as part of Meta’s broader trust and safety strategy for developers using its AI.
- Youth and user-safety updates: Meta has announced AI enhancements aimed at protecting teens and vulnerable users, such as updates to content filters for teenage accounts and expanded privacy controls tied to AI experiences.
- Guardrails and industry collaboration: On policy pages and summit materials, Meta [11] outlines principles like responsible capability scaling, model red-teaming, and external reporting frameworks in support of safer AI deployment.

Meta’s public messaging often places AI at the heart of its future safety strategy, implying that next-generation models and automated systems will reduce harm and protect users more effectively than ever before.

At the same time, Meta points to years of heavy investment in safety and security, including thousands of staff and technology resources that extend beyond moderation alone. According to Meta’s responses to U.S. Senate questions, the company has spent more than $20 billion on safety and security since 2016, including roughly $5 billion in 2023 alone.

This massive investment feeds into Meta’s narrative that AI is a tool for improved safety. Yet, as other sections of this article explore, the company’s continued reliance on contracted human moderators highlights a gap between public AI safety claims and operational realities.

On Reddit, Meta’s shift toward AI-driven moderation is not discussed in abstract terms. Users talk about it through lived experience. Bans that make no sense. Harmful content that slips through. Appeals that go nowhere. Together, these comments offer a ground-level view of how automation actually feels to users.

One recurring theme is fear that fewer humans means worse outcomes.

“Feels like we need more moderation… not less,” one user said in a thread reacting to reports of Meta ending contracts with large moderation teams.

In discussions following layoffs at moderation centres, users worried that removing human reviewers would increase errors rather than reduce them. The concern was not ideological. It was practical. People had already seen what happened when moderation coverage dropped.

Other users described how Meta itself frames the transition internally.

“Meta will let AI approve most changes … humans only for complex cases.”

According to that thread, automation now handles routine enforcement decisions while human reviewers are reserved for edge cases. Several commenters questioned whether AI was actually capable of identifying what counts as routine versus complex in the first place.

Some accounts went further, describing a loss of meaningful appeal.

“AI made the final judge … even Meta staff can’t override,” one user stated after being repeatedly denied account reinstatement.

Users who appeal bans often report receiving identical responses, which suggests automated review rather than human reconsideration. The sense that no one is actually reviewing the case fuels frustration and distrust.

These concerns are not hypothetical. Reddit is filled with examples of AI moderation failures.

In multiple threads, users reported massive waves of Instagram bans affecting artists, small businesses, and personal accounts. Many of these bans were triggered without explanation and upheld automatically. Users described losing years of content and contacts overnight, with no clear violation identified.

At the same time, users have documented the opposite failure. Harmful content stays up when humans are absent. During moderation strikes or staffing disruptions, users reported a noticeable surge in graphic or abusive content appearing on feeds. AI systems alone failed to catch material that would normally be removed by human reviewers.

Independent research supports these experiences. According to PEN America [12], algorithmic moderation frequently misclassifies context, banning neutral or reclaimed terms while missing coded harassment and evolving abuse tactics. Automated systems struggle with irony, regional language, and intent, leading to both over-enforcement and under-enforcement.

Taken together, these reports and users’ experiences expose the limits of Meta’s AI safety narrative. Automation can scale. It cannot understand the harm the way people experience it. When humans are reduced or removed, users feel the consequences directly.

The Legal and Ethical Landscape and Why Full Automation Still Falls Short

As Meta pushes forward with AI-driven safety systems, the legal and ethical ground beneath its moderation model is becoming increasingly unstable. Lawsuits in multiple countries are forcing uncomfortable questions about responsibility, harm, and accountability.

According to The Guardian, former content moderators in Kenya and Ghana have taken Meta and its contractors to court, alleging severe mental health damage caused by their work and inadequate protections. In Kenya, moderators sued after medical evaluations found widespread PTSD, anxiety, and depression linked directly to reviewing graphic content. Courts ruled that Meta could be sued locally despite its argument that moderators were employed by third parties, not Meta itself. This decision weakened the legal shield that outsourcing was meant to provide.

In Ghana, similar complaints have emerged. Moderators employed through contractors described unsafe working conditions, limited psychological support, and fear of retaliation if they spoke out. These cases highlight how existing labor laws struggle to keep pace with global contractor models where responsibility is diffused across borders and companies.

Ethically, this creates a gap. Meta controls the rules, the tools, and the content standards. Contractors absorb the human cost. Regulators are only beginning to address this imbalance.

At the same time, full automation remains out of reach for practical reasons.

AI systems still struggle with nuance. Context matters in moderation. Satire, political speech, cultural references, and reclaimed slurs cannot be reliably judged by models trained on historical data. Language evolves faster than policies or datasets.

Low-resource languages pose another challenge. According to academic research published on arXiv, automated moderation systems perform significantly worse in languages with limited training data, leading to higher error rates and uneven enforcement across regions.

Regulation also limits automation. Under laws like the EU Digital Services Act [13], platforms are required to provide meaningful explanations, appeal mechanisms, and human oversight for moderation decisions. Fully automated enforcement without human review risks violating these requirements.

Even Meta’s own Oversight Board has acknowledged these limits. According to an Oversight Board [14] report on automation thresholds, AI should assist moderation but not replace human judgment in high-impact or ambiguous cases. The Board warned that over-reliance on automation can undermine fairness, transparency, and user trust.

Together, these pressures explain why Meta cannot simply automate its way out of moderation. Legal scrutiny is increasing. Ethical expectations are rising. Technological limits remain real. For now, human moderators remain essential, even as the company publicly emphasizes AI safety breakthroughs.

What Must Change and Why Incremental Fixes Are Not Enough

The gap between Meta’s public AI safety narrative and the lived reality of moderation work has reached a breaking point. Incremental policy tweaks are no longer enough. Structural change is required.

First, contract moderators need stronger protections. This includes enforceable mental health care, realistic review quotas, and the right to refuse extreme content without penalty. According to UNI Global Union, which represents workers across the tech supply chain, content moderators should be covered by clear global standards that treat psychological harm as an occupational risk, not an individual failure. UNI has called for binding protocols on pay, care, and transparency for platform moderation work.

Second, worker conditions must be transparent. Outsourcing thrives on opacity. Moderators are hidden behind vendors, nondisclosure agreements, and shifting contracts. Transparency would mean disclosing where moderation happens, who employs the workers, and what safeguards exist. It would also mean acknowledging moderators publicly as part of the safety system, not as an external afterthought.

Finally, AI must complement humans, not replace them. Experts consistently argue that hybrid systems are the only viable path forward. One digital labor researcher quoted in labor rights reporting described effective moderation as “a socio-technical system, not a software problem.” AI can filter scale. Humans must handle judgment. Treating AI as a replacement increases harm for both users and workers.

Without these changes, Meta’s reliance on contract moderators will remain extractive. The work will continue. The cost will stay hidden.

Meta’s story about AI safety is polished and optimistic. The reality of content moderation is messy, human, and painful. This tension defines the company’s current approach to safety.

Despite claims of rapid AI progress, contract moderators remain essential. AI still fails at nuance, context, and cultural understanding. Laws and oversight bodies demand human involvement. Users feel the impact when humans are removed too quickly.

The contradiction is no longer subtle. Meta promotes AI as the future of safety while depending on an invisible workforce to clean up what automation cannot handle.

Accountability can no longer stop at technology. It must extend to labor. Until worker protections, transparency, and genuine hybrid systems are treated as core safety investments, Meta’s AI safety promises will remain incomplete.

Sources: