
Microsoft presents a confident vision of responsible AI. The company states that AI should be human-centered and accountable. It promotes fairness, safety, reliability, transparency, and inclusiveness as core commitments. These ideas appear across Microsoft’s official Responsible AI framework and public communications. Readers are told that AI should empower people, not replace them. They are assured that Microsoft approaches AI with care and strong ethical grounding. Microsoft highlights governance structures, ethical standards, and oversight mechanisms. It describes review boards and expert teams. It also describes internal controls that are meant to reduce risk and protect users. Microsoft says this is how it designs, builds, and deploys AI systems.

On paper, this sounds reassuring. It suggests protection. It suggests rigorous responsibility. It also suggests that humans are central to every major decision involving AI products. Microsoft states that it takes bias, harm, and misuse seriously. It also states that it does not treat AI as an autonomous, unaccountable system. Instead, it tells the world that humans remain in charge and that AI remains a tool. That is the narrative. It is polished. It is public. It is strategic.

But this public narrative opens a critical question. What does “human-centered” actually mean inside real AI development? How does it affect the people whose labor shapes AI systems daily? How does it affect the workers who label data, test outputs, evaluate risks, and absorb emotional stress from harmful material so that AI can function safely? Microsoft talks about responsible AI. It talks about human protection. It talks about alignment with values. Yet there is a quieter reality behind the product launches and keynote promises.

What Microsoft Publicly Says About Human Oversight

Microsoft’s official message [1] frames human oversight as a central part of building trustworthy AI. The Responsible AI principles page states that AI should treat all people equitably, perform reliably, respect privacy, and maintain transparency and accountability.

In its Responsible AI guide, Microsoft’s leaders stress human control over AI systems. The company says that fairness, reliability, and accountability are core to its mission. This messaging emphasizes safety. It suggests that humans remain essential at every step.

Natasha Crampton, Chief Responsible AI Officer at Microsoft, said that responsible AI guidelines are embedded into product design from the very beginning.

“With the right guardrails, cutting-edge technology can be safely introduced to the world to help people be more productive and go on to solve some of our most pressing societal problems,” she explained in Microsoft’s own press materials.

Microsoft goes further in its corporate human rights statement. Its Global Human Rights Statement [2] says the company applies its Responsible AI policy to its products, services, and relationships with partners. This includes protecting human rights and ensuring ethical development.

Many external Microsoft resources reinforce the narrative that humans remain at the center of AI development. A dedicated blog emphasizes how Microsoft’s Responsible AI Standard guides product teams throughout the AI lifecycle. To users and customers, this messaging sounds reassuring. It suggests that human judgment remains crucial. It suggests that humans are respected and protected in the loop of AI development. But the corporate narrative often stops short of explaining how this plays out for gig workers, data laborers, and contractors far outside Microsoft’s own workforce.

Who Actually Does the “Human in the Loop” Work?

The corporate narrative paints “human oversight” as a value statement. But in practice, much of this work is done by independent contractors, third-party vendors, and low-paid digital labor across the globe. This is especially true for tasks like data labeling, content moderation, annotation, and quality assurance.

Investigations outside Microsoft reveal that millions of workers worldwide perform essential AI support roles that the public rarely sees. WIRED [3] reports that these people annotate datasets, review outputs, and shape AI behavior.

Journalistic reporting and academic research show how the AI economy depends on this hidden workforce. Tasks that improve AI accuracy or remove harmful results are outsourced to remote workers in Africa, Asia, and Latin America. A comprehensive analysis by digital labor scholars [4] calls this the “hidden human labor” of AI development.

It is important to be precise here. Microsoft itself employs tens of thousands of full-time AI engineers and researchers. These workers design models, test algorithms, and build systems. But the specific human labor that labels training data or evaluates AI outputs at scale is often hired through outsourcing companies, online gig platforms, or subcontractors that operate partly or wholly outside direct employment by Microsoft.

Investigative reporting by TIME magazine [5] and Oxford researchers found that many AI gig workers face “unfair working conditions.” These include low pay, precarious employment, and limited protections despite doing work that AI systems rely on heavily. Thus, “human in the loop” can mean very different things depending on which humans are referenced. On one hand are high-level AI safety engineers in Seattle. On the other are contract workers in outsourcing hubs who perform repetitive, high-volume tasks.

Reality of Work Conditions Behind the Scenes

While Microsoft highlights human oversight as a positive safety attribute, those on the ground tell another story. Many workers describe frantic timelines, strict quotas, and constant pressure to perform. Workers sometimes feel they are part of a pipeline built for speed and scale, not welfare and dignity.

A separate global union push focused on emerging safety standards for content moderators highlighted how outsourced reviewers deal with psychological strain when exposed to harmful imagery or text. According to TIME, 81% of moderators believe their employer lacks adequate mental health support.

This matter is closely linked to the broader AI supply chain. Moderation and evaluation are essential steps in preparing models like Copilot or ChatGPT for safe use. This work doesn’t just involve clicking buttons. People must apply judgment. They must flag subtle biases. They must correct harmful outputs. All of this carries emotional and cognitive weight.

Beyond mental strain, labor reports point to structural inequalities. Tech Equity [6] reports that many AI support roles are subcontracted across borders to regions with lower labor standards and limited worker protections. Salaries can be low relative to the value generated by the work, and benefits may be minimal or absent.

The result is a workforce that bears the burden of human oversight but often lacks the recognition, compensation, and safeguards promised by corporate branding around responsible AI.

The pressure is not limited to outsourced labor. Many developers also feel pushed to use AI tools in ways that don’t match the reality of their work.

On Reddit [7], for example, one developer wrote that their manager “thinks Copilot will do 10 days of work in 10 hours,” even though “the code that is generated by Copilot is full of bogus logic.”

Another Reddit coder described how Copilot is tracked and encouraged at work:

“our company is encouraging to use Copilot & also tracking every developers Github Copilot usage,” adding that the tool “takes long to generate solutions & most of them are not accurate enough.”

These posts reflect broader frustrations about how AI tools are integrated into workflows without regard for worker experience. One user summed up the impact on code quality:

“The quality has visibly dropped…the tool already explained it, so people are skipping context or documentation.”

Reports from worker rights groups find that many AI support roles are subcontracted to regions with lower labor protections, and pay often doesn’t match the value of the work performed. This structural inequality means workers “bear the burden of human oversight” without the recognition, compensation, or safeguards that corporate branding promises around responsible AI.

The Human in the Loop Paradox

There is a contradiction at the heart of the AI story. Companies like Microsoft present AI as a breakthrough that reduces human effort and improves efficiency. Marketing stresses automation. Leaders talk about speed, intelligence, and productivity gains. Yet the reality is far more human than the narrative suggests.

Modern AI systems cannot exist without people. They do not learn without human guidance. They do not refine themselves without workers correcting outputs, labeling datasets, and filtering harmful material. Researchers studying the AI ecosystem repeatedly confirm this truth.

The Oxford Internet Institute [8] explains that AI relies on enormous amounts of invisible labor behind the scenes. Millions of workers perform tasks like data annotation and labeling to train AI models and keep them functioning safely. The institute calls this the “AI supply chain of invisible workers.”

MIT Technology Review [9] reports that the “illusion of AI magic” is only possible because of low-paid humans continuously correcting and supervising machines. One report states that humans are “the fallback system when AI fails. And AI fails a lot.” Millions of people worldwide train AI models for extremely low pay. Workers sit behind screens labeling images, checking text, and fixing AI mistakes. They are the real workforce powering AI systems that tech executives claim are mostly autonomous.

This means the “human in the loop” concept is not just a technical safety design. It is a labor system. It is an economic structure built on human effort. And it is rarely highlighted in corporate AI announcements.

A CBS News [10] look at AI training work describes workers in Africa being paid as little as two dollars an hour to help clean toxic data and moderate harmful content for large AI companies. One worker described the emotional toll of reading violent and sexual content every day just to make AI safer for people in richer countries. This pattern exists across the AI sector. It affects annotation workers. It affects content moderators. It affects human QA evaluators. It affects anyone asked to “train” or “oversee” AI systems so they appear polished and responsible to the public.

The scale of this hidden workforce is large. One estimate from the International Labour Organization [11] suggests that 3.3% of people globally perform AI-support labor in some capacity. Many work without formal recognition or meaningful legal protection.

This hidden labor not only shapes AI performance. It also affects accountability. When AI produces biased or harmful outputs, responsibility is often framed in purely technical language. Companies talk about model tuning. They talk about alignment. They talk about training improvements.

But behind every improvement is a person.

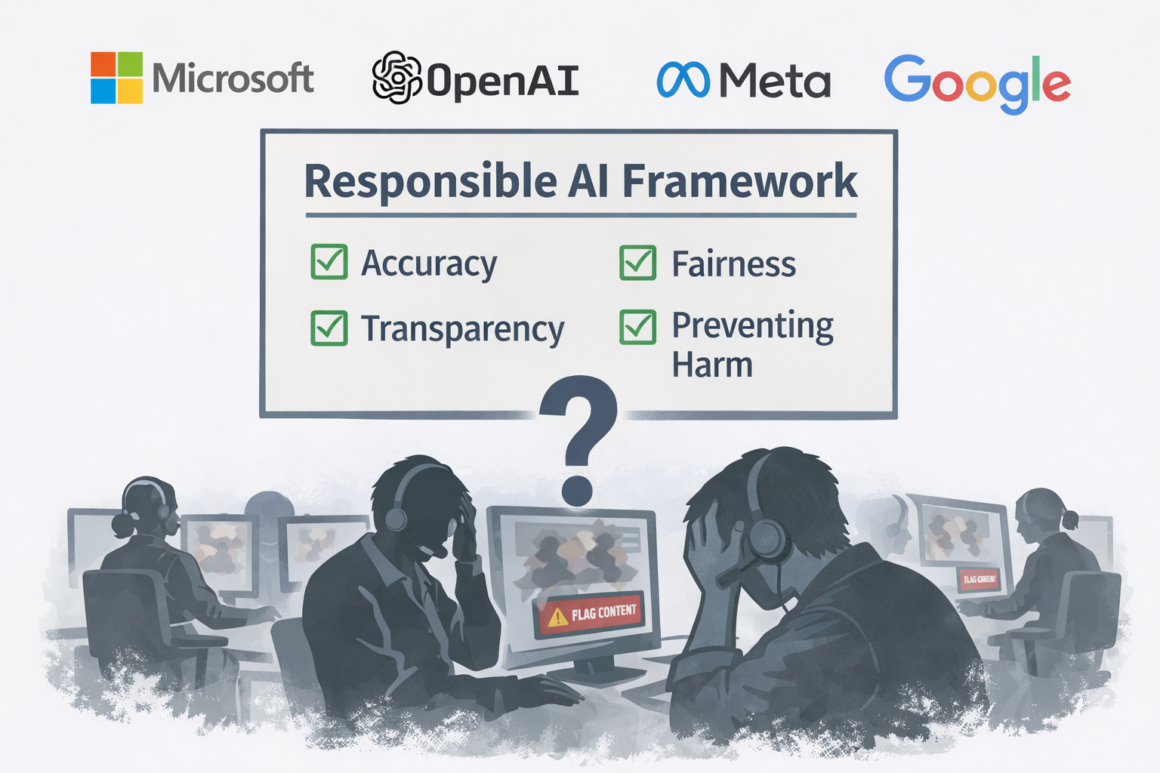

Microsoft, OpenAI, Meta, Google, and many other firms have released Responsible AI frameworks. These frameworks focus strongly on accuracy, fairness, transparency, and preventing harm. But they often say far less about the people who carry out the work that enables those protections to exist.

Labor scholars argue that this omission creates an ethical blind spot. AI ethics discussions often center on users and technology. They rarely center on workers. The social cost of AI is therefore hidden in marketing language about safety and trust.

The paradox becomes clear.

AI promises to reduce human burden. Yet it depends on human burden to function. AI is marketed as independent. Yet it leans on constant human oversight. AI is framed as a technical marvel. Yet it sits on top of human labor that is largely invisible to customers.

Worker voices make this contradiction personal. A user in a thread discussing AI training work wrote,

“AI looks magical to outsiders. But behind every answer, some human somewhere had to correct something, label something, or absorb something awful.”

In another thread, someone who worked on annotation tasks said they felt “like a shadow part of the tech industry.” Their contributions were critical to AI development, but they said they remained invisible in public conversations.

At the same time, customers hear inspirational AI messages. They hear that AI will increase productivity. They hear that AI will reduce drudgery. They hear that AI frees people to think and create. That narrative is true for some users in privileged roles. But it omits the people doing the invisible grounding work.

This has serious implications.

If AI depends on human judgment, then companies cannot treat that human contribution as disposable. If AI systems need humans for safety, then worker protections are a safety requirement, not a luxury. If AI ethics matter, then labor ethics must sit inside AI ethics, not outside it.

Academic voices have begun to insist on this point. Researchers from New York University’s AI Now Institute [12] argue that AI policy must include worker rights and working conditions as part of AI governance frameworks. They warn that ignoring labor problems lets corporations celebrate “ethical AI” while people quietly suffer to maintain it.

The more AI spreads into workplaces and daily life, the more this paradox matters. Every expansion in the scale of AI increases the scale of human labor behind it. Every new feature marketed as smart automation usually requires even more human testing, review, and correction.

This is the paradox of humans in the loop. AI appears to reduce the need for humans. But at the same time, it creates new human dependencies that are pushed out of sight.

As companies like Microsoft publicly celebrate innovation and responsibility, the question remains. Will they acknowledge the full human reality inside their AI systems? Or will the workers who make AI safer continue to stay hidden behind the polished software and confident messaging?

This is not just a technical question. It is a human one.

Accountability and Transparency Questions

If human labor is essential to AI, then accountability must include real working conditions. Transparency is not just about explaining model decisions. It must also mean revealing how labor is structured, compensated, and protected.

One academic study [13] explains how several labor advocacy outlets argue for industry-wide standards to protect AI workers from unrealistic quotas, exposure to harmful material, and precarious contracts. Based on interviews with 113 workers across Colombia, Kenya, and the Philippines, the report uncovers severe occupational, psychological, sexual, and economic harms endured by people moderating violent content and training AI systems for platforms such as Meta, TikTok, and ChatGPT. Workers describe PTSD, substance dependency, union-busting, and exploitative contracts as routine features of an industry designed to normalize harm while hiding abuse.

Tech companies outsource risk through opaque and informal supply chains, leaving workers gagged by NDAs, punished for speaking out, and stripped of basic protections. Grounded in worker testimony and legal analysis, the report provides a concrete roadmap for reform and calls on technology companies, investors, governments, and the ILO to finally center the rights, dignity, and safety of digital workers.

Policy researchers also argue that labor protections can no longer be optional. When digital labor creates billions in value, basic rights like fair pay, mental health support, and worker representation become essential ethical issues. This is the next frontier of responsible AI conversations.

Corporate Responsible AI standards rarely mention worker protections beyond high-level principles. Yet questions about job security, benefits, and working conditions in outsourcing networks are central to true accountability.

What Needs to Change

Real change starts with recognition. Corporations like Microsoft must acknowledge the full scope of human labor embedded in AI systems. Not just high-level oversight teams, but all workers involved in annotation, review, moderation, and refinement of outputs must be visible in the ethical landscape.

Integration of worker welfare into Responsible AI standards could include:

- Fairer compensation across global labor markets

- Mental health safeguards for workers exposed to stressful tasks

- Limits on daily exposure to harmful content

- Transparency about what tasks workers will face before hiring

- Tools to allow worker feedback into AI development

Countries and trade groups are beginning to discuss global safety protocols. The goal is baseline protections that apply regardless of where the work is performed. This includes unionization rights, workplace democracy, and minimum benefit structures for all AI labor roles.

Ultimately, AI ethics must expand its frame. Responsible AI is not just about fairness in model outputs. It must also be about fairness in how the work that produces those outputs is structured and protected.

Microsoft’s official messaging paints a world where AI and humans work together safely and responsibly. It frames human oversight as a safeguard and a moral imperative. But for many workers around the world, human involvement in AI is hard labor, under-recognized, and under-protected.

Corporate principles matter, but so do real working conditions that reflect those principles. Workers who train models, vet outputs, and fine-tune complex systems deserve dignity, protection, and transparency. Until that happens, the term “human-in-the-loop” will remain more of a slogan than a reality for the people who make AI usable. One experienced worker who chose to remain anonymous summed up this tension well:

“We are now professional AI prompters. It feels like we are here to support the illusion of autonomy.”

AI systems might promise efficiency, innovation, and safety. But they cannot escape the fact that humans are still the muscle and the mind behind the machine. Every dataset is cleaned by people. Every toxic post filtered away is absorbed by a human first. Every “automated” judgment depends on thousands of unseen workers training, correcting, reviewing, and enduring the emotional toll that algorithms cannot feel. The glossy language of responsible AI often celebrates breakthroughs and technological brilliance, yet it rarely centers the human cost, the labor conditions, and the lived reality of those who keep these systems functioning.

As long as corporations frame AI as an independent, almost magical intelligence, the people who sustain it remain easy to erase. That erasure has consequences: it makes exploitation easier, justifies underinvestment in worker protections, and turns genuine harms into abstract “system failures” instead of human accountability issues. Real responsibility requires acknowledging that AI is not replacing humans; it is powered by them. Until corporate narratives embrace that truth—and companies are willing to be transparent about who builds and maintains AI, under what conditions, and at what emotional and ethical price—accountability will continue to be a slogan rather than a standard.

Sources

1. Microsoft. “The Microsoft Responsible AI Standard.” www.microsoft.com/en-us/ai/principles-and-approach. Accessed 7 Jan. 2026.

2. “Microsoft Global Human Rights Statement.” www.microsoft.com/en-us/corporate-responsibility/human-rights-statement. Accessed 9 Jan. 2026.

3. Rowe, Niamh. “Millions of Workers Are Training AI Models for Pennies.” WIRED, 12 Oct. 2023, www.wired.com/story/millions-of-workers-are-training-ai-models-for-pennies/. Accessed 9 Jan. 2026.

4. Larsson, Anthony. “The Future of Labour: How AI, Technological Disruption and Practice Will Change the Way We Work.” 9 Feb. 2023, library.oapen.org/bitstream/handle/20.500.12657/103409/9781040378014.pdf. Accessed 9 Jan. 2026.

5. Perrigo, Billy. “AI Gig Workers Face ‘Unfair Working Conditions,’ Study Says.” TIME, 20 July 2023, time.com/6296196/ai-data-gig-workers/. Accessed 9 Jan. 2026.

6. “Tech Equity.” 30 Sept. 2025, techequity.us/2025/09/30/ghost-workers-in-the-machine/. Accessed 9 Jan. 2026.

7. Reddit. www.reddit.com/r/developersIndia/comments/1cysdnc/it_has_started_managers_are_thinking_copilot_as/. Accessed 9 Jan. 2026.

8. “OII | Fairwork AI report: Improving employment conditions for invisible internet workers.” www.oii.ox.ac.uk/news-events/fairwork-ai-report-improving-employment-conditions-for-invisible-internet-workers/. Accessed 9 Jan. 2026.

9. Heaven, Will Douglas. “AI needs to face up to its invisible-worker problem.” MIT Technology Review, 11 Dec. 2020, www.technologyreview.com/2020/12/11/1014081/ai-machine-learning-crowd-gig-worker-problem-amazon-mechanical-turk/. Accessed 9 Jan. 2026.

10. CBS News. “Training AI takes heavy toll on Kenyans working for $2 an hour | 60 Minutes.” CBS News, 29 June 2025, www.cbsnews.com/video/ai-workers-kenya-60-minutes-video-2025-06-29/. Accessed 9 Jan. 2026.

11. “ILO.” www.ilo.org/meetings-and-events/artificial-intelligence-ai-and-non-discrimination-world-work. Accessed 9 Jan. 2026.

12. AI Now Institute. “AI Now 2019 Report.” ainowinstitute.org/wp-content/uploads/2023/04/AI_Now_2019_Report.pdf. Accessed 9 Jan. 2026.

13. Bhattacharjee, Shikha Silliman. “Scroll. Click. Suffer. The Hidden Human Cost of Content Moderation and Data Labelling.” 1 Jan. 2025, www.academia.edu/129608986/Scroll_Click_Suffer_The_Hidden_Human_Cost_of_Content_Moderation_and_Data_Labelling. Accessed 9 Jan. 2026.